The Post-Hype Reality: Why the Era of “AI-Powered” is Over (And What Comes Next)

If your primary software vendor recently added a shiny, sparkle-icon button to their user interface, called it “AI-powered,” and subsequently increased your licensing fee by 20%, you are not alone. And you are not innovating. You are being taxed.

We have officially reached the peak of inflated expectations on the Gartner hype cycle, and the trough of disillusionment is right around the corner. Over the past two years, companies rushed to buy generative tools. The mandate from the board was simple: Do AI. So, management bought ChatGPT licenses, bolted a chatbot onto the customer service portal, and waited for the massive efficiency gains that the headlines promised.

Now, the CFO is asking for the receipts. And for most companies, those receipts are looking incredibly thin.

The era of the “AI-powered” wrapper is dead. What most people miss is that buying a tool is not the same as redesigning a business. If you are a CEO, CTO, or business leader in 2026, the question is no longer about which language model is the smartest. The real question is how you transition from buying shiny AI features to building fundamentally AI-native operating models. Let’s break down exactly what that looks like, and why the winners of the next decade are shifting their focus from experimentation to disciplined execution.

The Death of the “AI-Powered” Wrapper

Let’s be honest. Tacking the word “AI” onto a mediocre product doesn’t make it a good product. It just makes it an expensive one.

Between 2023 and 2025, the market was flooded with “wrappers.” These were essentially legacy software platforms that bolted an API connection to a large language model (LLM) onto their existing, clunky workflows. They didn’t change how the software worked; they just added a chat interface on top of it.

Why Bolting an LLM Onto a Legacy System Doesn’t Fix a Broken Process

Here is the catch with the wrapper strategy: if your underlying business process is broken, adding AI just helps you execute a broken process much faster.

Imagine a procurement department that requires seven different manual approvals, a labyrinth of email threads, and cross-referencing three outdated spreadsheets just to onboard a new vendor. An “AI-powered” wrapper might help an employee draft the vendor approval emails in five seconds instead of five minutes. Sounds great, right?

It’s not. The core friction—the seven approvals and the disconnected data silos—still exists. The AI didn’t solve the business problem; it just applied a temporary bandage to a symptom. This fundamental misunderstanding of workflow vs. technology is exactly why AI transformations fail before they ever reach scale. Companies try to force-fit a revolutionary technology into an evolutionary, outdated operational model.

Top management must stop buying technology that merely assists human bottlenecks. The goal isn’t to help your employees tolerate bad internal systems. The goal is to eliminate those systems entirely.

The Shift from “Copilots” to “Agents”

The first wave of AI adoption was defined by the “copilot.” A copilot is exactly what it sounds like: a digital assistant that sits next to a human operator, offering suggestions, auto-completing code, or summarizing meeting notes. Copilots are helpful, but they have a fatal flaw. They require constant, undivided human supervision.

The Adult in the Room: Moving to Autonomous Workflows

We are now transitioning out of the copilot era and into the agentic era. According to recent insights from Bain & Company, the shift from generative AI to autonomous agentic AI is happening faster than anticipated.

An AI agent doesn’t just draft an email; it receives an objective, plans a sequence of actions, logs into your CRM, updates the client record, drafts the communication, sends it, and logs the response—all without a human clicking “approve” at every single step.

But moving to autonomous agents requires adult supervision at the architectural level. You cannot let agents loose in your tech stack based on vague prompts and good vibes. You have to shift to rigid, spec-driven development. When an AI moves from advising a human to executing actions on behalf of the company, the engineering standards must elevate. CTOs must build deterministic rails around probabilistic models. If you don’t, you aren’t building a digital workforce; you are building a liability.
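One way to picture "deterministic rails around probabilistic models" is a plain validation layer that checks every action an agent proposes against a written spec before anything executes. The sketch below is illustrative only: the action names, the `ALLOWED_ACTIONS` spec, and the `validate_action` helper are hypothetical and not tied to any particular agent framework.

```python
# Hypothetical spec: which actions an agent may take, with which arguments,
# and whether a human must sign off before execution.
ALLOWED_ACTIONS = {
    "update_crm_record": {"required": {"client_id", "fields"}, "needs_approval": False},
    "send_email": {"required": {"to", "subject", "body"}, "needs_approval": True},
}


def validate_action(action: dict) -> str:
    """Return 'execute', 'hold_for_approval', or 'reject' for a proposed action."""
    spec = ALLOWED_ACTIONS.get(action.get("name"))
    if spec is None:
        return "reject"  # the action is not in the spec at all
    missing = spec["required"] - set(action.get("args", {}))
    if missing:
        return "reject"  # the model omitted a required argument
    return "hold_for_approval" if spec["needs_approval"] else "execute"


# The model can propose anything; only in-spec actions get through.
proposed = {"name": "delete_all_records", "args": {}}
print(validate_action(proposed))  # reject
```

The model stays probabilistic, but the decision about what is allowed to run is ordinary, testable code.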

The CFO’s Dilemma: Measuring Real ROI in the Post-Hype Era

If there is one person in the C-suite who is immune to the AI hype, it is the Chief Financial Officer. The CFO does not care if an AI model can write a sonnet in the style of Shakespeare. The CFO cares about margin expansion, cost-to-serve, and revenue growth.

Right now, enterprise leaders are drowning in what MIT Sloan calls “soft ROI.” Soft ROI is the illusion of productivity.

Why Saving 3 Hours a Week Means Nothing

Software vendors love to sell soft ROI. Their pitch sounds like this: “Our AI-powered tool will save every employee on your team three hours a week!”

The management team hears this, multiplies three hours by 500 employees, multiplies that by the average hourly wage and the number of working weeks in a year, and calculates a massive, multimillion-dollar return on investment. They sign the contract. A year later, they look at the income statement. Revenue hasn’t gone up. Headcount costs haven’t gone down. The multimillion-dollar ROI is nowhere to be found.

What most people miss is the efficiency paradox. If you save an employee three hours a week, and you do not systematically redirect those three hours into a tracked, revenue-generating activity, you haven’t saved the company a single dollar. You have simply subsidized your employee’s free time. They are going to spend those three hours scrolling LinkedIn or taking a longer lunch.
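To make the gap concrete, here is a rough sketch of the vendor's arithmetic next to what actually lands on the books. The 3 hours and 500 employees come from the pitch above; the $50 hourly wage, the 48 working weeks, and the function names are assumptions chosen purely for illustration.

```python
def soft_roi(hours_saved_per_week: float, employees: int,
             hourly_wage: float, weeks_per_year: int = 48) -> float:
    """Annual 'savings' the way a vendor pitch computes them."""
    return hours_saved_per_week * employees * hourly_wage * weeks_per_year


def realized_value(nominal: float, share_redirected: float) -> float:
    """Only saved time that is redirected into tracked work counts."""
    return nominal * share_redirected


# Assumed inputs: $50/hour and 48 working weeks are illustrative, not from the text.
nominal = soft_roi(3, 500, 50.0)
print(f"Nominal (soft) ROI:            ${nominal:,.0f}")
print(f"Realized if 0% is redirected:  ${realized_value(nominal, 0.0):,.0f}")
print(f"Realized if 50% is redirected: ${realized_value(nominal, 0.5):,.0f}")
```

Under these assumptions the headline figure is $3.6 million, and the realized figure is whatever share of those hours you can actually trace to tracked work.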

In the post-hype reality, top management must demand hard ROI. You measure this by tracking concrete metrics:

- Reduction in cost-per-transaction

- Deflection rate of Tier-1 support tickets

- Accelerated time-to-market for new code deployments

- Direct increase in outbound sales conversion rates

If your AI implementation strategy does not tie directly to one of these hard metrics, it is a research project, not a business strategy.
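Two of these metrics are simple enough to compute from data most teams already have. The sketch below uses common definitions (total cost divided by transactions, and tickets resolved without a human divided by all tickets); those definitions and every number in the example are assumptions for illustration, not figures from a real deployment.

```python
def cost_per_transaction(total_cost: float, transactions: int) -> float:
    """Total operating cost divided by the number of transactions handled."""
    return total_cost / transactions


def deflection_rate(resolved_without_human: int, total_tickets: int) -> float:
    """Share of Tier-1 tickets closed without reaching a human agent."""
    return resolved_without_human / total_tickets


def pct_change(before: float, after: float) -> float:
    """Percentage change from a baseline period to the current period."""
    return (after - before) / before * 100


# Hypothetical before/after figures for one quarter.
before = cost_per_transaction(120_000, 40_000)
after = cost_per_transaction(90_000, 40_000)
print(f"Cost per transaction: ${before:.2f} -> ${after:.2f} ({pct_change(before, after):+.1f}%)")
print(f"Tier-1 deflection rate: {deflection_rate(1_200, 4_000):.0%}")
```

The point is less the formulas than the discipline: pick the metric before the rollout, record the baseline, and compare like with like afterwards.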

Rebuilding the Stack: What CTOs Actually Need to Focus On

While the CEO and CFO are arguing over business metrics, the CTO is left holding a fragmented, chaotic tech stack. During the hype cycle, engineering teams were pressured to stand up AI features quickly to appease the board. This led to a massive accumulation of technical debt.

Designing for Context Retention and Avoiding the Hallucination Trap

The mandate for technology leaders today is to stop building shiny front-end chat interfaces and start fixing the backend data architecture.

Harvard Business Review notes that the primary bottleneck for enterprise AI deployment is no longer the intelligence of the model, but the quality of the proprietary data feeding it. If your internal data is unstructured, siloed, and full of conflicting information, your AI agent will be confident, articulate, and completely wrong.

Furthermore, as you deploy agents to execute workflows, CTOs must guard against instruction misalignment. This occurs when an AI system technically follows the prompt it was given but completely violates the intent of the business rule because it lacks structural context.

To rebuild the stack for the post-hype era, CTOs need to focus on three critical pillars:

- Unified Data Lakes: AI cannot reason across systems if your marketing data lives in HubSpot, your financial data in Oracle, and your product data in Jira, with no connective tissue between them.

- Retrieval-Augmented Generation (RAG) Integrity: Ensuring the system pulls the correct, most recent internal documentation before it generates an answer or takes an action.

- Auditability: When an agentic system makes a mistake (and it will), your engineers must be able to trace the exact logical path the model took to reach that conclusion. Black-box decision-making is unacceptable in an enterprise environment.
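As a rough picture of what auditability can mean in practice, the sketch below records every step an agent takes, along with its inputs, output, and the documents it retrieved, so the path can be replayed later. The `TraceStep` and `AuditTrail` names and the example task are hypothetical; a production system would write to durable, append-only storage instead of an in-memory list.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class TraceStep:
    step: int
    action: str
    inputs: dict
    output: str
    sources: list  # IDs of the documents retrieved for this step
    at: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


class AuditTrail:
    """In-memory trace of one agent task (illustrative only)."""

    def __init__(self, task_id: str):
        self.task_id = task_id
        self.steps = []

    def record(self, action: str, inputs: dict, output: str, sources=()):
        self.steps.append(
            TraceStep(len(self.steps) + 1, action, inputs, output, list(sources))
        )

    def to_json(self) -> str:
        return json.dumps(
            {"task_id": self.task_id, "steps": [asdict(s) for s in self.steps]},
            indent=2,
        )


trail = AuditTrail("vendor-onboarding-001")  # hypothetical task ID
trail.record("retrieve_policy", {"query": "vendor approval"}, "found 2 docs", ["policy-v3"])
trail.record("draft_email", {"to": "procurement"}, "draft created")
print(trail.to_json())
```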

The New Mandate for Top Management

You cannot delegate a fundamental business transformation to a mid-level IT manager.

According to McKinsey, organizations where the CEO actively champions and tracks the AI strategy achieve a 20% higher return on their digital investments compared to companies where the strategy is outsourced to siloed departments.

Redesigning Headcount and Owning the Strategy

The era of “AI-powered” tools allowed management to be passive. You bought a software license, handed it to the marketing team, and crossed your fingers. The era of AI-native operating models requires top management to be aggressively active.

You have to rethink headcount. If AI agents are now capable of handling 40% of your routine data processing and initial customer triage, you do not necessarily need to fire 40% of your staff. But you absolutely must redesign their roles. Your human workforce needs to transition from “doers” of repetitive tasks to “managers” of digital agents.

This requires a massive upskilling initiative focused on systems thinking. Your team needs to know how to validate AI outputs, how to structure complex workflows, and how to intervene when an autonomous agent encounters an edge case it cannot solve.

The real win here is not replacing human intelligence; it is elevating it. When you strip away the administrative burden of the modern workday, you free your best talent to focus on high-judgment, high-empathy, and high-strategy work—the things machines still cannot do.

Move the Needle

The hype cycle was loud, chaotic, and largely unproductive. But the post-hype reality is where the actual fortunes will be made.

The winners of the next decade won’t be the companies that brag about how many AI tools they bought. They will be the companies that quietly and methodically redesigned their core business processes around autonomous workflows, demanded hard financial returns, and treated AI not as a feature, but as a foundation.

Stop buying into the “AI-powered” marketing noise. Realign your executive team, clean up your data architecture, and focus entirely on execution. The technology is finally ready. The real question is: are you?

Frequently Asked Questions

1. What is the difference between AI copilots and autonomous AI agents?

AI copilots act as digital assistants that require constant human prompting, supervision, and approval to complete tasks. Autonomous AI agents, however, are given a high-level objective and can independently plan, execute, and course-correct multi-step workflows across an organization's tech stack with minimal human intervention.

2. How can executives measure the true ROI of enterprise AI?

True enterprise AI ROI must be measured through "hard" metrics rather than "soft" time-saving estimates. CFOs should track concrete data points like reduction in cost-per-transaction, deflection rate of Tier-1 support tickets, accelerated time-to-market for code deployments, and direct increases in outbound sales conversion rates.

3. Why are most "AI-powered" software wrappers failing to deliver value?

"AI wrappers" simply bolt a large language model (LLM) onto legacy software to help employees execute outdated processes slightly faster. They fail to deliver scalable value because they treat the symptoms of bad operational design rather than fundamentally fixing the underlying broken business workflows.

4. What does it mean to build an AI-native operating model?

An AI-native operating model means redesigning your core business processes from the ground up with the assumption that autonomous digital agents will execute the majority of routine tasks. It requires unified data architecture, strict output auditability, and a human workforce upskilled to manage systems rather than execute manual inputs.

5. How should a CTO prepare our data architecture for enterprise AI?

CTOs must transition away from siloed applications and build unified data lakes. For AI to execute complex reasoning, it requires structured, clean, and highly integrated proprietary data. Additionally, CTOs must implement robust Retrieval-Augmented Generation (RAG) frameworks to ensure agents pull the most accurate internal context before making decisions.

6. What are the biggest risks of deploying autonomous AI agents?

The primary risk is instruction misalignment, where an agent follows a technical prompt but violates the intent of the business rule due to a lack of structural context. Other major risks include confident data hallucinations and the accumulation of technical debt from rushing deployments without proper governance and auditability frameworks.

7. Why should the CEO lead the AI strategy instead of the IT department?

When AI is delegated solely to IT, it becomes a siloed technology project rather than a strategic transformation. Because shifting to agentic AI deeply impacts business models, headcount costs, and corporate risk profiles, the CEO must own the strategy to ensure technology deployments align perfectly with top-line revenue goals.

8. What is the "efficiency paradox" in AI implementation?

The efficiency paradox occurs when a company uses AI to save an employee several hours a week, but fails to systematically redirect that saved time into revenue-generating activities. As a result, the company incurs the cost of the AI software without actually saving any money or increasing overall output.

9. How do we transition from vibe coding to spec-driven AI development?

Transitioning requires moving away from casual, prompt-based experimentation ("vibe coding") and enforcing rigorous engineering standards. CTOs must build deterministic guardrails around probabilistic AI models, ensuring that every autonomous action is trackable, auditable, and tied to strict business logic specifications.

10. How do we upskill our workforce for the agentic AI era?

Top management must shift their training focus from basic "prompt engineering" to "systems thinking." Employees need to be upskilled to act as managers and auditors of digital agents—learning how to validate AI outputs, design logical workflows, and intervene effectively when an autonomous system encounters a complex edge case.

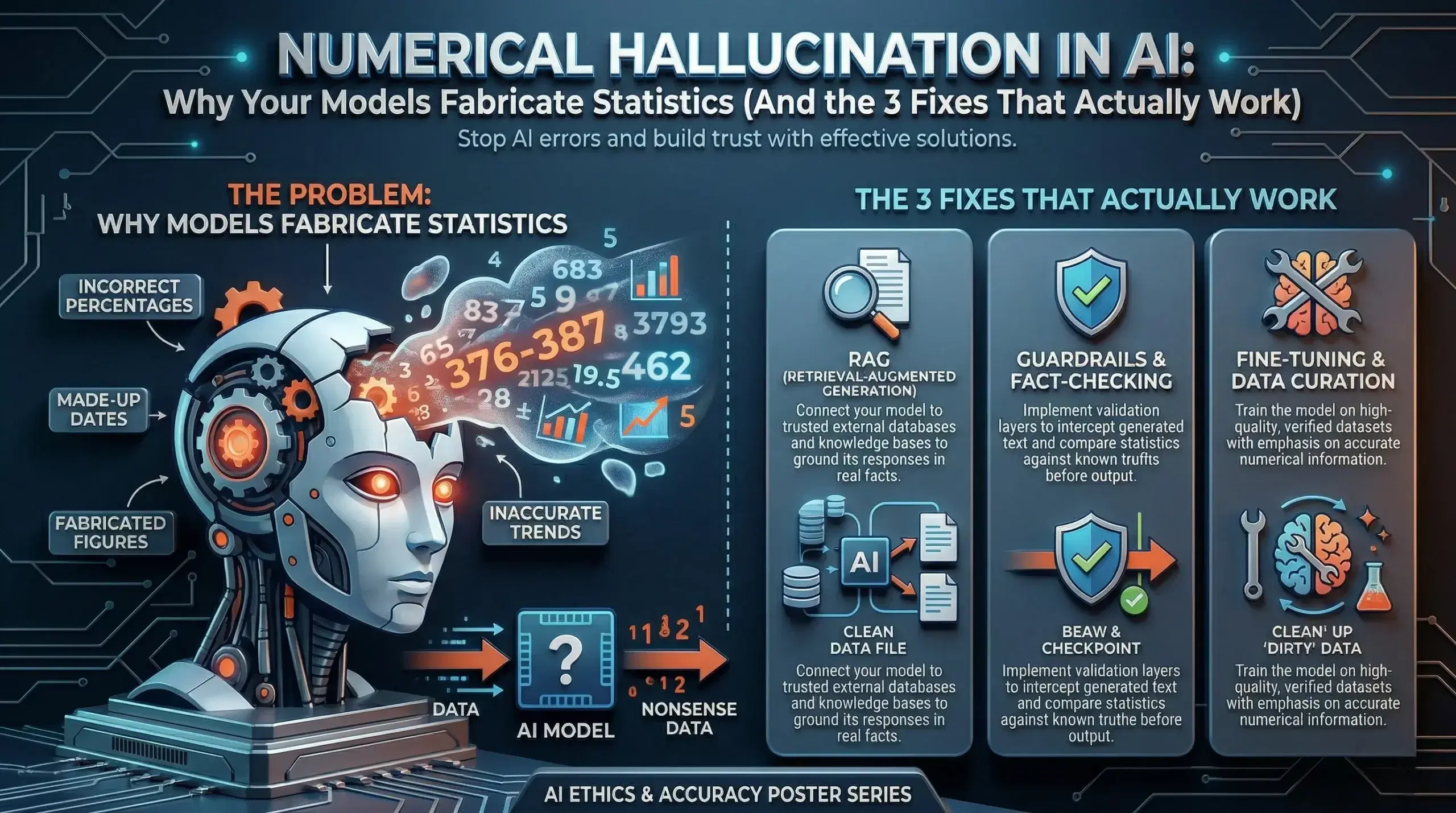

Numerical Hallucination in AI: Why Your Model Is Lying About Numbers (And What to Do About It)

Here’s something that should make every business leader pause: your AI system might be confidently wrong — and you’d never know by reading the output.

Not wrong in an obvious way. Not a garbled sentence or a broken response. Wrong in the worst possible way — a number that looks real, sounds authoritative, and passes straight through your team’s review process. That’s numerical hallucination in AI, and it’s one of the most underestimated risks in enterprise AI adoption today.

If your business uses AI to generate reports, financial summaries, research insights, or any data-driven content, this isn’t a theoretical problem. It’s a real one, and it’s happening right now in systems across industries.

Let’s break down exactly what it is, why it happens, and — more importantly — how you fix it.

What Is Numerical Hallucination in AI?

Numerical hallucination in AI is when a language model generates incorrect numbers, statistics, percentages, or calculations — and presents them as fact.

The model doesn’t “know” it’s wrong. That’s what makes this so dangerous. AI language models are trained to predict what text should come next based on patterns. When you ask a model a quantitative question, it generates what a plausible answer looks like — not what the actual answer is.

The result? Things like:

- “India’s literacy rate is 91%.” (The actual figure from credible government data is closer to 77–78%.)

- An AI-generated financial projection that inflates a 3-year growth rate by 15 percentage points.

- A market research summary that cites a statistic from a study that doesn’t exist.

These aren’t typos. They’re confident, fluent, and completely fabricated — and that combination is what makes quantitative AI errors so costly.

Why Does AI Hallucinate Numbers Specifically?

This is the part most AI explainers skip, and it’s worth understanding if you’re making decisions about AI deployment.

Language models learn from text. Enormous amounts of it. But text doesn’t always contain verified numerical data. A model trained on web content has seen millions of sentences with numbers — some accurate, many outdated, some just plain wrong. The model doesn’t store a database of facts. It stores patterns of how information is expressed.

So when you ask “What is the global e-commerce market size?”, the model doesn’t look it up. It generates a number that fits the expected shape of that kind of answer. If the training data contained that figure cited as “$4.9 trillion” in some contexts and “$6.3 trillion” in others, the model may generate either — or something in between.

There are a few specific reasons AI models struggle with quantitative accuracy:

No grounded memory. Standard large language models don’t have access to live databases. They’re working from a frozen snapshot of training data.

Numerical interpolation. Models sometimes blend or interpolate between different figures they’ve seen during training, producing numbers that feel statistically plausible but aren’t tied to any real source.

Overconfidence without verification. Unlike a human analyst who would flag uncertainty, an AI model presents all outputs with the same confident tone — whether it’s correct or not.

Outdated training data. If a model’s training data cuts off in 2023, and you’re asking about 2024 market figures, the model will still generate something — it just won’t be grounded in anything real.

This is why statistical errors in AI systems aren’t random flukes. They’re structural. And they require structural fixes.

The Real Cost of Quantitative AI Errors in Business

Let’s be honest — if an AI writes an oddly phrased sentence, someone catches it. But when an AI generates a plausible-looking number in a market analysis or quarterly report, most teams don’t question it.

Here’s what that looks like in practice:

A strategy team uses an AI-generated competitive analysis. The model cites a competitor’s market share as 34%. The real figure is 21%. Pricing decisions, positioning, and resource allocation get shaped around a number that was never real.

Or consider a healthcare organisation using AI to summarise clinical data. An incorrect dosage percentage slips through. The downstream consequences in that kind of environment don’t need spelling out.

Incorrect financial projections from AI models have already influenced board-level discussions in enterprise companies. The damage isn’t always visible immediately — that’s what makes it compound over time.

This is the operational risk that most AI adoption frameworks underestimate. And it’s the reason AI accuracy validation has to be built into deployment, not bolted on after the fact.

3 Proven Fixes for Numerical Hallucination in AI

The good news is this problem is solvable. Not perfectly, not with a single toggle — but systematically, with the right architecture.

Fix 1: Tool Integration — Connect AI to Real Data Sources

The most direct fix for AI generating false numbers is to stop asking it to recall numbers at all.

When AI models are connected to live tools — calculators, databases, APIs, or retrieval systems — they stop generating numerical answers from memory. Instead, they pull real figures from verified sources and present those.

Think of it like the difference between asking someone to recall a phone number from memory versus handing them a phone book. The output reliability changes completely.

This pattern is commonly called tool use or function calling, and it is closely related to Retrieval-Augmented Generation (RAG) applied to structured data. For any business-critical numerical output, it should be the baseline, not the exception.

If your AI deployment is generating financial data, compliance figures, or statistical summaries without being grounded to a live data source, that’s a structural gap. Not a model limitation — a deployment design gap.
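A minimal sketch of the idea: the model decides which calculation is needed and with which inputs, and deterministic code produces the number. The `TOOLS` registry, the request format, and the `cagr` helper are assumptions for illustration and do not reflect any specific vendor's function-calling API.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate, computed in code and never 'recalled'."""
    return (end / start) ** (1 / years) - 1


# Hypothetical tool registry: the model may only request tools listed here.
TOOLS = {"cagr": cagr}


def run_tool(request: dict) -> float:
    """Execute a structured tool request emitted by the model."""
    return TOOLS[request["tool"]](**request["args"])


# Instead of writing a growth figure itself, the model emits a request like this:
request = {"tool": "cagr", "args": {"start": 100.0, "end": 133.1, "years": 3}}
print(f"3-year CAGR: {run_tool(request):.1%}")
```

The inputs would themselves come from a verified source in a real deployment; the model's job shrinks to choosing the tool and wording the result.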

Fix 2: Structured Numeric Validation

Even when AI models are well-designed, errors can slip through. Structured numeric validation adds a verification layer that catches quantitative inconsistencies before they reach end users.

This works in a few ways:

- Range checks — If an AI model generates a figure that falls outside a statistically reasonable range for that metric, the system flags it.

- Cross-reference validation — The generated number is compared against a known baseline or dataset before being output.

- Confidence tagging — AI systems can be configured to attach uncertainty signals to numerical claims, prompting human review when confidence is low.

This kind of AI output validation is particularly important in regulated industries — financial services, healthcare, legal — where a single incorrect figure can trigger compliance issues or erode trust instantly.

The key shift here is moving from treating AI output as final to treating it as a first draft that passes through validation before it matters.
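The first two checks can be sketched in a few lines. The `validate_figure` function, its 10% tolerance, and the flag names are assumptions made for this example; the market-share numbers reuse the 34% versus 21% scenario from earlier in the article.

```python
def validate_figure(value: float, low: float, high: float,
                    baseline: float = None, tolerance: float = 0.10) -> list:
    """Return a list of flags; an empty list means the figure passed."""
    issues = []
    # Range check: is the value even possible for this metric?
    if not (low <= value <= high):
        issues.append("out_of_range")
    # Cross-reference check: does it stray too far from a trusted baseline?
    if baseline is not None and abs(value - baseline) > tolerance * abs(baseline):
        issues.append("deviates_from_baseline")
    return issues


# A generated market share of 34% against a trusted baseline of 21%.
print(validate_figure(34, low=0, high=100, baseline=21))  # ['deviates_from_baseline']
print(validate_figure(120, low=0, high=100))              # ['out_of_range']
```

Anything that comes back with a flag goes to a human reviewer instead of straight into the report.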

Fix 3: Grounded Data Retrieval

Grounded data retrieval means designing your AI system so that every significant numerical claim has a retrievable, attributable source — not just a generated output.

This goes beyond basic RAG. Grounded retrieval means the AI system cites where a number came from, and that citation is verifiable. If the system can’t find a grounded source for a figure, it says so — rather than filling the gap with a plausible-sounding fabrication.

For enterprise teams, this changes the accountability model for AI-generated content. Instead of “the AI said this,” your team can say “this figure came from [source], retrieved on [date].” That’s the difference between AI as a liability and AI as a trustworthy analytical tool.
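In code, that accountability model can be as simple as refusing to emit a number that has no source attached. The `GroundedFigure` type, the `cite` helper, and the example store below are hypothetical; the source name and date are placeholders, not real data.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class GroundedFigure:
    value: float
    source: str         # where the number came from
    retrieved_on: date  # when it was pulled


def cite(metric: str, store: dict) -> str:
    """Return the figure with its citation, or say plainly that none exists."""
    fig = store.get(metric)
    if fig is None:
        return f"No grounded source found for '{metric}'."
    return (f"{metric}: {fig.value} "
            f"(source: {fig.source}, retrieved {fig.retrieved_on.isoformat()})")


# Placeholder store; in practice this is backed by a retrieval system.
store = {
    "competitor_market_share_pct": GroundedFigure(21.0, "internal BI export", date(2025, 1, 15)),
}
print(cite("competitor_market_share_pct", store))
print(cite("competitor_headcount", store))
```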

Grounded data retrieval is especially important in AI applications for knowledge management, market intelligence, and regulatory reporting — three areas where the cost of an AI accuracy problem is highest.

What This Means for Leaders Deploying AI

If you’re a CTO, CDO, or business leader evaluating or scaling AI systems right now, here’s the real question: how does your current AI deployment handle numerical outputs?

If the answer is “the model generates them,” that’s the gap.

The organisations that are getting the most value from AI right now aren’t the ones running the most powerful models. They’re the ones that have built the right guardrails — verification layers, grounded data pipelines, and structured validation — so their AI outputs are trustworthy at scale.

Numerical hallucination in AI isn’t an argument against using AI. It’s an argument for using it correctly.

The difference between an AI system that creates risk and one that creates value is often not the model itself. It’s the architecture around it.

The Bottom Line

AI language models are not databases. They don’t recall facts — they generate plausible text. For most tasks, that’s good enough. For anything numerical, that distinction is critical.

The fix isn’t to avoid AI for quantitative work. The fix is to build AI systems where numbers are retrieved, not recalled — validated, not assumed — and always traceable to a real source.

If you’re building or scaling AI systems in your organisation and want to get the architecture right from the start, that’s exactly what we help with at Ai Ranking. Because a confident AI that’s confidently wrong is worse than no AI at all.

Ysquare Technology

09/04/2026

Why AI Agents Drown in Noise (And How Digital RAS Filters Save Your ROI)

You gave your AI agent access to everything. Every document. Every Slack message. Every PDF your company ever produced. You scaled the context window from 32k tokens to 128k, then to a million.

And somehow, it got worse.

Your agent starts strong on a task, then by step three, it’s summarizing the marketing team’s holiday schedule instead of the Q3 sales data you asked for. It hallucinates facts. It drifts off course. It burns through your token budget processing irrelevant footnotes and disclaimers that add zero value to the output.

Here’s what most people miss: the problem isn’t that your AI doesn’t have enough context. The problem is it doesn’t know what to ignore.

We’ve built incredible digital brains, but we forgot to give them a brainstem. We’re facing a massive signal-to-noise problem, and the industry’s solution—making context windows bigger—is like turning up the volume when you can’t hear over the crowd. It doesn’t help. It makes things worse.

Let’s talk about why your AI agents are drowning in noise, what your brain does that they don’t, and how to build the filtering system that separates high-value signals from expensive junk.

The Context Window Trap: More Data Doesn’t Mean Better Decisions

The prevailing assumption in most boardrooms is simple: more access equals better intelligence. If we just give the AI “all the context,” it’ll naturally figure out the right answer.

It doesn’t.

Why 1 Million Token Windows Still Produce Hallucinations

Here’s the uncomfortable truth: research shows that hallucinations cannot be fully eliminated under current LLM architectures. Even with enormous context windows, the average hallucination rate for general knowledge sits around 9.2%. In specialized domains? Much worse.

The issue isn’t capacity; it’s attention. When an agent “sees” everything, it suffers from the same cognitive overload a human would face if they couldn’t filter out background noise. As context windows expand, models can start to overweight the accumulated context and underuse what they learned during training.

Google DeepMind’s Gemini 2.5 Pro supports over a million tokens, but begins to drift around 100,000 tokens. The agent doesn’t synthesize new strategies; it just repeats past actions from its bloated context history. Even for a large open model like Llama 3.1 405B, correctness begins to fall around 32,000 tokens.

Think about that. Models fail long before their context windows are full. The bottleneck isn’t size—it’s signal clarity.

The Hidden Cost of Processing “Sensory Junk”

Every time your agent processes a chunk of irrelevant text, you’re paying for it. You are burning budget processing “sensory junk”—irrelevant paragraphs, disclaimers, footers, and data points—that add zero value to the final output.

We’re effectively paying our digital employees to read junk mail before they do their actual work.

When you ask an agent to analyze three months of sales data and draft a summary, it shouldn’t be wading through every tangential Confluence page about office snacks or outdated onboarding docs. But without a filter, the noise is just as loud as the signal.

This is the silent killer of AI ROI. Not the flashy failures—the quiet, invisible drain of processing costs and degraded accuracy that compounds over thousands of queries.

What Your Brain Does That Your AI Agent Doesn’t

Your brain processes roughly 11 million bits of sensory information per second. You’re consciously aware of about 40 of them.

How? The Reticular Activating System (RAS)—a pencil-width network of neurons in your brainstem that acts as a gatekeeper between your subconscious and conscious mind.

The Reticular Activating System Explained in Plain English

The RAS is a net-like formation of nerve cells lying deep within the brainstem. It activates the entire cerebral cortex with energy, waking it up and preparing it for interpreting incoming information.

It’s not involved in interpreting what you sense—just whether you should pay attention to it.

Right now, you’re not consciously aware of the feeling of your socks on your feet. You weren’t thinking about the hum of your HVAC system until I mentioned it. Your RAS filtered those inputs out because they’re not relevant to your current goal (reading this article).

But if someone says your name across a crowded room? Your RAS snaps you to attention instantly. It’s constantly scanning for what matters and discarding what doesn’t.

Selective Ignorance vs. Total Awareness

Here’s the thing: without the RAS, your brain would be paralyzed by sensory overload. You wouldn’t be able to function. You would be awake, but effectively comatose, drowning in a sea of irrelevant data.

That’s exactly what’s happening to AI agents right now.

We’re obsessed with giving them total awareness—massive context windows, sprawling RAG databases, access to every system and document. But we’re not giving them selective ignorance. We’re not teaching them what to filter out.

When agents can’t distinguish signal from noise, they become what we call “confident liars in your tech stack”—producing outputs that sound authoritative but are fundamentally wrong.

Three Ways Noise Kills AI Agent Performance

Let’s get specific. Here’s exactly how information overload destroys your AI agents’ effectiveness—and your budget.

Hallucination from Pattern Confusion

When an agent is drowning in data, it tries to find patterns where none exist. It connects dots that shouldn’t be connected because it cannot distinguish between a high-value signal (the Q3 financial report) and low-value noise (a draft email from 2021 speculating on Q3).

The agent doesn’t hallucinate because it’s creative. It hallucinates because it’s confused.

Poor retrieval quality is the #1 cause of hallucinations in RAG systems. When your vector search pulls semantically similar but irrelevant documents, the agent fills gaps with plausible-sounding nonsense. And because language models generate statistically likely text, not verified truth, it sounds perfectly reasonable—even when it’s completely wrong.

Task Drift and Goal Abandonment

You give your agent a multi-step goal: “Analyze last quarter’s customer support tickets and identify the top three product issues.”

Step one: pulls support tickets. Good.

Step two: starts analyzing. Still good.

Step three: suddenly summarizes your customer success team’s vacation policy.

What happened? The retrieved documents contained irrelevant details, and the agent, lacking a filter, drifted away from the primary goal. It lost the thread because the noise was just as loud as the signal.

Without goal-aware filtering, agents treat every piece of information as equally important. A compliance footnote gets the same attention weight as the core data you actually need. The result? Context drift hallucinations that derail entire workflows—agents that need constant human supervision to stay on track.

Token Burn Rate Destroying Your Budget

Let’s do the math. Every irrelevant paragraph your agent processes costs tokens. If you’re running Claude Sonnet at $3 per million input tokens and your agent processes 500k tokens per complex task—but 300k of those tokens are junk—you’re paying $0.90 per task for literally nothing.

Scale that to 10,000 tasks per month. You’re burning $9,000 monthly on noise.
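The same arithmetic as a small script, so you can plug in your own numbers. The price, token counts, and task volume are the ones used above; the function name is just an illustration.

```python
def wasted_cost_per_task(junk_tokens: int, price_per_million: float) -> float:
    """Input-token spend on content that adds nothing to the output."""
    return junk_tokens * price_per_million / 1_000_000


PRICE_PER_MILLION = 3.00   # USD per million input tokens, as in the example
JUNK_TOKENS = 300_000      # irrelevant tokens out of 500,000 per task
TASKS_PER_MONTH = 10_000

per_task = wasted_cost_per_task(JUNK_TOKENS, PRICE_PER_MILLION)
monthly = per_task * TASKS_PER_MONTH
print(f"Wasted per task:  ${per_task:.2f}")
print(f"Wasted per month: ${monthly:,.0f}")
print(f"Share of input spend wasted: {JUNK_TOKENS / 500_000:.0%}")
```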

Larger context windows don’t solve the attention dilution problem. They can make it worse. More tokens in = higher costs + slower response times + more opportunities for the model to latch onto irrelevant information.

This is why understanding AI efficiency and cost control is critical before scaling your deployment.

Building a Digital RAS: The Three-Pillar Architecture

So how do we fix this? How do we give AI agents the equivalent of a biological RAS—a system that filters before processing, focuses on goals, and escalates when uncertain?

Here are the three pillars.

Pillar 1 — Semantic Routing (Filtering Before Retrieval)

Your biological RAS filters sensory input before it reaches your conscious mind. In AI architecture, we replicate this with semantic routers.

Instead of giving a worker agent access to every tool and every document index simultaneously, the semantic router analyzes the task first and routes it to the appropriate specialized subsystem.

Example: If the task is “Find compliance risks in this contract,” the router sends it to the legal knowledge base and compliance toolset—not the entire company wiki, not the HR policies, not the engineering docs.

Monitor and optimize your RAG pipeline’s context relevance scores, because poor retrieval is a leading cause of hallucinations. Semantic routing helps ensure you’re retrieving from the right sources before you even hit the vector database.

This is selective awareness at the system level. Only relevant knowledge domains get activated.

Pillar 2 — Goal-Aware Attention Bias

Here’s where it gets interesting. Even with the right knowledge domain activated, you need to bias the agent’s attention toward the current goal.

In a Digital RAS architecture, a supervisory agent sets what researchers call “attentional bias.” If the goal is “Find compliance risks,” the supervisor biases retrieval and processing toward keywords like “risk,” “liability,” “regulatory,” and “compliance.”

When the worker agent pulls results from the vector database, the supervisor ensures it filters the RAG results based on the current goal. It forces the agent to discard high-ranking but contextually irrelevant chunks and focus only on what matters.

This transforms the agent from a passive reader into an active hunter of information. It’s no longer processing everything—it’s processing what it needs to complete the goal.
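One way to picture goal-aware filtering is a post-retrieval step that drops chunks unrelated to the goal, however high they ranked. The sketch below is an assumption-laden illustration, not a reference implementation: `Chunk`, `filter_by_goal`, and the similarity scores are hypothetical, and plain substring matching stands in for whatever relevance model a real supervisor would use.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    similarity: float  # score from the vector search (assumed to be in 0..1)


def filter_by_goal(chunks: list[Chunk], goal_keywords: set[str], top_k: int = 3) -> list[Chunk]:
    """Keep only chunks that mention a goal keyword, highest similarity first."""
    relevant = [
        c for c in chunks
        if any(kw in c.text.lower() for kw in goal_keywords)
    ]
    return sorted(relevant, key=lambda c: c.similarity, reverse=True)[:top_k]


# Goal: "Find compliance risks" (keywords taken from the text above).
goal_keywords = {"risk", "liability", "regulatory", "compliance"}
retrieved = [
    Chunk("Vacation policy for the customer success team.", 0.91),
    Chunk("The supplier assumes liability for data breaches.", 0.84),
    Chunk("Regulatory filings are due each quarter.", 0.78),
]
for chunk in filter_by_goal(retrieved, goal_keywords):
    print(chunk.similarity, chunk.text)
```

The vacation-policy chunk ranks highest on raw similarity but is discarded because it matches no goal keyword. Substring matching is crude (for example, "risk" also matches "asterisk"), so treat it as a starting point only.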

Pillar 3 — Confidence-Based Escalation

Your biological RAS knows when to wake you up. When it encounters something it can’t handle on autopilot—a strange noise at night, an unexpected pattern—it escalates to your conscious mind.

AI agents need the same mechanism.

In a well-designed system, agents track their own confidence scores. When uncertainty crosses a threshold—ambiguous input, conflicting data, edge cases outside training distribution—the agent escalates to human review instead of guessing.

The instruction to the agent can be as plain as: “When you don’t have enough information to answer accurately, say ‘I don’t have that specific information.’ Never make up or guess at facts.” This simple principle, backed by a confidence threshold, can prevent many hallucination-driven failures.

The agent knows what it knows. More importantly, it knows what it doesn’t know—and asks for help.
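A confidence gate of this kind might look like the sketch below. It assumes the agent, or a separate verifier, already produces a confidence score between 0 and 1; how that score is obtained is outside this example. `AgentResult`, `respond_or_escalate`, and the 0.75 threshold are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class AgentResult:
    answer: str
    confidence: float  # assumed 0..1 score supplied by the agent or a verifier


ESCALATION_THRESHOLD = 0.75  # hypothetical cutoff; tune per task type


def respond_or_escalate(result: AgentResult, threshold: float = ESCALATION_THRESHOLD) -> dict:
    """Return the answer when confidence is high enough, otherwise hand off to a human."""
    if result.confidence >= threshold:
        return {"action": "answer", "text": result.answer}
    return {
        "action": "escalate",
        "text": "I don't have that specific information.",
        "reason": f"confidence {result.confidence:.2f} below {threshold:.2f}",
    }


print(respond_or_escalate(AgentResult("Clause 4 creates liability exposure.", 0.92)))
print(respond_or_escalate(AgentResult("Probably fine?", 0.40)))
```

The design choice is that the low-confidence path never returns the agent's guess; it returns the fixed fallback message plus a reason that a human reviewer can act on.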

Real-World Results: What Changes When You Filter Smart

This isn’t theoretical. Organizations implementing Digital RAS principles are seeing measurable improvements across the board.

40% Reduction in Hallucination Rates

Some published evaluations report that knowledge-graph-based retrieval reduces hallucination rates by around 40%. When you combine semantic routing with goal-aware filtering and structured knowledge graphs, you’re giving agents a map, not a pile of documents.

RAG-based context retrieval reduces hallucinations by 40–90% by anchoring responses in verified organizational data rather than relying on general training knowledge. The key word is verified. Filtered, relevant, goal-aligned data—not everything in the database.

60% Lower Token Costs

When your agent processes only what it needs, token consumption drops dramatically. In production deployments, teams report 50–70% reductions in input token costs after implementing semantic routing and attention bias.

You’re not paying to read junk mail anymore. You’re paying for signal.

Faster Response Times Without Sacrificing Accuracy

Smaller, focused context windows process faster. A model with a focused 10K token input may produce fewer hallucinations than a model with a 1M token window suffering from severe context rot, because there’s less noise competing for attention.

Speed and accuracy aren’t trade-offs when you filter smart. They move together.

How to Implement Digital RAS in Your Stack Today

You don’t need to rebuild your entire AI infrastructure overnight. Here’s where to start.

Start with Semantic Routers

Identify the 3-5 distinct knowledge domains your agents need to access. Legal, product, customer support, engineering, finance—whatever makes sense for your use case.

Build routing logic that analyzes the user query or task description and activates only the relevant domain. You can do this with simple keyword matching to start, then upgrade to learned routing as you scale.

The goal: stop giving agents access to everything. Start giving them access to the right thing.
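As a starting point, the keyword-matching router described above could be sketched as follows. The domain names and keyword sets in `DOMAIN_KEYWORDS` are made up for illustration, and `route` is a hypothetical helper; a learned router would replace the overlap count later.

```python
import re

# Hypothetical domains and keywords; replace with your own knowledge domains.
DOMAIN_KEYWORDS = {
    "legal": {"contract", "compliance", "liability", "regulatory"},
    "support": {"ticket", "refund", "customer"},
    "engineering": {"deploy", "api", "latency", "bug"},
}


def route(task: str, default: str = "general") -> str:
    """Pick the domain whose keywords overlap most with the words in the task."""
    words = set(re.findall(r"[a-z]+", task.lower()))
    scores = {domain: len(words & kws) for domain, kws in DOMAIN_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    # Fall back to a default domain when nothing matches at all.
    return best if scores[best] > 0 else default


print(route("Find compliance risks in this contract"))
```

With these keyword sets, the contract task from Pillar 1 overlaps only with the legal domain, so only that knowledge base would be activated.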

Add Supervisory Agents for Goal Tracking

Implement a lightweight supervisor layer that tracks the agent’s current goal and biases retrieval accordingly. This can be as simple as dynamically adjusting vector search filters based on extracted goal keywords.

For more complex workflows, use a supervisor agent that maintains goal state across multi-step tasks and intervenes when the worker agent drifts.

Measure Signal-to-Noise Ratio

You can’t optimize what you don’t measure. Start tracking:

- Context relevance score — What percentage of retrieved chunks are actually relevant to the query?

- Token utilization rate — What percentage of input tokens contribute to the final output?

- Hallucination rate per task type — Track by use case, not aggregate

Context engineering is the practice of curating exactly the right information for an AI agent’s context window at each step of a task. It has replaced prompt engineering as the key discipline.

If your context relevance score is below 70%, you have a noise problem. Fix the filter before you scale the window.
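The context relevance score and the 70% rule of thumb can be computed with very little code. In this sketch the relevance labels are hypothetical; in practice they would come from human review or an automated grader, which is assumed rather than shown here.

```python
def context_relevance_score(relevant_flags: list[bool]) -> float:
    """Fraction of retrieved chunks judged relevant to the query."""
    if not relevant_flags:
        return 0.0
    return sum(relevant_flags) / len(relevant_flags)


def has_noise_problem(score: float, threshold: float = 0.70) -> bool:
    """Apply the 70% rule of thumb from the text."""
    return score < threshold


# Hypothetical labels for ten retrieved chunks (True = relevant).
labels = [True, True, False, True, False, False, True, True, False, True]
score = context_relevance_score(labels)
print(f"context relevance: {score:.0%}, noise problem: {has_noise_problem(score)}")
```

Tracking this number per task type, rather than as a single aggregate, shows which retrieval paths need a better filter first.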

Stop Chasing Bigger Windows. Start Building Smarter Filters.

The race to bigger context windows was always a distraction. The real question was never “How much can my AI see?”

The real question is: “What should my AI ignore?”

Your brain processes millions of inputs per second and stays focused because it has a biological filter—the RAS—that knows what matters and discards the rest. Your AI agents need the same thing.

Stop dumping everything into the context and hoping for the best. Stop paying to process junk. Start building systems that filter before they retrieve, focus on goals, and escalate when uncertain.

Because here’s the thing: the companies winning with AI right now aren’t the ones with the biggest models or the longest context windows. They’re the ones who figured out how to cut through the noise.

If you’re ready to stop wasting budget on irrelevant data and start building agents that actually stay on task, it’s time to rethink your architecture. Not bigger brains. Smarter filters.

That’s the difference between AI that impresses in demos and AI that delivers real ROI in production.


Ysquare Technology

09/04/2026

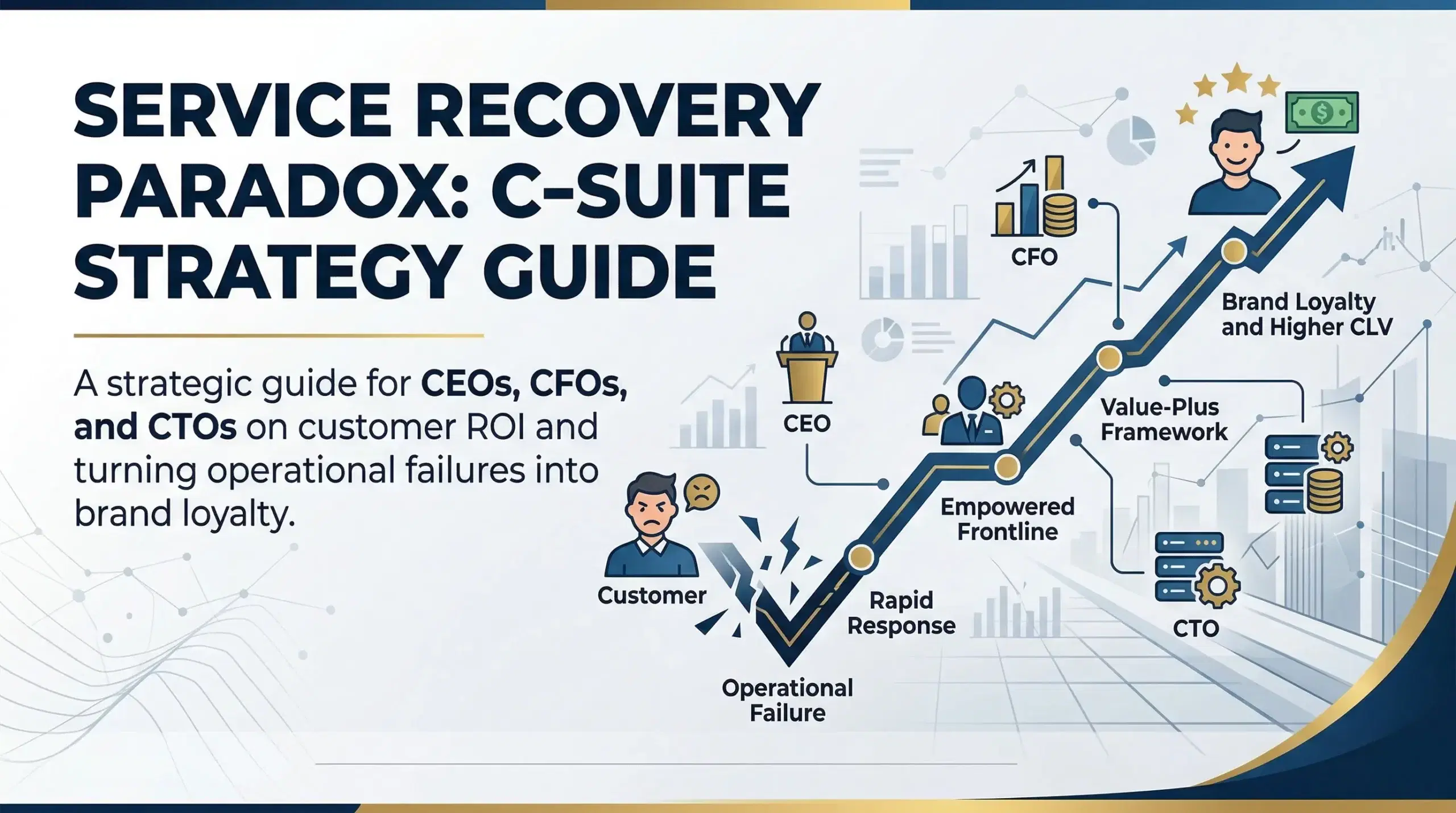

The Service Recovery Paradox: Why Your Worst Operational Failure is Your Greatest Strategic Asset

The modern enterprise ecosystem is hyper-competitive, and boardrooms demand operational perfection. Shareholders expect flawless execution across all departments, clients demand seamless user experiences, and supply chains must run with clockwork precision. Seasoned executives know the hard truth, however: a zero-defect operational environment is a practical impossibility.

Mistakes happen. Cloud servers crash unexpectedly, global events disrupt complex logistics networks, and human error remains a constant in every workforce. Service failures are an inherent byproduct of scaling a business.

However, top leaders view these failures differently. The true differentiator of a legacy-building firm is not the total absence of errors. Instead, it is the strategic mastery of the Service Recovery Paradox (SRP).

When executives manage service failures with precision, planning, and deep empathy, a failure ceases to be a liability and becomes a high-yield business opportunity. A well-handled mistake can build a depth of brand loyalty that a flawless, routine transaction never could. This guide breaks down the core elements of a successful Service Recovery Paradox business strategy so that top management can turn inevitable mistakes into competitive advantages.

The Psychology and Anatomy of the Paradox

Leadership must fundamentally understand why the Service Recovery Paradox exists before deploying capital. Therefore, you must move beyond a simple “fix-it” mindset. You must understand behavioral economics and human psychology.

Defining the Expectancy Disconfirmation Paradigm

The Service Recovery Paradox is a behavioral phenomenon in which a customer’s satisfaction after a well-handled failure exceeds the level it would have reached had no failure occurred. This only happens if the company handles the recovery with exceptional speed, empathy, and unexpected value.

This concept is rooted in the “Expectancy Disconfirmation Paradigm.” Clients sign contracts with your firm expecting a baseline level of competent service. Consequently, when you deliver that service flawlessly, you merely meet expectations. The client’s emotional state remains entirely neutral. After all, they got exactly what they paid for.

However, a service failure breaks this routine. Suddenly, the client becomes hyper-alert, frustrated, and emotionally engaged. You have violated their expectations. Obviously, this is a moment of extreme vulnerability for your brand. Yet, it is also a massive stage. Your company can step onto that stage and execute a heroic recovery. As a result, you completely disconfirm their negative expectations.

You prove your corporate character under intense pressure. Furthermore, this creates a massive emotional surge. It mitigates the client’s perception of future risk. Consequently, you cement a deep bond of operational resilience. To a middle manager, a failure looks like a red cell on a spreadsheet. In contrast, a visionary CEO sees the exact inflection point where a vendor transforms into an irreplaceable partner.

The CFO’s Ledger – The Financial ROI of Exceptional Customer Experience

Historically, corporate finance departments viewed customer service as a pure cost center. They heavily scrutinized refunds, discounts, and compensatory freebies as margin-eroding losses. However, forward-thinking CFOs must aggressively reframe this narrative. A robust Service Recovery Paradox business strategy acts as a highly effective churn mitigation strategy. Furthermore, it directly maximizes your Customer Lifetime Value (CLV).

1. The Retention vs. Acquisition Calculus

The cost of acquiring a new enterprise client continues to skyrocket year over year. Saturated ad markets and complex B2B sales cycles drive this increase. Therefore, a service failure instantly places a hard-won customer at a churn crossroads.

A mediocre or slow corporate response pushes them toward the exit. Consequently, you suffer the total loss of their projected Customer Lifetime Value. Conversely, a paradox-level recovery resets the CLV clock.

Consider an enterprise SaaS client paying $100,000 annually. A server outage costs them an hour of productivity. Offering the standard pro-rated refund, roughly $11 for one hour of a $100,000 annual contract, does not fix their emotional frustration. In fact, it insults them. Instead, the CFO should pre-authorize a robust recovery budget that lets the account manager immediately offer a free month of a premium add-on feature. The add-on costs the company very little in marginal expenses, yet the client feels deeply valued. You prove that your organization handles crises with generosity, which increases the client’s openness to future upsells. Ultimately, you secure that $100,000 in recurring revenue by spending a tiny fraction of acquisition costs.

2. Brand Equity Protection and Earned Media

We operate in an era of instant digital transparency. A single disgruntled B2B client can vent on LinkedIn. Similarly, a vocal consumer can create a viral video about a brand’s failure. As a result, they inflict massive, quantifiable damage on your corporate brand equity.

Conversely, a client experiencing the Service Recovery Paradox frequently becomes your most vocal brand advocate. They do not write reviews about software working perfectly. Instead, they write reviews about a CEO personally emailing them on a Sunday to fix a critical error. This positive earned media significantly reduces your required marketing spend. You establish trust with new prospects much faster. Therefore, your service recovery budget is a high-ROI marketing investment. It actively protects your reputation.

The CTO’s Architecture – Orchestrating Graceful Failures

The Service Recovery Paradox presents a complex technical design challenge for the CTO. In the past, IT focused solely on preventing downtime. Today, the mandate has evolved. CTOs must architect systems that recover with unprecedented speed, transparency, and grace.

1. Real-Time Observability and Automated Sentiment Detection

The psychological window for achieving the paradox closes rapidly. Usually, it closes before a client even submits a formal support ticket. Frustration sets in quickly. Therefore, modern technical leadership must prioritize advanced observability.

Your tech stack must utilize AI-driven sentiment analysis on user interactions. Furthermore, you must monitor API latency and deep system error logs. Your systems must detect user friction before the user feels the full impact. For instance, monitoring tools might flag a failed checkout process or a broken API endpoint. Consequently, the system should instantly alert your high-priority recovery team. Speed remains the ultimate anchor of the paradox. Recovering before the client realizes there is a problem is the holy grail of technical customer service.

2. Automating the Surprise and Delight Protocol

You cannot execute a genuine Service Recovery Paradox business strategy manually at a global enterprise scale. Therefore, CTOs should implement automated recovery engines. You must interconnect these engines with your CRM and billing systems.

A system might detect a major failure impacting a specific cohort of clients. Subsequently, it should automatically trigger a compensatory workflow. This could manifest as an instant, automated account credit. Alternatively, it could send a proactive push notification apologizing for the delay. You might even include a highly relevant discount code. Furthermore, an automated email from an executive alias can take full accountability. The technology itself initiates this proactive transparency. As a result, it triggers a profound psychological shift. The client feels seen and prioritized.
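The trigger logic for such a recovery engine could be sketched roughly as below. Everything here is hypothetical: `Incident`, `plan_recovery`, and the 30-minute threshold are illustrative names and values, and the function only builds a list of planned actions. Connecting those actions to a real CRM or billing system is assumed and not shown.

```python
from dataclasses import dataclass


@dataclass
class Incident:
    description: str
    downtime_minutes: int
    affected_accounts: list[str]


def plan_recovery(incident: Incident, major_threshold_minutes: int = 30) -> list[dict]:
    """Build one compensatory action per affected account for major incidents."""
    if incident.downtime_minutes < major_threshold_minutes:
        return []  # minor incidents stay in the normal support flow
    return [
        {
            "account": account,
            "compensation": "account_credit",
            "message": f"We are sorry about the {incident.description}. A credit has been applied.",
        }
        for account in incident.affected_accounts
    ]


incident = Incident("checkout outage", downtime_minutes=45, affected_accounts=["acme", "globex"])
for action in plan_recovery(incident):
    print(action["account"], "->", action["message"])
```

Keeping the planning step separate from delivery makes it easy to review or test what the engine would send before it touches real customer accounts.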

The CEO’s Mandate – Radically Empowering the Front Line

Institutional friction usually blocks the Service Recovery Paradox. A lack of good intentions is rarely the problem. A front-line customer success manager might require three levels of executive approval to offer a concession. Consequently, the emotional window for a win disappears entirely. Bureaucratic exhaustion replaces customer delight.

1. Radical Decentralization of Authority

Top management must aggressively dismantle rigid, script-based customer service bureaucracies. Instead, you must pivot toward outcome-based empowerment.

CEOs and CFOs must collaborate to establish a “Recovery Limit.” This represents a pre-approved financial threshold. Front-line agents can deploy this capital instantly to make a situation right. For example, you might authorize a $100 discretionary credit for retail consumers. Likewise, you might authorize a $10,000 service credit for enterprise account managers. Employees must have the authority to pull the trigger without asking permission. Speed is impossible without empowered employees.
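A Recovery Limit is simple enough to encode as configuration plus a single check. The sketch below uses the two example thresholds from the paragraph above; the segment names and the `can_approve_instantly` helper are hypothetical.

```python
# Pre-approved discretionary limits in USD, using the example figures from the text.
RECOVERY_LIMITS_USD = {
    "retail": 100,
    "enterprise": 10_000,
}


def can_approve_instantly(segment: str, amount_usd: float) -> bool:
    """True if a front-line employee may grant the credit without asking permission."""
    limit = RECOVERY_LIMITS_USD.get(segment, 0)  # unknown segments get no instant authority
    return amount_usd <= limit


print(can_approve_instantly("retail", 75))          # within the retail limit
print(can_approve_instantly("enterprise", 12_500))  # above the enterprise limit
```

Anything above the limit would follow the normal approval path, so the policy speeds up the common case without removing oversight for large concessions.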

2. The Value-Plus Framework Execution

A simple refund merely neutralizes the situation. It brings the client back to zero. Therefore, your teams must use the Value-Plus Framework to achieve the paradox.

- First, Fix the Problem: You must resolve the original issue immediately. The plumbing must work before you offer champagne.

- Second, Apologize Transparently: Say you are sorry without making corporate excuses. Clients do not care about your vendor issues. They care about their business.

- Third, Add Real Value: This is the critical “Plus.” You must provide extra value that aligns seamlessly with the client’s goals. For instance, offer an extra month of a premium software tier. Alternatively, provide an unprompted upgrade to expedited overnight logistics.

HR and Operations – Building a Culture of Post-Mortem Excellence

Human Resources and Operations leaders must foster a specific corporate culture. Otherwise, the organization cannot consistently benefit from the Service Recovery Paradox. You must analyze operational failures scientifically rather than punishing them emotionally.

1. The Blame-Free Post-Mortem

After a major glitch, executive leadership must run post-mortems focused entirely on systemic optimization. When employees feel their jobs or bonuses are at risk for every mistake, they will cover up failures, and you cannot recover from a failure you cannot see. Fear destroys any chance of a Service Recovery Paradox.

Management must visibly support winning back the customer as the primary goal. Consequently, the team acts with the speed and urgency required to trigger the psychological shift. You should never ask, “Who did this?” Instead, you must ask, “How did our system allow this, and how fast did we recover?”

2. Elevating Human Empathy in the AI Era

Artificial intelligence increasingly handles routine technical fixes and basic FAQ inquiries. Therefore, you must reserve human intervention exclusively for high-stakes, high-emotion service failures.

HR leadership must pivot corporate training budgets away from basic software operation. Instead, they must invest heavily in high-level Emotional Intelligence (EQ). Furthermore, teams need advanced conflict de-escalation and empathetic negotiation skills. A client does not want an automated chatbot when a multi-million dollar supply chain breaks. Rather, they want a highly trained, deeply empathetic human being. This person must validate their stress and take absolute ownership of the solution. Ultimately, the human touch turns a cold technical fix into a warm, loyalty-building psychological paradox.

The Reliability Trap – Recognizing the Limits of the Paradox

The Service Recovery Paradox remains a formidable, revenue-saving tool in your strategic arsenal. However, executive management must remain hyper-aware of the “Reliability Trap.”

The Danger of Double Deviation

You cannot build a sustainable, long-term business model on apologizing. The paradox has a strict statute of limitations. If a customer experiences the exact same failure twice, they no longer view the heroic recovery as exceptional. Instead, they view it as evidence of consistent incompetence.

Service-marketing researchers describe a related pattern called a “Double Deviation”: the company fails at the core service and then fails again at the recovery, which is the overarching trust test. This compounds the frustration and makes permanent churn very likely.

Furthermore, you should never intentionally orchestrate a service failure just to trigger the paradox. It acts as an emergency parachute, not a daily commuting vehicle. It relies heavily on a pre-existing trust reservoir. A brand-new startup with zero market reputation has no reservoir to draw from. Therefore, the client will simply leave. The paradox works best for established leaders. It aligns perfectly with the customer’s existing belief that your company is usually excellent.

The Bottom Line for the Boardroom

The Service Recovery Paradox serves as the ultimate stress test of an organization’s operational maturity. Furthermore, it tests leadership cohesion.

It requires a visionary CFO who values long-term Customer Lifetime Value over short-term penny-pinching. It requires a CTO who builds self-healing, hyper-observant systems that catch friction instantly. Most importantly, it requires a strong CEO who prioritizes a culture of radical front-line accountability and psychological safety.

Every competitor promises fast, reliable, and seamless service in today’s commoditized global market. Therefore, the only way to be truly memorable is to handle a crisis vastly better than your peers.

You are simply fulfilling a contract when everything goes right. However, you receive a massive microphone when everything goes wrong. Stop fearing operational failure. Assume it will happen. Then, start aggressively perfecting your operational recovery. That is exactly where you protect the highest profit margins. Furthermore, that is where you forge the most fiercely loyal brand advocates.


Ysquare Technology

08/04/2026