Why AI Agents Fail Without Real-Time Data: The Infrastructure Gap

You’ve deployed AI agents. The demos looked impressive. The pilot went smoothly. Then you pushed to production and everything started breaking in ways you didn’t expect.

Sound familiar?

Here’s what most organizations discover too late: the difference between AI agents that work and AI agents that fail catastrophically isn’t about the model, the training data, or even the architecture. It’s about something far more fundamental—whether your agents can access current information when they need to make decisions.

Real-time data access for AI agents isn’t a luxury feature you add later. It’s the foundational infrastructure that determines whether autonomous systems can function reliably at all.

Most companies building AI agents today are essentially constructing sophisticated decision-making engines and then feeding them information that’s already outdated. They’re surprised when those agents make terrible decisions—but the failure was built in from the start.

Let’s talk about why this happens, what real-time data access actually means in practice, and what you need to build if you want AI agents that don’t just work in demos but actually deliver value in production.

Understanding Real-Time Data Access: What It Actually Means

Real-time data access means your AI agents can query and retrieve current information with minimal latency—typically milliseconds to seconds—rather than working from periodic batch updates that might be hours or days old.

This isn’t about making batch processing faster. It’s a fundamentally different approach to how data moves through your systems.

Traditional batch processing says: collect data throughout the day, process it in chunks during off-peak hours, and make updated datasets available periodically. Your morning report contains yesterday’s data. Your agent making a decision at 2 PM is working with information from last night’s batch job.

Streaming architectures say: treat every data change as an immediate event, process it the moment it occurs, and make it queryable within milliseconds. Your agent making a decision at 2 PM sees what’s happening at 2 PM.

For AI agents making autonomous decisions, that difference isn’t just about speed. It’s about whether the decision is based on reality or on a snapshot that no longer reflects the current state of your business.
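To make the contrast concrete, here is a minimal sketch in Python. The `Change` record, the `widget` SKU, and the minute-based timestamps are all made up for illustration; the only point is that the same change log gives different answers depending on where the cutoff sits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Change:
    ts: int        # event time in minutes since midnight (hypothetical unit)
    sku: str
    on_hand: int   # inventory level after the change


def view_as_of(changes, cutoff_ts):
    """Replay changes in time order, ignoring anything after the cutoff."""
    state = {}
    for change in sorted(changes, key=lambda c: c.ts):
        if change.ts <= cutoff_ts:
            state[change.sku] = change.on_hand
    return state


changes = [
    Change(ts=60, sku="widget", on_hand=40),   # 01:00
    Change(ts=780, sku="widget", on_hand=0),   # 13:00, sold out
]

two_pm = 14 * 60
batch_view = view_as_of(changes, cutoff_ts=120)         # nightly job ran at 02:00
streaming_view = view_as_of(changes, cutoff_ts=two_pm)  # every change applied as it occurs

print(batch_view)      # {'widget': 40}
print(streaming_view)  # {'widget': 0}
```

An agent reading the batch view at 2 PM would happily promise stock that sold out an hour earlier.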

According to research from CIO Magazine, modern fraud detection systems now correlate transactions with real-time device fingerprints and geolocation patterns to block fraud in milliseconds. The system can’t wait for the nightly batch update. By then, the fraudulent transaction has already settled and the money is gone.

The Hidden Cost of Stale Data in AI Agent Deployments

Here’s what makes stale data particularly dangerous for AI agents: the failure mode is silent.

When a traditional application encounters bad data, it often throws an error or crashes in obvious ways. You know something’s wrong because the system stops working.

AI agents don’t fail like that. They keep running. They keep making decisions. Those decisions just get progressively worse as the gap between their information and reality widens.

Research from Shelf found that outdated information leads to temporal drift, where AI agents generate responses based on obsolete knowledge. This is particularly critical for Retrieval-Augmented Generation (RAG) systems, where stale data produces incorrect recommendations that look authoritative because they’re well-formatted and delivered with confidence.

Think about what this means in a real business context:

Your customer service agent promises a shipping timeline based on inventory data from this morning. But there was a warehouse issue three hours ago that your logistics team resolved by redirecting shipments. The agent doesn’t know. It commits to dates you can’t meet. When an agent’s data doesn’t reflect what operations actually did, it makes promises the business can’t keep.

Your pricing agent calculates a quote using rate tables that were updated yesterday, but your largest supplier announced a price increase this morning. Your quote is now below cost. You won’t know until the order processes and someone manually reviews the margin.

Your fraud detection system flags a legitimate high-value transaction from your best customer. Why? Because it’s comparing against behavior patterns that are six hours old. In those six hours, the customer landed in a different country for a business trip. The agent sees the transaction location, doesn’t see the updated travel status, and blocks the purchase.

None of these scenarios involve model failure. The AI is working exactly as designed. The infrastructure is the problem.

Why 88% of AI Agents Never Make It to Production

According to one comprehensive analysis of agentic AI statistics, 88% of AI agents fail to reach production deployment. The 12% that succeed deliver an average ROI of 171% (192% in the US market).

What separates the winners from the failures?

Most organizations assume it’s about the sophistication of the model or the quality of the training data. Those factors matter, but they’re not the primary differentiator.

The real gap is infrastructure.

Deloitte’s 2025 Emerging Technology Trends study found that while 30% of organizations are exploring agentic AI and 38% are piloting solutions, only 14% have systems ready for deployment. The primary bottleneck cited? Data architecture.

Nearly half of organizations (48%) report that data searchability and reusability are their top barriers to AI automation. That’s code for: “our data infrastructure can’t support what these agents need to do.”

Organizations with scattered knowledge across multiple systems face compounded challenges—when agents can’t find authoritative, current information, they either make decisions with incomplete data or become paralyzed by conflicting sources.

Here’s the pattern that plays out repeatedly:

Pilot phase: Controlled environment, limited data sources, manageable complexity. The agent works because you’ve carefully curated its information access.

Production deployment: Real-world complexity, dozens of data sources, conflicting information, latency issues, and stale data scattered across systems. The agent that worked perfectly in the pilot now makes unreliable decisions because the infrastructure can’t deliver current, consistent information at scale.

The companies that close this gap are the ones investing in boring infrastructure: Change Data Capture (CDC) pipelines, streaming platforms, semantic layers, and data freshness monitoring. Not sexy. Absolutely critical.

The Real-Time Data Infrastructure Stack for AI Agents

If you’re serious about deploying AI agents that work in production, here’s what the infrastructure stack actually looks like:

Source Systems with CDC Pipelines

Your databases, CRMs, ERPs, and operational systems need Change Data Capture enabled. Every insert, update, and delete gets captured as an event the moment it happens. Tools like Debezium, Streamkap, or AWS DMS handle this layer.
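As a rough illustration of what a change event looks like to a consumer, the sketch below applies a handful of events to an in-memory table. The event shape is modeled loosely on the Debezium envelope (`op`, `before`, `after`, `ts_ms`) and heavily simplified. The `id` key and the price data are assumptions for the example, and a real pipeline would read these events from a stream rather than a Python list.

```python
def apply_change(table, event):
    """Apply one simplified change event to an in-memory table keyed by 'id'."""
    op = event["op"]
    if op in ("c", "r", "u"):        # create, snapshot read, update
        row = event["after"]
        table[row["id"]] = row
    elif op == "d":                  # delete
        table.pop(event["before"]["id"], None)
    else:
        raise ValueError(f"unknown op: {op!r}")
    return table


events = [
    {"op": "c", "before": None, "after": {"id": 1, "price": 100}, "ts_ms": 1000},
    {"op": "u", "before": {"id": 1, "price": 100}, "after": {"id": 1, "price": 120}, "ts_ms": 2000},
    {"op": "c", "before": None, "after": {"id": 2, "price": 50}, "ts_ms": 3000},
    {"op": "d", "before": {"id": 2, "price": 50}, "after": None, "ts_ms": 4000},
]

prices = {}
for event in events:
    apply_change(prices, event)

print(prices)  # {1: {'id': 1, 'price': 120}}
```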

Streaming Platform

Those events flow into a streaming platform—Apache Kafka, Apache Pulsar, AWS Kinesis, or Google Cloud Pub/Sub. This is your real-time data backbone. Events are processed immediately and made available to consumers within milliseconds.

According to the 2026 Data Streaming Landscape analysis, 90% of IT leaders are increasing their investments in data streaming infrastructure specifically to support AI agents. Market research suggests 80% of AI applications will use streaming data by 2026.

Semantic Layer

Raw data isn’t enough. AI agents need context. A semantic layer sits on top of your streaming data to provide business definitions, relationship mappings, and data quality rules. This layer answers questions like “what does ‘active customer’ actually mean?” and “which revenue figure is the source of truth?”
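A semantic layer can be as elaborate as a governed catalog, but the core idea fits in a few lines. In this sketch the 90-day rule for "active customer" and the choice of `billing` as the revenue source of truth are invented examples, not recommendations; what matters is that the definition lives in one place and every agent resolves it the same way.

```python
from datetime import date, timedelta

# Hypothetical business definitions; real ones would come from a governed catalog.
SEMANTIC_LAYER = {
    "active_customer": {
        "description": "Placed at least one order in the last 90 days",
        "predicate": lambda customer, today: (today - customer["last_order"]) <= timedelta(days=90),
    },
    "revenue": {
        "description": "Recognized revenue",
        "source_of_truth": "billing",   # assumed system name
    },
}


def is_active(customer, today):
    """Evaluate the shared definition of 'active customer'."""
    return SEMANTIC_LAYER["active_customer"]["predicate"](customer, today)


def revenue(figures_by_system):
    """Pick the revenue figure from the designated source of truth."""
    return figures_by_system[SEMANTIC_LAYER["revenue"]["source_of_truth"]]


today = date(2026, 1, 31)
print(is_active({"last_order": date(2025, 12, 15)}, today))  # True
print(is_active({"last_order": date(2025, 6, 1)}, today))    # False
print(revenue({"crm": 98_000, "billing": 101_500}))          # 101500
```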

Data Freshness Monitoring

You need systems that continuously track when data was last updated and alert you when freshness degrades. This isn’t traditional uptime monitoring—it’s monitoring whether the data your agents are accessing is still current enough to support reliable decisions.
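A freshness check is simple at its core: compare each source's last update against a budget. The sketch below assumes you already record a last-updated timestamp per source; the source names and the budgets in `MAX_AGE` are placeholders you would set per use case.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical per-source freshness budgets.
MAX_AGE = {
    "inventory": timedelta(seconds=30),
    "pricing": timedelta(minutes=5),
}


def stale_sources(last_updated, now):
    """Return {source: age} for every source that is older than its budget."""
    alerts = {}
    for source, limit in MAX_AGE.items():
        age = now - last_updated[source]
        if age > limit:
            alerts[source] = age
    return alerts


now = datetime(2026, 1, 15, 14, 0, 0, tzinfo=timezone.utc)
last_updated = {
    "inventory": now - timedelta(minutes=12),   # well past its 30-second budget
    "pricing": now - timedelta(minutes=2),      # within its 5-minute budget
}

for source, age in stale_sources(last_updated, now).items():
    print(f"ALERT: {source} is {age.total_seconds():.0f}s old")
```

Passing `now` in as a parameter, rather than reading the clock inside the function, keeps the check easy to test.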

Agent Query Layer

Finally, your AI agents need an optimized query interface that lets them access both current state and historical context with minimal latency. This might be a high-performance database like Aerospike, a data lakehouse like Databricks, or a specialized vector database for RAG applications.
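Whatever store sits behind the query layer, one useful property is that every read reports how old the data is and refuses to answer when it is too old. The toy `AgentStore` below is a hypothetical in-memory stand-in, not the API of any of the products named above.

```python
from dataclasses import dataclass


class StaleReadError(Exception):
    """Raised when the requested data is older than the caller allows."""


@dataclass
class Reading:
    value: object
    updated_at: float   # seconds, on the same clock as `now`


class AgentStore:
    """Toy key-value query layer that reports data age with every read."""

    def __init__(self):
        self._data = {}

    def put(self, key, value, now):
        self._data[key] = Reading(value, now)

    def get(self, key, now, max_age_s):
        reading = self._data[key]
        age = now - reading.updated_at
        if age > max_age_s:
            raise StaleReadError(f"{key} is {age:.0f}s old, limit {max_age_s}s")
        return reading.value, age


store = AgentStore()
store.put("inventory:widget", 12, now=1000.0)

print(store.get("inventory:widget", now=1005.0, max_age_s=30))  # (12, 5.0)

try:
    store.get("inventory:widget", now=1100.0, max_age_s=30)
except StaleReadError as err:
    print("refused:", err)  # refused: inventory:widget is 100s old, limit 30s
```

Failing loudly on a stale read turns the silent failure mode into one the agent, or its operator, can actually see.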

Research from Aerospike emphasizes that organizations must invest in a data backbone delivering both ultra-low latency and massive scalability. AI agents thrive on fast, fresh data streams—the need for accurate, comprehensive, real-time data that scales cannot be overstated.

What Happens When You Skip the Infrastructure Investment

Let’s be direct: you can’t retrofit real-time data access onto batch-based architectures and expect it to work reliably.

The companies trying this approach encounter predictable failure patterns:

Race Conditions: Agent A makes a decision based on data snapshot 1. Agent B makes a conflicting decision based on snapshot 2. Neither knows about the other’s action because the data layer doesn’t synchronize in real time.

Context Staleness: According to one analysis of AI context failures, agents frequently have access to both current and outdated information but default to the stale version because it ranked higher in similarity search or was cached more aggressively.

Orchestration Drift: Agents that coordinate through stale shared state fall out of step with each other. Research from InfoWorld found that agent-related production incidents dropped 71% after deploying event-based coordination infrastructure. Most of the eliminated incidents were race conditions and stale-context bugs that a proper real-time architecture rules out by design.

Silent Degradation: The system doesn’t fail obviously. It just makes worse decisions over time as data freshness degrades. By the time you notice the problem, you’ve already made hundreds or thousands of bad decisions.
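One common guard against the race condition described above is optimistic concurrency: every write states which version of the data it was based on, and the store rejects writes built on an outdated version. This is a swapped-in illustration, not the event-based coordination the research refers to, and `VersionedRecord` is a hypothetical in-memory stand-in for whatever store you actually use.

```python
class VersionConflict(Exception):
    """Raised when a write is based on a version that is no longer current."""


class VersionedRecord:
    """A single record guarded by a version counter (compare-and-set)."""

    def __init__(self, value):
        self.value = value
        self.version = 0

    def read(self):
        return self.value, self.version

    def write(self, new_value, expected_version):
        if expected_version != self.version:
            raise VersionConflict(f"expected v{expected_version}, found v{self.version}")
        self.value = new_value
        self.version += 1


stock = VersionedRecord(1)         # one unit left
_, seen_by_a = stock.read()        # Agent A reads snapshot v0
_, seen_by_b = stock.read()        # Agent B reads the same snapshot

stock.write(0, seen_by_a)          # Agent A reserves the last unit
try:
    stock.write(0, seen_by_b)      # Agent B's write is based on a stale read
except VersionConflict as err:
    print("Agent B must re-read:", err)  # Agent B must re-read: expected v0, found v1
```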

Here’s a real example from production failure analysis: a sales agent connected to Confluence and Salesforce worked perfectly in demos. In production, it offered a major customer a 50% discount nobody authorized. The root cause? An outdated pricing document in Confluence still referenced a promotional rate from two quarters ago. The agent treated it as current because nothing in the infrastructure flagged it as stale.
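A failure like this one can be caught with a plain metadata check before retrieved documents ever reach the agent. The sketch below assumes each document carries `last_reviewed` and `valid_until` fields alongside its similarity score; those field names, the titles, and the 90-day review window are all hypothetical.

```python
from datetime import date


def usable_documents(hits, today, max_age_days):
    """Drop expired or long-unreviewed documents, then rank the rest by similarity."""
    fresh = []
    for doc in hits:
        expired = doc["valid_until"] is not None and doc["valid_until"] < today
        too_old = (today - doc["last_reviewed"]).days > max_age_days
        if not expired and not too_old:
            fresh.append(doc)
    return sorted(fresh, key=lambda d: d["similarity"], reverse=True)


hits = [
    {"title": "Promo pricing", "similarity": 0.93,
     "last_reviewed": date(2025, 6, 1), "valid_until": date(2025, 6, 30)},
    {"title": "Current price list", "similarity": 0.88,
     "last_reviewed": date(2025, 12, 10), "valid_until": None},
]

result = usable_documents(hits, today=date(2026, 1, 15), max_age_days=90)
print([doc["title"] for doc in result])  # ['Current price list']
```

Note that the stale promotional document had the higher similarity score. Without the filter, it would have been the agent's first choice.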

The documentation-reality gap isn’t just an accuracy problem—it’s a trust-destruction mechanism that makes AI agents unreliable at scale.

The Economics of Real-Time: When Does It Actually Pay Off?

Real-time data infrastructure isn’t cheap. Streaming platforms, CDC pipelines, semantic layers, and monitoring systems require investment in technology, engineering time, and operational overhead.

So when does it actually make economic sense?

Cloud-native data pipeline deployments are delivering 3.7× ROI on average according to Alation’s 2026 analysis, with the clearest gains in fraud detection, predictive maintenance, and real-time customer personalization.

The ROI calculation comes down to three factors:

Decision Velocity: How quickly do conditions change in your business? If you’re in e-commerce, financial services, logistics, or healthcare, conditions change by the minute. Batch processing means your agents are always operating with outdated information. The cost of wrong decisions based on stale data exceeds the infrastructure investment.

Decision Consequence: What’s the cost of a single wrong decision? In fraud detection, one missed fraudulent transaction can cost thousands of dollars. In healthcare, one outdated patient data point can have life-threatening consequences. High-consequence decisions justify real-time infrastructure.

Scale of Automation: How many autonomous decisions are your agents making per day? If it’s dozens, batch processing might be adequate. If it’s thousands or millions, the aggregate cost of decision errors from stale data quickly outweighs infrastructure costs.
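The three factors combine into a back-of-the-envelope calculation. Every number in the sketch below is hypothetical, and it makes the generous assumption that real-time infrastructure removes all stale-data errors; treat it as a way to frame the question, not as a forecast.

```python
def daily_stale_cost(decisions_per_day, stale_error_rate, cost_per_error):
    """Expected daily cost of wrong decisions caused by stale data."""
    return decisions_per_day * stale_error_rate * cost_per_error


def payback_days(infrastructure_cost, daily_savings):
    """Days until avoided errors cover the infrastructure spend."""
    return infrastructure_cost / daily_savings


# All figures below are hypothetical.
INFRA_COST = 500_000
scenarios = {
    "dozens of decisions per day": daily_stale_cost(50, 0.02, 200),
    "100,000 decisions per day": daily_stale_cost(100_000, 0.02, 200),
}

for name, daily in scenarios.items():
    days = payback_days(INFRA_COST, daily)
    print(f"{name}: ${daily:,.0f}/day at risk, payback in {days:,.2f} days")
```

With these made-up inputs, the low-volume case takes years to pay back while the high-volume case pays back in about a day, which is the scale effect described above.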

According to one set of comprehensive statistics on agentic AI adoption, the global AI agents market is projected to grow from $7.63 billion in 2025 to $182.97 billion by 2033, a 49.6% compound annual growth rate. That explosive growth is happening because organizations are discovering that agents with proper data infrastructure actually deliver value.

Building Real-Time Capability: A Practical Roadmap

If you’re starting from batch-based infrastructure and need to support AI agents with real-time data access, here’s a practical migration path:

Phase 1: Identify Critical Data Sources

Not all data needs real-time access. Start by identifying which data sources your AI agents actually query for autonomous decisions. Customer data? Inventory? Pricing? Transaction history? Map the data flows and prioritize based on decision frequency and consequence.
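One simple way to turn that mapping into a ranked list is to score each source by decision frequency times consequence. The sources and figures below are invented, and a plain product is only one possible scoring rule.

```python
# Hypothetical inventory of data sources that agents read for autonomous decisions.
sources = [
    {"name": "inventory", "decisions_per_day": 20_000, "cost_per_error": 15},
    {"name": "pricing", "decisions_per_day": 3_000, "cost_per_error": 400},
    {"name": "hr_directory", "decisions_per_day": 40, "cost_per_error": 5},
]


def exposure(source):
    """Decision frequency multiplied by the cost of a single wrong decision."""
    return source["decisions_per_day"] * source["cost_per_error"]


def prioritize(sources):
    """Order sources from highest to lowest exposure."""
    return sorted(sources, key=exposure, reverse=True)


print([s["name"] for s in prioritize(sources)])  # ['pricing', 'inventory', 'hr_directory']
```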

Phase 2: Implement CDC on High-Priority Sources

Enable Change Data Capture on your most critical databases. This captures every change as it happens and streams it to your data platform. Start with one or two sources, validate that the pipeline works reliably, then expand.

Phase 3: Deploy Streaming Infrastructure

Stand up your streaming platform—whether that’s Kafka, Pulsar, Kinesis, or another solution depends on your cloud strategy and technical requirements. Configure it for high availability and monitoring from day one.

Phase 4: Build the Semantic Layer

This is where many organizations stumble. Raw event streams aren’t enough—you need business context. Invest in data catalog tools, governance frameworks, and automated metadata management. Organizations struggling with scattered knowledge across systems need this layer to provide agents with authoritative, consistent definitions.

Phase 5: Implement Freshness Monitoring

Deploy monitoring systems that track data age and alert when freshness degrades below acceptable thresholds. This is your early warning system for infrastructure problems that would otherwise manifest as agent decision errors.

Phase 6: Migrate Agent Queries

Gradually migrate your AI agents from batch data queries to real-time streams. Do this incrementally, validating that decision quality improves before moving to the next agent or use case.

The timeline for this migration typically ranges from 3-9 months depending on your starting point and organizational complexity. The companies succeeding with AI agents built this infrastructure before deploying agents widely—not after pilots failed in production.

The Questions Your Leadership Team Should Be Asking

If you’re presenting AI agent initiatives to executives or board members, here are the infrastructure questions they should be asking (and you should be prepared to answer):

How fresh is the data our agents are accessing? If the answer is “it varies” or “I’m not sure,” that’s a red flag. Data freshness should be measurable, monitored, and consistent.

What happens when data sources conflict? Multiple systems often contain different versions of the same information. Which source is authoritative? How do agents know which to trust? If you don’t have clear answers, agents will make arbitrary choices.

Can we trace agent decisions back to the data that informed them? For regulatory compliance, debugging, and trust-building, you need data lineage. Every agent decision should be traceable to specific data sources with timestamps.

What’s our plan for scaling this infrastructure? Real-time data platforms need to handle increasing volumes as you deploy more agents and integrate more data sources. What’s your scaling strategy?

How do we know when data goes stale? Monitoring uptime isn’t enough. You need monitoring that tracks data age and alerts when freshness degrades before it impacts decision quality.
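On the traceability question, the minimum viable answer is a decision record that lists every source the agent read, when it read it, and how current the data was. The field names, the agent name, and the sample values below are assumptions for illustration.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class SourceRead:
    system: str
    key: str
    read_at: str      # ISO 8601 timestamp of the read
    data_as_of: str   # ISO 8601 timestamp of the data's last update


@dataclass
class DecisionRecord:
    agent: str
    decision: str
    inputs: list = field(default_factory=list)

    def to_json(self):
        """Serialize the record, including nested source reads."""
        return json.dumps(asdict(self), indent=2)


record = DecisionRecord(agent="pricing-agent", decision="quote=1180.00")
record.inputs.append(
    SourceRead("erp", "rate_table/7", "2026-01-15T14:00:02Z", "2026-01-15T13:59:40Z")
)

print(record.to_json())
```

Storing `data_as_of` next to `read_at` is what lets you answer, after the fact, whether a bad decision came from the model or from data that was already out of date.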

According to analysis from MIT Technology Review, in late 2025 nearly two-thirds of companies were experimenting with AI agents, while 88% were using AI in at least one business function. Yet only one in 10 companies actually scaled their agents. The infrastructure gap is the primary reason.

Real-Time Data Access: The Competitive Moat You’re Building

Here’s the strategic insight most organizations miss: real-time data infrastructure for AI agents isn’t just an operational necessity. It’s a competitive moat.

The companies investing in this infrastructure now are building capabilities their competitors can’t easily replicate. Streaming data platforms, semantic layers, and data freshness monitoring create compound advantages:

Faster Time to Value: Once the infrastructure exists, deploying new AI agents becomes dramatically faster because the hard part—reliable data access—is already solved.

Higher Quality Decisions: Agents making decisions on current data consistently outperform agents working with stale information. That quality difference compounds over thousands of decisions daily.

Organizational Learning: Real-time infrastructure enables feedback loops that make agents smarter over time. Batch-based systems can’t close these loops fast enough to drive continuous improvement.

Regulatory Confidence: In industries with strict compliance requirements, being able to demonstrate that agent decisions are based on current, traceable data creates regulatory confidence that competitors lacking this capability can’t match.

Research indicates that AI-driven traffic grew 187% from January to December 2025, while traffic from AI agents and agentic browsers grew 7,851% year over year. The organizations capturing value from this explosion are the ones with infrastructure that supports reliable, real-time autonomous operations.

The Bottom Line on Real-Time Data for AI Agents

Real-time data access isn’t a feature. It’s the foundation.

If you’re deploying AI agents on batch-processed data, you’re deploying agents that will make outdated decisions. Some percentage of those decisions will be wrong. The only questions are: what percentage, and what will those mistakes cost?

The uncomfortable truth is that most AI agent failures aren’t model problems—they’re infrastructure problems. Organizations keep chasing better models while ignoring the data architecture that determines whether those models can function reliably.

According to one comprehensive study of AI agent production failures, 27% of failures trace directly to data quality and freshness issues, not to model design or harness architecture. The agents that succeed are the ones with infrastructure that delivers current, consistent, contextualized data at the moment of decision.

The companies winning with AI agents in 2026 are the ones that invested in streaming platforms, CDC pipelines, semantic layers, and freshness monitoring before deploying agents broadly. The companies still struggling are the ones trying to retrofit real-time capabilities onto batch architectures after pilots failed.

Which category does your organization fall into?

If you’re not sure, read our detailed analysis on real-time data access for AI agents for a deeper dive into the infrastructure decisions that determine whether AI agents work or fail at scale.

The window for building this as a competitive advantage is closing. Soon it will just be table stakes. The question is whether you’re building it now or explaining to your board later why your AI agents couldn’t deliver the promised value.

Frequently Asked Questions

1. What exactly is real-time data access for AI agents?

Real-time data access means AI agents can query and retrieve current information with minimal latency (typically milliseconds to seconds) instead of relying on periodic batch updates that might be hours or days old. This enables agents to make decisions based on the actual current state of systems, customers, inventory, or transactions rather than historical snapshots that no longer reflect reality.

2. Why can't AI agents work effectively with batch-processed data?

AI agents treat the data they receive as ground truth and make autonomous decisions based on that information. Unlike humans, who can interpolate and apply judgment to outdated data, agents can't reliably determine when information is stale or adjust their decisions accordingly. When batch-processed data introduces hours or days of latency, agents systematically make decisions based on conditions that have already changed, leading to errors that compound at scale.

3. What are the most common failure modes when AI agents use stale data?

Common failure modes include temporal drift (agents acting on obsolete knowledge), context staleness (defaulting to older cached data when fresher data exists), race conditions (multiple agents making conflicting decisions due to unsynchronized data), and silent degradation (decision quality eroding gradually without obvious system failures). Research shows these failures often go undetected for weeks because agents don't crash—they just make progressively worse decisions.

4. How much does real-time data infrastructure actually cost?

Infrastructure costs vary widely based on data volume, number of sources, and architecture complexity, but organizations report 3.7× average ROI from cloud-native data pipeline deployments. The investment includes streaming platforms (Kafka, Pulsar, Kinesis), CDC pipeline tools, semantic layer development, and monitoring systems. However, the cost of not having this infrastructure—in failed deployments, wrong decisions, and lost competitive advantage—typically far exceeds the infrastructure investment for high-velocity, high-consequence decision environments.

5. Can we add real-time data access to our existing AI agents, or do we need to rebuild?

Retrofitting real-time capabilities onto batch-based architectures rarely works reliably. The companies succeeding with AI agents built streaming data infrastructure first and deployed agents second. Attempting to bolt real-time access onto existing batch pipelines typically creates race conditions, inconsistent state, and reliability problems. A phased migration approach—implementing CDC on critical sources, deploying streaming infrastructure, building semantic layers, then gradually migrating agent queries—is more practical than trying to run both architectures simultaneously.

6. What's the difference between streaming data and just running batch jobs more frequently?

Streaming data treats each change as an immediate event that's processed and made queryable in milliseconds. Batch processing, even at high frequency (every 15 minutes, for example), still introduces systematic latency between when something happens and when agents can see it. For fraud detection, supply chain optimization, or customer service scenarios, that latency window is where problems occur. Streaming architecture also enables event-driven decision-making—agents can react to changes as they happen rather than polling for updates.
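The latency window of a batch schedule is easy to estimate. The sketch below assumes changes arrive uniformly between runs and that each run takes a fixed processing time; both are simplifications, and the processing times used are placeholders.

```python
def batch_staleness_minutes(interval_min, processing_min=0):
    """Best, mean, and worst delay before a change becomes visible to agents.

    Assumes changes arrive uniformly between batch runs.
    """
    return {
        "best": processing_min,
        "mean": interval_min / 2 + processing_min,
        "worst": interval_min + processing_min,
    }


print(batch_staleness_minutes(15, processing_min=2))
# {'best': 2, 'mean': 9.5, 'worst': 17}

print(batch_staleness_minutes(24 * 60, processing_min=60))
# {'best': 60, 'mean': 780.0, 'worst': 1500}
```

Even a 15-minute schedule leaves changes invisible for several minutes on average under these assumptions, and the worst case is always a full interval plus processing time.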

7. How do we measure whether our data is fresh enough for AI agents?

Implement data freshness monitoring that tracks when each data source was last updated and alerts when age exceeds defined thresholds. For critical decision data, freshness should be measured in seconds or minutes, not hours. Track metrics like: time since last update, percentage of queries served from stale data, decision errors attributable to data latency, and average data age at decision time. These metrics should be monitored continuously, with alerts that fire on degradation before it affects agent reliability.
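Two of those metrics, average data age at decision time and the percentage of reads served from stale data, can be computed from a simple read log. The log format and the 60-second threshold below are assumptions for the example.

```python
from statistics import mean


def freshness_metrics(reads, max_age_s):
    """Summarize data age over a log of agent reads.

    Each read is a (source, age_in_seconds_at_decision_time) pair.
    """
    ages = [age for _, age in reads]
    stale = [age for age in ages if age > max_age_s]
    return {
        "avg_age_s": mean(ages),
        "worst_age_s": max(ages),
        "pct_stale": 100 * len(stale) / len(ages),
    }


# Hypothetical read log: three fresh inventory reads and one stale pricing read.
reads = [("inventory", 2), ("inventory", 4), ("pricing", 90), ("inventory", 4)]

print(freshness_metrics(reads, max_age_s=60))
```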

8. Which AI agent use cases absolutely require real-time data access?

Any use case where conditions change frequently and decisions have meaningful consequences requires real-time data: fraud detection (transaction patterns change by the second), customer service (account status, order tracking, inventory availability), supply chain optimization (demand signals, inventory levels, logistics disruptions), pricing engines (market conditions, competitor pricing, cost fluctuations), and healthcare (patient monitoring, treatment recommendations, clinical alerts). If decisions become wrong or obsolete within minutes to hours, real-time infrastructure is non-negotiable.

9. How does real-time data access affect AI agent accuracy and reliability?

Research shows that 27% of AI agent production failures trace to data quality and freshness issues. Agents with real-time data access make decisions based on current conditions rather than historical assumptions, dramatically reducing errors from temporal drift. Organizations report 71% reductions in production incidents after deploying event-based coordination infrastructure. The impact isn't just fewer errors—it's faster feedback loops that enable continuous improvement as agents learn from outcomes in near-real-time.

10. What are the first steps for organizations wanting to implement real-time data for AI agents?

Start with a data access audit: identify which sources your AI agents query for autonomous decisions, map current data freshness for each source, and quantify the cost of decision errors from stale data. Prioritize high-frequency, high-consequence decision data for real-time migration. Implement CDC on 1-2 critical sources as a proof of concept, deploy streaming infrastructure with monitoring from day one, and validate improved decision quality before expanding. Most importantly, build the semantic layer alongside streaming infrastructure—raw real-time data without business context doesn't solve the problem.

Why AI Agents Fail Without Real-Time Data: The Infrastructure Gap

You’ve deployed AI agents. The demos looked impressive. The pilot went smoothly. Then you pushed to production and everything started breaking in ways you didn’t expect.

Sound familiar?

Here’s what most organizations discover too late: the difference between AI agents that work and AI agents that fail catastrophically isn’t about the model, the training data, or even the architecture. It’s about something far more fundamental—whether your agents can access current information when they need to make decisions.

Real-time data access for AI agents isn’t a luxury feature you add later. It’s the foundational infrastructure that determines whether autonomous systems can function reliably at all.

Most companies building AI agents today are essentially constructing sophisticated decision-making engines and then feeding them information that’s already outdated. They’re surprised when those agents make terrible decisions—but the failure was built in from the start.

Let’s talk about why this happens, what real-time data access actually means in practice, and what you need to build if you want AI agents that don’t just work in demos but actually deliver value in production.

Understanding Real-Time Data Access: What It Actually Means

Real-time data access means your AI agents can query and retrieve current information with minimal latency—typically milliseconds to seconds—rather than working from periodic batch updates that might be hours or days old.

This isn’t about making batch processing faster. It’s a fundamentally different approach to how data moves through your systems.

Traditional batch processing says: collect data throughout the day, process it in chunks during off-peak hours, and make updated datasets available periodically. Your morning report contains yesterday’s data. Your agent making a decision at 2 PM is working with information from last night’s batch job.

Streaming architectures say: treat every data change as an immediate event, process it the moment it occurs, and make it queryable within milliseconds. Your agent making a decision at 2 PM sees what’s happening at 2 PM.

For AI agents making autonomous decisions, that difference isn’t just about speed. It’s about whether the decision is based on reality or on a snapshot that no longer reflects the current state of your business.

According to research from CIO Magazine, modern fraud detection systems now correlate transactions with real-time device fingerprints and geolocation patterns to block fraud in milliseconds. The system can’t wait for the nightly batch update. By then, the fraudulent transaction has already settled and the money is gone.

The Hidden Cost of Stale Data in AI Agent Deployments

Here’s what makes stale data particularly dangerous for AI agents: the failure mode is silent.

When a traditional application encounters bad data, it often throws an error or crashes in obvious ways. You know something’s wrong because the system stops working.

AI agents don’t fail like that. They keep running. They keep making decisions. Those decisions just get progressively worse as the gap between their information and reality widens.

Research from Shelf found that outdated information leads to temporal drift, where AI agents generate responses based on obsolete knowledge. This is particularly critical for Retrieval-Augmented Generation (RAG) systems, where stale data produces incorrect recommendations that look authoritative because they’re well-formatted and delivered with confidence.

Think about what this means in a real business context:

Your customer service agent promises a shipping timeline based on inventory data from this morning. But there was a warehouse issue three hours ago that your logistics team resolved by redirecting shipments. The agent doesn’t know. It commits to dates you can’t meet. When documentation doesn’t reflect actual processes, agents make promises the business can’t keep.

Your pricing agent calculates a quote using rate tables that were updated yesterday, but your largest supplier announced a price increase this morning. Your quote is now below cost. You won’t know until the order processes and someone manually reviews the margin.

Your fraud detection system flags a legitimate high-value transaction from your best customer. Why? Because it’s comparing against behavior patterns that are six hours old. In those six hours, the customer landed in a different country for a business trip. The agent sees the transaction location, doesn’t see the updated travel status, and blocks the purchase.

None of these scenarios involve model failure. The AI is working exactly as designed. The infrastructure is the problem.

Why 88% of AI Agents Never Make It to Production

According to comprehensive analysis of agentic AI statistics, 88% of AI agents fail to reach production deployment. The 12% that succeed deliver an average ROI of 171% (192% in the US market).

What separates the winners from the failures?

Most organizations assume it’s about the sophistication of the model or the quality of the training data. Those factors matter, but they’re not the primary differentiator.

The real gap is infrastructure.

Deloitte’s 2025 Emerging Technology Trends study found that while 30% of organizations are exploring agentic AI and 38% are piloting solutions, only 14% have systems ready for deployment. The primary bottleneck cited? Data architecture.

Nearly half of organizations (48%) report that data searchability and reusability are their top barriers to AI automation. That’s code for: “our data infrastructure can’t support what these agents need to do.”

Organizations with scattered knowledge across multiple systems face compounded challenges—when agents can’t find authoritative, current information, they either make decisions with incomplete data or become paralyzed by conflicting sources.

Here’s the pattern that plays out repeatedly:

Pilot phase: Controlled environment, limited data sources, manageable complexity. The agent works because you’ve carefully curated its information access.

Production deployment: Real-world complexity, dozens of data sources, conflicting information, latency issues, and stale data scattered across systems. The agent that worked perfectly in the pilot now makes unreliable decisions because the infrastructure can’t deliver current, consistent information at scale.

The companies that close this gap are the ones investing in boring infrastructure: Change Data Capture (CDC) pipelines, streaming platforms, semantic layers, and data freshness monitoring. Not sexy. Absolutely critical.

The Real-Time Data Infrastructure Stack for AI Agents

If you’re serious about deploying AI agents that work in production, here’s what the infrastructure stack actually looks like:

Source Systems with CDC Pipelines

Your databases, CRMs, ERPs, and operational systems need Change Data Capture enabled. Every insert, update, and delete gets captured as an event the moment it happens. Tools like Debezium, Streamkap, or AWS DMS handle this layer.
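
As a rough sketch of what consuming those change events involves, the snippet below replays a short stream of events against an in-memory copy of a table. The event shape (`op`, `key`, `after`) is a simplified assumption loosely modeled on common CDC envelopes, not the exact format of any tool named above, and `apply_change` is a hypothetical helper.

```python
from typing import Any, Dict

# Hypothetical, simplified change event:
# {"op": "c" | "u" | "d", "key": <row key>, "after": <row dict or None>}
ChangeEvent = Dict[str, Any]


def apply_change(table: Dict[str, dict], event: ChangeEvent) -> None:
    """Apply one insert/update/delete event to an in-memory copy of a table."""
    op, key = event["op"], event["key"]
    if op in ("c", "u"):
        # Create or update: keep the latest row image.
        table[key] = event["after"]
    elif op == "d":
        # Delete: drop the row if it is present.
        table.pop(key, None)
    else:
        raise ValueError(f"unknown op: {op!r}")


customers: Dict[str, dict] = {}
events = [
    {"op": "c", "key": "c-1", "after": {"country": "US"}},
    {"op": "u", "key": "c-1", "after": {"country": "DE"}},
    {"op": "c", "key": "c-2", "after": {"country": "FR"}},
    {"op": "d", "key": "c-2", "after": None},
]
for event in events:
    apply_change(customers, event)
```

After the replay, `customers` holds only the latest state of `c-1`. A real pipeline would also need ordering and delivery guarantees, which this sketch leaves out.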

Streaming Platform

Those events flow into a streaming platform—Apache Kafka, Apache Pulsar, AWS Kinesis, or Google Cloud Pub/Sub. This is your real-time data backbone. Events are processed immediately and made available to consumers within milliseconds.

According to the 2026 Data Streaming Landscape analysis, 90% of IT leaders are increasing their investments in data streaming infrastructure specifically to support AI agents. Market research suggests 80% of AI applications will use streaming data by 2026.

Semantic Layer

Raw data isn’t enough. AI agents need context. A semantic layer sits on top of your streaming data to provide business definitions, relationship mappings, and data quality rules. This layer answers questions like “what does ‘active customer’ actually mean?” and “which revenue figure is the source of truth?”

Data Freshness Monitoring

You need systems that continuously track when data was last updated and alert you when freshness degrades. This isn’t traditional uptime monitoring—it’s monitoring whether the data your agents are accessing is still current enough to support reliable decisions.
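
A freshness check can be as simple as comparing each source's last update time against a per-source age budget. The sketch below assumes you already record a last-updated timestamp per source; the source names and thresholds are made up for illustration, and `stale_sources` is a hypothetical helper.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional


def stale_sources(
    last_updated: Dict[str, datetime],
    max_age: Dict[str, timedelta],
    now: Optional[datetime] = None,
) -> List[str]:
    """Return the names of sources whose data is older than its allowed age."""
    now = now or datetime.now(timezone.utc)
    return sorted(
        name for name, ts in last_updated.items() if now - ts > max_age[name]
    )


# Illustrative values only: a fixed "now" keeps the example deterministic.
now = datetime(2026, 4, 20, 14, 0, tzinfo=timezone.utc)
last_updated = {
    "transactions": now - timedelta(seconds=2),
    "travel_status": now - timedelta(hours=6),
}
max_age = {
    "transactions": timedelta(seconds=30),
    "travel_status": timedelta(minutes=5),
}
alerts = stale_sources(last_updated, max_age, now)
```

Here `alerts` would name `travel_status`, the six-hour-old source, while the transaction feed stays within its budget. In practice the result would feed an alerting system rather than a variable.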

Agent Query Layer

Finally, your AI agents need an optimized query interface that lets them access both current state and historical context with minimal latency. This might be a high-performance database like Aerospike, a data lakehouse like Databricks, or a specialized vector database for RAG applications.

Research from Aerospike emphasizes that organizations must invest in a data backbone delivering both ultra-low latency and massive scalability. AI agents thrive on fast, fresh data streams—the need for accurate, comprehensive, real-time data that scales cannot be overstated.

What Happens When You Skip the Infrastructure Investment

Let’s be direct: you can’t retrofit real-time data access onto batch-based architectures and expect it to work reliably.

The companies trying this approach encounter predictable failure patterns:

Race Conditions: Agent A makes a decision based on data snapshot 1. Agent B makes a conflicting decision based on snapshot 2. Neither knows about the other’s action because the data layer doesn’t synchronize in real time.

Context Staleness: According to analysis of AI context failures, agents frequently have access to both current and outdated information but default to the stale version because it ranked higher in similarity search or was cached more aggressively.

Orchestration Drift: Research from InfoWorld found that agent-related production incidents dropped 71% after deploying event-based coordination infrastructure. Most eliminated incidents were race conditions and stale context bugs that are structurally impossible with proper real-time architecture.

Silent Degradation: The system doesn’t fail obviously. It just makes worse decisions over time as data freshness degrades. By the time you notice the problem, you’ve already made hundreds or thousands of bad decisions.
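
One common guard against the race condition described above is a version check on write: an agent may only update a record if the version it originally read is still the current one. This is a single-process sketch with hypothetical names (`Record`, `update_if_unchanged`); in a real system the compare-and-write step must be atomic inside the datastore.

```python
from dataclasses import dataclass


class StaleWriteError(Exception):
    """Raised when a write is based on a version that is no longer current."""


@dataclass
class Record:
    value: int
    version: int = 0


def update_if_unchanged(record: Record, expected_version: int, new_value: int) -> None:
    """Write only if nobody else has modified the record since it was read."""
    if record.version != expected_version:
        raise StaleWriteError(
            f"expected version {expected_version}, found {record.version}"
        )
    record.value = new_value
    record.version += 1


# Two agents read the same snapshot of a one-unit inventory record.
inventory = Record(value=1)
version_seen_by_a = inventory.version
version_seen_by_b = inventory.version

# Agent A reserves the last unit first.
update_if_unchanged(inventory, version_seen_by_a, new_value=0)

# Agent B's write is rejected instead of silently overwriting A's decision.
try:
    update_if_unchanged(inventory, version_seen_by_b, new_value=0)
    b_rejected = False
except StaleWriteError:
    b_rejected = True
```

The rejected agent can then re-read the current state and decide again, rather than acting on a snapshot that is no longer true.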

Here’s a real example from production failure analysis: a sales agent connected to Confluence and Salesforce worked perfectly in demos. In production, it offered a major customer a 50% discount nobody authorized. The root cause? An outdated pricing document in Confluence still referenced a promotional rate from two quarters ago. The agent treated it as current because nothing in the infrastructure flagged it as stale.

The documentation-reality gap isn’t just an accuracy problem—it’s a trust-destruction mechanism that makes AI agents unreliable at scale.

The Economics of Real-Time: When Does It Actually Pay Off?

Real-time data infrastructure isn’t cheap. Streaming platforms, CDC pipelines, semantic layers, and monitoring systems require investment in technology, engineering time, and operational overhead.

So when does it actually make economic sense?

Cloud-native data pipeline deployments are delivering 3.7× ROI on average according to Alation’s 2026 analysis, with the clearest gains in fraud detection, predictive maintenance, and real-time customer personalization.

The ROI calculation comes down to three factors:

Decision Velocity: How quickly do conditions change in your business? If you’re in e-commerce, financial services, logistics, or healthcare, conditions change by the minute. Batch processing means your agents are always operating with outdated information. The cost of wrong decisions based on stale data exceeds the infrastructure investment.

Decision Consequence: What’s the cost of a single wrong decision? In fraud detection, one missed fraudulent transaction can cost thousands of dollars. In healthcare, one outdated patient data point can have life-threatening consequences. High-consequence decisions justify real-time infrastructure.

Scale of Automation: How many autonomous decisions are your agents making per day? If it’s dozens, batch processing might be adequate. If it’s thousands or millions, the aggregate cost of decision errors from stale data quickly outweighs infrastructure costs.
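
A back-of-the-envelope way to combine these factors is to multiply decision volume by the share of decisions that go wrong because of stale data and by the cost of each error. The formula and every number below are illustrative assumptions, not figures from the studies cited in this article.

```python
def daily_stale_data_cost(
    decisions_per_day: float, stale_error_rate: float, cost_per_error: float
) -> float:
    """Expected daily cost of wrong decisions caused by stale data."""
    return decisions_per_day * stale_error_rate * cost_per_error


def breakeven_days(infrastructure_cost: float, daily_cost_avoided: float) -> float:
    """Days until avoided error costs cover the infrastructure investment."""
    if daily_cost_avoided <= 0:
        return float("inf")
    return infrastructure_cost / daily_cost_avoided


# Made-up inputs: 10,000 decisions/day, 0.5% stale-data errors, $200 per error.
daily_cost = daily_stale_data_cost(10_000, 0.005, 200.0)

# Made-up investment of $500,000, assuming real-time data removes these errors.
payback = breakeven_days(500_000.0, daily_cost)
```

With dozens of decisions per day the same arithmetic produces a payback period measured in years, which is why scale matters as much as consequence.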

According to comprehensive statistics on agentic AI adoption, the global AI agents market is projected to grow from $7.63 billion in 2025 to $182.97 billion by 2033—a 49.6% compound annual growth rate. That explosive growth is happening because organizations are discovering that agents with proper data infrastructure actually deliver value.

Building Real-Time Capability: A Practical Roadmap

If you’re starting from batch-based infrastructure and need to support AI agents with real-time data access, here’s a practical migration path:

Phase 1: Identify Critical Data Sources

Not all data needs real-time access. Start by identifying which data sources your AI agents actually query for autonomous decisions. Customer data? Inventory? Pricing? Transaction history? Map the data flows and prioritize based on decision frequency and consequence.

Phase 2: Implement CDC on High-Priority Sources

Enable Change Data Capture on your most critical databases. This captures every change as it happens and streams it to your data platform. Start with one or two sources, validate that the pipeline works reliably, then expand.

Phase 3: Deploy Streaming Infrastructure

Stand up your streaming platform—whether that’s Kafka, Pulsar, Kinesis, or another solution depends on your cloud strategy and technical requirements. Configure it for high availability and monitoring from day one.

Phase 4: Build the Semantic Layer

This is where many organizations stumble. Raw event streams aren’t enough—you need business context. Invest in data catalog tools, governance frameworks, and automated metadata management. Organizations struggling with scattered knowledge across systems need this layer to provide agents with authoritative, consistent definitions.

Phase 5: Implement Freshness Monitoring

Deploy monitoring systems that track data age and alert when freshness degrades below acceptable thresholds. This is your early warning system for infrastructure problems that would otherwise manifest as agent decision errors.

Phase 6: Migrate Agent Queries

Gradually migrate your AI agents from batch data queries to real-time streams. Do this incrementally, validating that decision quality improves before moving to the next agent or use case.

The timeline for this migration typically ranges from 3-9 months depending on your starting point and organizational complexity. The companies succeeding with AI agents built this infrastructure before deploying agents widely—not after pilots failed in production.

The Questions Your Leadership Team Should Be Asking

If you’re presenting AI agent initiatives to executives or board members, here are the infrastructure questions they should be asking (and you should be prepared to answer):

How fresh is the data our agents are accessing? If the answer is “it varies” or “I’m not sure,” that’s a red flag. Data freshness should be measurable, monitored, and consistent.

What happens when data sources conflict? Multiple systems often contain different versions of the same information. Which source is authoritative? How do agents know which to trust? If you don’t have clear answers, agents will make arbitrary choices.

Can we trace agent decisions back to the data that informed them? For regulatory compliance, debugging, and trust-building, you need data lineage. Every agent decision should be traceable to specific data sources with timestamps.

What’s our plan for scaling this infrastructure? Real-time data platforms need to handle increasing volumes as you deploy more agents and integrate more data sources. What’s your scaling strategy?

How do we know when data goes stale? Monitoring uptime isn’t enough. You need monitoring that tracks data age and alerts when freshness degrades before it impacts decision quality.
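
To make the traceability question concrete, here is a minimal sketch of a decision record that stores which data an agent used and how old that data was at decision time. The class and field names are hypothetical, not a standard schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class SourceRef:
    system: str        # where the data came from
    record_id: str     # which record was read
    as_of: datetime    # when that record was last updated


@dataclass
class DecisionRecord:
    agent: str
    action: str
    decided_at: datetime
    sources: List[SourceRef] = field(default_factory=list)

    def oldest_input_age_seconds(self) -> float:
        """Age of the stalest input at the moment the decision was made."""
        return max(
            ((self.decided_at - s.as_of).total_seconds() for s in self.sources),
            default=0.0,
        )


decided_at = datetime(2026, 4, 20, 14, 0, tzinfo=timezone.utc)
record = DecisionRecord(
    agent="fraud-agent",
    action="block_transaction",
    decided_at=decided_at,
    sources=[
        SourceRef("payments", "txn-1", datetime(2026, 4, 20, 13, 59, 30, tzinfo=timezone.utc)),
        SourceRef("behavior_profile", "cust-7", datetime(2026, 4, 20, 8, 0, tzinfo=timezone.utc)),
    ],
)
stalest = record.oldest_input_age_seconds()
```

Storing a record like this per decision lets you answer both "what did the agent see?" and "how old was it?" after the fact.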

According to analysis from MIT Technology Review, in late 2025 nearly two-thirds of companies were experimenting with AI agents, while 88% were using AI in at least one business function. Yet only one in 10 companies actually scaled their agents. The infrastructure gap is the primary reason.

Real-Time Data Access: The Competitive Moat You’re Building

Here’s the strategic insight most organizations miss: real-time data infrastructure for AI agents isn’t just an operational necessity. It’s a competitive moat.

The companies investing in this infrastructure now are building capabilities their competitors can’t easily replicate. Streaming data platforms, semantic layers, and data freshness monitoring create compound advantages:

Faster Time to Value: Once the infrastructure exists, deploying new AI agents becomes dramatically faster because the hard part—reliable data access—is already solved.

Higher Quality Decisions: Agents making decisions on current data consistently outperform agents working with stale information. That quality difference compounds over thousands of decisions daily.

Organizational Learning: Real-time infrastructure enables feedback loops that make agents smarter over time. Batch-based systems can’t close these loops fast enough to drive continuous improvement.

Regulatory Confidence: In industries with strict compliance requirements, being able to demonstrate that agent decisions are based on current, traceable data creates regulatory confidence that competitors lacking this capability can’t match.

Research indicates that AI-driven traffic grew 187% from January to December 2025, while traffic from AI agents and agentic browsers grew 7,851% year over year. The organizations capturing value from this explosion are the ones with infrastructure that supports reliable, real-time autonomous operations.

The Bottom Line on Real-Time Data for AI Agents

Real-time data access isn’t a feature. It’s the foundation.

If you’re deploying AI agents on batch-processed data, you’re deploying agents that will make outdated decisions. Some percentage of those decisions will be wrong. The only questions are: what percentage, and what will those mistakes cost?

The uncomfortable truth is that most AI agent failures aren’t model problems—they’re infrastructure problems. Organizations keep chasing better models while ignoring the data architecture that determines whether those models can function reliably.

According to comprehensive research on AI agent production failures, 27% of failures trace directly to data quality and freshness issues—not model design or harness architecture. The agents that succeed are the ones with infrastructure that delivers current, consistent, contextualized data at the moment of decision.

The companies winning with AI agents in 2026 are the ones that invested in streaming platforms, CDC pipelines, semantic layers, and freshness monitoring before deploying agents broadly. The companies still struggling are the ones trying to retrofit real-time capabilities onto batch architectures after pilots failed.

Which category does your organization fall into?

If you’re not sure, read our detailed analysis on real-time data access for AI agents for a deeper dive into the infrastructure decisions that determine whether AI agents work or fail at scale.

The window for building this as a competitive advantage is closing. Soon it will just be table stakes. The question is whether you’re building it now or explaining to your board later why your AI agents couldn’t deliver the promised value.

Read More

Ysquare Technology

20/04/2026

AI Agent Documentation Gap: Why Most Implementations Fail

Let’s be honest: you can’t teach an AI agent to do work that nobody can explain clearly. And that’s the exact trap most organizations walk into when deploying AI agents.

The promise sounds incredible: autonomous agents handling customer inquiries, processing approvals, and managing workflows, all while you sleep. But here’s the catch nobody mentions in the sales pitch: AI agents are only as good as the documentation they’re trained on. And in most enterprises, that documentation was written by humans, for humans, years ago, and it hasn’t kept up with how work actually gets done today.

This is the documentation reality gap. Your official process says one thing. Your team does something completely different. And when you hand those outdated documents to an AI agent and tell it to “just follow the process,” you’re not automating efficiency. You’re scaling chaos.

The Documentation Crisis Nobody Wants to Talk About

Process documentation in most enterprises is in terrible shape. Not because anyone intended it that way, but because documentation is treated as a compliance checkbox, not a living operational asset.

According to recent research, only 16% of organizations report having extremely well-documented workflows. That means 84% of companies are trying to deploy AI agents on shaky foundations. Even more telling: 49% of organizations admit that undocumented or ad-hoc processes impact their efficiency regularly.

Think about that for a second. Half of all businesses know their processes aren’t properly documented, yet they’re still attempting to hand those same processes to autonomous AI systems and expecting success.

The numbers tell the brutal truth: between 80% and 95% of enterprise AI projects fail to deliver meaningful ROI. And while there are multiple reasons for failure, documentation mismatch sits at the core of most disasters.

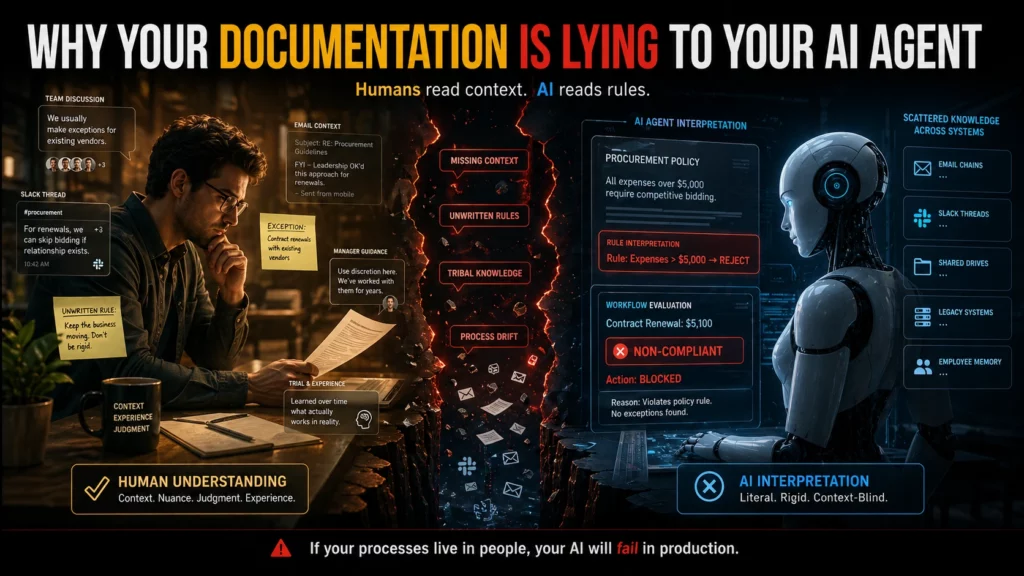

Why Your Documentation Is Lying to Your AI Agent

Here’s what most people don’t realize: your company’s documentation wasn’t designed to be machine-readable. It was written by someone who understood the context, the history, the unwritten rules, and the exceptions that “everyone just knows.”

An employee reading your procurement policy understands that when it says “expenses over $5,000 require competitive bidding,” there’s an implicit exception for contract renewals with existing vendors. They know this because someone told them during onboarding, or they watched how their manager handled it, or they learned it through trial and error.

An AI agent reading that same policy? It sees an absolute rule. No exceptions. So when a $5,100 contract renewal comes through, the agent flags it as non-compliant — blocking a routine business transaction and creating unnecessary friction.
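
Written as an explicit rule, the exception has to appear in the logic itself. The sketch below encodes the policy as described above, with the renewal exception made visible; the names (`Purchase`, `requires_competitive_bidding`) are hypothetical, and a real policy would likely carry more conditions.

```python
from dataclasses import dataclass

BIDDING_THRESHOLD_USD = 5_000  # threshold taken from the policy text above


@dataclass(frozen=True)
class Purchase:
    amount_usd: float
    is_renewal_with_existing_vendor: bool = False


def requires_competitive_bidding(purchase: Purchase) -> bool:
    """Apply the written rule plus the exception that 'everyone just knows'."""
    if purchase.is_renewal_with_existing_vendor:
        # The implicit exception, now stated explicitly.
        return False
    return purchase.amount_usd > BIDDING_THRESHOLD_USD


renewal = Purchase(amount_usd=5_100, is_renewal_with_existing_vendor=True)
new_vendor = Purchase(amount_usd=5_100)
```

With the exception encoded, the $5,100 renewal passes while a new $5,100 purchase still triggers bidding.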

Scattered knowledge across multiple systems makes this problem exponentially worse. When your actual processes live in Slack threads, email chains, and the heads of employees who’ve been there for years, no amount of AI sophistication can bridge that gap.

The Configuration Drift Problem: When Documentation Ages Badly

Even when organizations start with good documentation, there’s another silent killer: configuration drift.

Your systems evolve. Workflows get updated. Teams find workarounds. Exceptions become standard practice. And nobody updates the documentation to reflect reality.

Pavan Madduri, a senior platform engineer at Grainger whose research focuses on governing agentic AI in enterprise IT, points to this as the core flaw in vendor promises that agents can “learn from observing existing workflows.” Observation without context creates incomplete understanding. The agent might replicate the workflow, but it won’t understand why the workflow works that way, or when it should deviate.

ServiceNow and similar platforms tout their ability to learn from years of workflows that have run through their systems. The idea is elegant: no documentation required because the agent learns by watching. But that only works if those workflows were correct in the first place, and if they haven’t drifted over time into something the original architects wouldn’t recognize.

Real-World Consequences of Documentation Mismatch

This isn’t a theoretical problem. Organizations are losing real money and credibility because their AI agents are following outdated or incomplete documentation.

New York City’s MyCity chatbot became infamous for giving businesses illegal advice, telling them they could take workers’ tips, refuse tenants with housing vouchers, and ignore cash acceptance requirements. All of these are violations of actual law. The bot confidently dispensed this misinformation for months after the problems were reported, because its documentation didn’t match legal reality.

Air Canada’s chatbot promised customers a discount policy that didn’t exist, and when a customer held the company to it, a Canadian court ruled that Air Canada was liable for what its agent said. The precedent is worth millions, and it’s just the beginning.

In enterprise settings, the damage is often less public but equally expensive. An agent that misinterprets a procurement policy can lock up legitimate transactions. An agent that follows outdated security documentation can create vulnerabilities. An agent that executes based on old workflow diagrams can route approvals to the wrong people, delay critical decisions, or expose sensitive information to unauthorized users.

When your documentation lies about how processes actually work, AI agents don’t just fail — they fail at scale, with speed and consistency that human error could never match.

The Human-Readable vs. Machine-Readable Gap

Most enterprise documentation was written for humans who can:

- Infer context from incomplete information

- Recognize when a rule doesn’t apply to a specific situation

- Ask clarifying questions when something seems off

- Understand implied exceptions based on institutional knowledge

- Fill in gaps using common sense

AI agents can’t do any of that. They need documentation that is:

- Explicit — every exception documented, every edge case covered

- Complete — no gaps that require “just knowing” how things work

- Current — reflecting today’s reality, not last year’s process

- Unambiguous — one clear interpretation, not multiple valid readings

- Structured — organized in a way machines can parse and reference

The gap between these two documentation styles is where most AI agent failures originate. You hand the agent a human-friendly PDF and expect machine-level precision. It doesn’t work.

The Multi-Version Truth Problem

Here’s another pattern that kills AI implementations: when different teams maintain different versions of the “same” process.

Your HR handbook says remote work is encouraged. Your security policy says VPN access for customer data is restricted. Your IT operations guide has a third set of rules. An employee navigating this knows how to synthesize these documents and make a judgment call. An AI agent sees conflicting instructions and either freezes, picks one arbitrarily, or applies the wrong policy in the wrong context.

Why scattered knowledge silently sabotages your AI readiness comes down to this: when there’s no single source of truth, agents can’t learn what “correct” means. They see multiple versions of reality and have no reliable way to choose.

This creates what researchers call “context blindness”: agent responses don’t match your own documentation because the agent is pulling from outdated, incomplete, or conflicting sources.

How to Fix Your Documentation Before Deploying AI Agents

If you’re planning to deploy AI agents, or already struggling with implementations that aren’t working, here’s what needs to happen:

Audit your actual processes, not your documented processes. Shadow employees doing the work. Record what they actually do, not what the handbook says they should do. The delta between those two is your documentation debt, and it needs to be paid before AI can help.

Map where your process documentation lives. Is it in SharePoint? Confluence? Google Docs? Slack channels? Tribal knowledge? If it’s scattered across multiple systems and formats, consolidate it. Agents need a single, authoritative source they can query reliably.

Version control everything. Your documentation should have the same rigor as your code. Track changes. Review updates. Deprecate outdated versions clearly. An agent following last year’s documentation is worse than an agent with no documentation, because it’s confidently wrong.

Document exceptions explicitly. That “everyone just knows” exception? Write it down. Define when it applies. Provide examples. AI agents don’t have institutional memory. If it’s not in the documentation, it doesn’t exist.

Test your documentation with someone who’s never done the job. If they can follow your process documentation from start to finish without asking clarifying questions, you’re close to machine-readable. If they get stuck, confused, or need to make judgment calls based on context clues, your documentation isn’t ready for AI.

Implement continuous documentation maintenance. Every time a process changes, the documentation changes. Not “when someone gets around to it,” but immediately. Treat documentation like production code: changes require reviews, approvals, and deployment tracking.

The Strategic Question Most Organizations Skip

Here’s the question vendors won’t ask you, but you need to ask yourself: can you describe your critical processes completely and accurately, without relying on “that’s just how we’ve always done it”?

If the answer is no, or if there’s significant disagreement among your team about what the “right” process actually is, you’re not ready for AI agents. You don’t have a technology problem. You have an organizational clarity problem.

And that’s actually good news, because organizational clarity problems can be fixed. They just need to be fixed before you hand your processes to an autonomous system and tell it to execute at scale.

Building Documentation That Agents Can Actually Use

The future of enterprise documentation isn’t just writing better documents. It’s designing documentation systems that serve both human and machine readers effectively.

This means:

- Structured formats that machines can parse (not just PDFs)

- Linked data connecting related policies, exceptions, and edge cases

- Version history that allows rollback when changes cause problems

- Validation layers that catch conflicts between related documents

- Feedback loops that flag when documented processes diverge from observed behavior
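
As one illustration of a validation layer, the sketch below scans structured policy documents for settings that different documents define with different values. The document names, setting keys, and values are invented for the example, and `find_conflicts` is a hypothetical helper.

```python
from collections import defaultdict
from typing import Dict, List

# Hypothetical structured policies: document name -> {setting: value}
PolicyDocs = Dict[str, Dict[str, str]]


def find_conflicts(documents: PolicyDocs) -> Dict[str, Dict[str, List[str]]]:
    """Return settings that are given different values in different documents."""
    seen: Dict[str, Dict[str, List[str]]] = defaultdict(dict)
    for doc_name, settings in documents.items():
        for setting, value in settings.items():
            # Group document names by the value they assign to this setting.
            seen[setting].setdefault(value, []).append(doc_name)
    return {s: by_value for s, by_value in seen.items() if len(by_value) > 1}


documents = {
    "hr_handbook": {"remote_customer_data_access": "allowed", "expense_limit": "5000"},
    "security_policy": {"remote_customer_data_access": "vpn_only"},
    "it_ops_guide": {"remote_customer_data_access": "vpn_only", "expense_limit": "5000"},
}
conflicts = find_conflicts(documents)
```

Only `remote_customer_data_access` is reported, because the documents agree on `expense_limit`. A check like this can run whenever a document changes, so conflicts surface before an agent encounters them.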

Some organizations are experimenting with AI agents to help maintain documentation, using agents to identify drift, flag inconsistencies, and suggest updates based on observed workflows. It’s recursive, yes: using AI to fix the documentation that AI needs to function. But it’s also pragmatic.

Eugene Petrenko documented how 16 AI agents helped refactor documentation for other AI agents to use. The key insight? Documentation quality improved dramatically when evaluated by AI readers instead of human assumptions about what AI needs. The metrics were clear: documents that scored 7.0 before refactoring jumped to 9.0 afterward, because the team finally understood what “machine-readable” actually meant.

The Real Cost of Documentation Debt

Organizations rushing to deploy AI agents without fixing their documentation foundations are making an expensive bet. They’re wagering that AI sophistication can overcome organizational chaos. It can’t.

Poor documentation doesn’t become less of a problem when you add AI. It becomes a bigger one. As one practitioner put it: “If you have clean, structured, well-maintained processes, AI makes those faster and easier. If you have chaos, undocumented workarounds, inconsistent data, AI compounds that too. Runs your broken process faster and at higher volume than you ever could manually.”

The agent doesn’t resolve the documentation gap. It scales it.

This is why only 26% of organizations that have implemented AI agents rate them as “completely successful.” The technology works. But the foundations don’t.

What Success Actually Looks Like

Organizations that succeed with AI agents share a common pattern: they invested in documentation excellence before they deployed the first agent.

Snowflake took a data-first approach to AI implementation. Instead of rushing to deploy AI tools across the organization, the company built robust data infrastructure and documentation that AI systems could trust. David Gojo, head of sales data science at Snowflake, emphasizes that successful AI deployments require “accurate, timely information that AI systems can trust.”

The result? AI tools that sales teams actually adopted because the recommendations were backed by reliable data and clear documentation, not generating false confidence from incomplete information.

Your Next Move

If you’re considering AI agents, start with an honest documentation audit. Not the audit where you check if documentation exists, but the audit where you test if it reflects reality.

Walk through your critical processes. Compare what’s documented to what actually happens. Identify the gaps. Quantify the drift. And be brutally honest about whether your organization can articulate its processes clearly enough for a machine to follow them.

Because here’s the hard truth: if your documentation doesn’t match reality, your AI agents will fail. Not eventually. Immediately. And the failure will be loud, expensive, and difficult to fix after the fact.

The good news? This is fixable. Documentation debt can be paid down. Processes can be clarified. Knowledge can be consolidated. But it needs to happen before you deploy agents — not after they’ve already scaled your broken processes to catastrophic proportions.

The question isn’t whether your organization will invest in documentation quality. The question is whether you’ll do it before or after your AI agents fail publicly.

Ysquare Technology

20/04/2026

Why Scattered Knowledge Is Killing Your AI Agent Implementation (And What to Do About It)

Your company just invested six figures in AI agents. The promise? Automated workflows, instant answers, lightning-fast decisions. The reality? Your agents keep giving wrong answers, missing critical information, and frustrating your team more than helping them.

Here’s the thing most people miss: It’s not the AI that’s failing. It’s your knowledge.

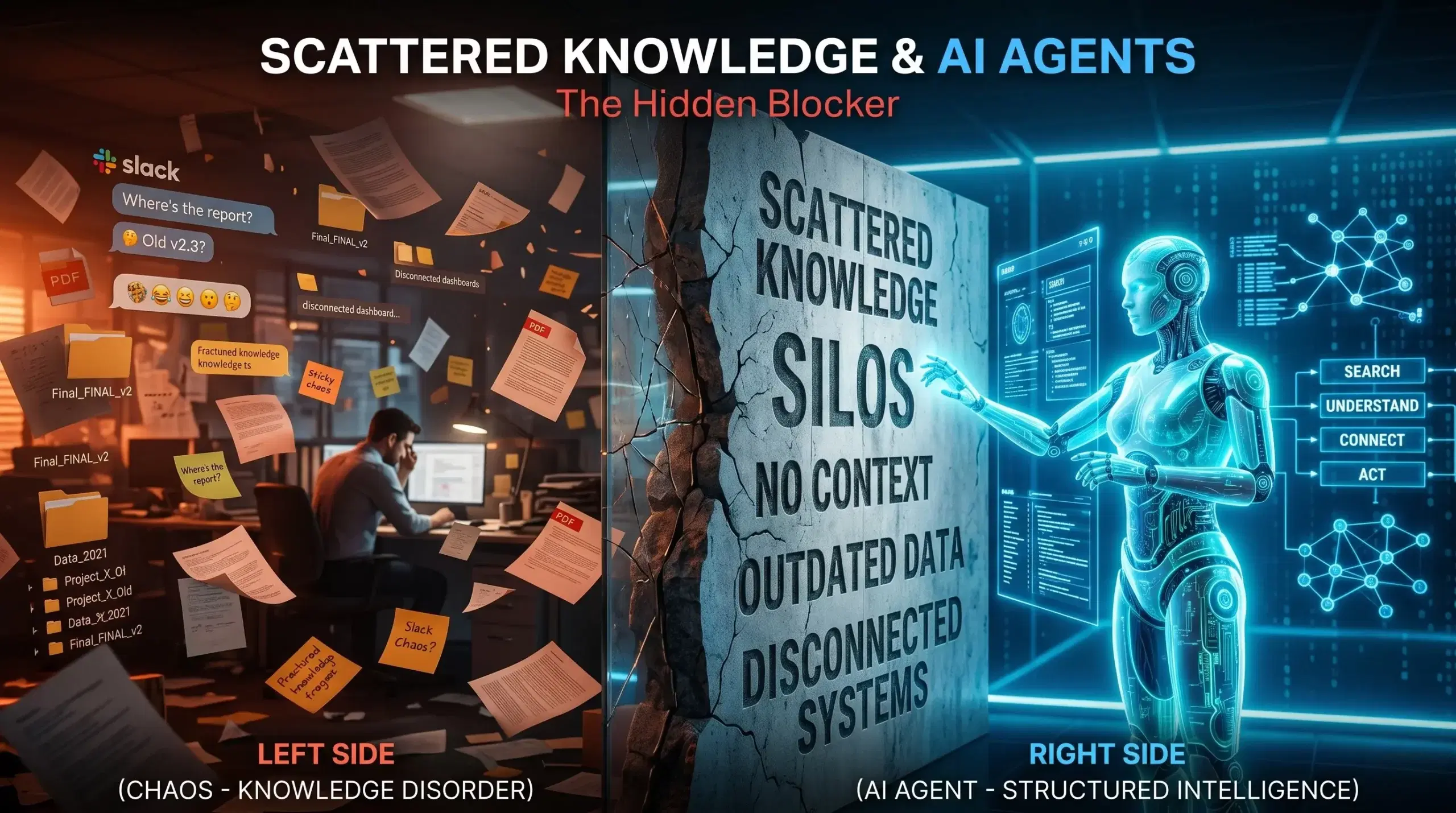

If your information lives across Slack threads, SharePoint sites, Google Docs, email chains, and someone’s desktop folder labeled “Important – Final – FINAL v2,” your AI agents don’t stand a chance. They can’t find what they need because you’ve built a knowledge maze, not a knowledge base.

Let’s be honest about what scattered knowledge really costs you — and more importantly, how to fix it before your AI investment becomes another failed tech initiative.

The Real Cost of Knowledge Chaos in the AI Era

When information sprawls across multiple tools and teams, it creates what experts call “knowledge silos.” Sounds technical. Feels expensive.

Companies lose between $2.4 million and $240 million annually in lost productivity due to knowledge silos, depending on their size and industry. That’s not a rounding error. That’s revenue you could be capturing.

But here’s where it gets worse for organizations deploying AI agents. Employees spend roughly 20% of their workweek — one full day — searching for information or asking colleagues for help. Now multiply that frustration by the speed at which AI agents need to operate.

Traditional employees at least know where to look when they hit a dead end. They know Sarah in Sales probably has that updated pricing deck, or that the engineering team keeps their documentation in Confluence (most of the time). AI agents don’t have that institutional memory. When they encounter scattered knowledge, they simply fail.

According to a 2025 McKinsey study, data silos cost businesses approximately $3.1 trillion annually in lost revenue and productivity. The shift to AI doesn’t solve this problem — it amplifies it.

Why AI Agents Demand Unified Knowledge (Not Just “Good Enough” Documentation)

Think about how your team currently finds information. Someone asks a question in Slack. Three people respond with slightly different answers. Someone else jumps in with “I think that process changed last month.” Eventually, someone digs up a document from 2023 that’s “probably still accurate.”

Humans can navigate this chaos. We read between the lines, verify with subject matter experts, and apply context based on what we know about the business. AI agents can’t do any of that.

When an agent gives the wrong answer, the correct information often exists somewhere in your organization — scattered across SharePoint, Confluence, email chains, and tribal knowledge — but your agent simply can’t find it.

Here’s what makes scattered knowledge particularly destructive for AI implementations:

Information lives in isolation. Your customer service knowledge base hasn’t been updated with the product changes engineering shipped last quarter. Your sales playbook doesn’t reflect the pricing structure finance approved two weeks ago. Each team operates with their own version of truth, and your AI agent has to pick which one to believe.

Unstructured knowledge limits accuracy. AI agents need clean, organized, validated information to function properly. When your knowledge exists as casual Slack conversations, outdated PDFs, and half-finished wiki pages, that fragmentation, combined with the limits of manual knowledge capture and organization, often results in decreased productivity and missed opportunities for innovation.

Context gets lost. A document sitting in a folder tells an AI agent nothing about whether it’s current, who approved it, or if it’s been superseded by newer information. Unlike structured data, which is well organized and more easily processed by AI tools, unstructured data is sprawling and unverified, and that poses tricky problems for agentic tool development.

The “Single Source of Truth” Myth That’s Holding You Back

Every organization says they want a single source of truth. Almost none have one.

What most companies actually have is a “preferred source of truth” (the official wiki that nobody updates) and a “working source of truth” (the Slack channel where real work gets discussed). AI agents need the latter, but they only get trained on the former.

Shared understanding among AI agents could quickly become shared misconception without ongoing maintenance. If you’re feeding your agents outdated documentation while your team operates based on recent conversations and tribal knowledge, you’re setting them up to confidently deliver wrong answers.

The real question isn’t “Where should we centralize everything?” The real question is “How do we keep knowledge current, connected, and contextual across all the places it naturally lives?”

What Good Knowledge Management Actually Looks Like for AI Agents

Companies that successfully deploy AI agents don’t necessarily have less knowledge. They have better-organized knowledge with clear ownership and maintenance processes.

Here’s what separates organizations ready for AI from those still struggling:

Clear ownership of every knowledge asset. Someone owns each piece of information — not just the creation, but the ongoing accuracy. When a product feature changes, there’s a person responsible for updating that knowledge across all relevant systems. No orphaned documents. No “I think someone was supposed to update that.”

Connected information architecture. Your pricing information should automatically flow to sales training materials, customer service scripts, and product documentation. Commonly cited research puts the productivity gain from knowledge sharing at around 35%, and finds that employees spend about 20% of the working week searching for information they need for their jobs. Connected systems can cut that search time substantially.

Version control that actually works. One of the more significant challenges is identifying the latest, accurate versions to include in AI models, retrieval-augmented generation systems, and AI agents. If your agent can’t tell which version of a document is current, it will default to whatever it finds first — which is often wrong.

Metadata that tells the story. Every document should answer: Who created this? When? Who approved it? When was it last verified? What’s the review schedule? Is this still current? External unstructured data requires thoughtful data engineering to extract and maintain structured metadata such as creation dates, categories, severity levels, and service types.

Active curation, not passive storage. Knowledge curation transforms scattered information into agent-ready intelligence by systematically selecting, prioritizing, and unifying sources. This isn’t a one-time migration project. It’s an ongoing practice of keeping your knowledge ecosystem healthy.
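To make the metadata and version-control points concrete, here is a minimal Python sketch of a metadata record that lets an agent decide which copy of a document to trust. Everything in it is an assumption for illustration: the `KnowledgeAsset` class, its field names, and the rule that "current" means approved, not superseded, and verified within its review window. Your own definition of current may differ.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass(frozen=True)
class KnowledgeAsset:
    """Hypothetical metadata record for one document (field names are illustrative)."""
    doc_id: str
    title: str
    owner: str                      # who is responsible for ongoing accuracy
    approved_by: Optional[str]      # None means the document was never approved
    created: date
    last_verified: date
    review_interval_days: int       # the review schedule, expressed in days
    superseded_by: Optional[str] = None  # doc_id of the newer version, if any

    def is_current(self, today: date) -> bool:
        """Assumed rule: approved, not superseded, verified within the review window."""
        if self.approved_by is None or self.superseded_by is not None:
            return False
        return today - self.last_verified <= timedelta(days=self.review_interval_days)


def pick_current(assets: list[KnowledgeAsset], today: date) -> Optional[KnowledgeAsset]:
    """Return the most recently verified current asset, or None if nothing qualifies."""
    current = [a for a in assets if a.is_current(today)]
    return max(current, key=lambda a: a.last_verified, default=None)
```

The design choice worth noting is the `None` return: when no version passes the checks, the agent gets an explicit "nothing trustworthy here" signal instead of defaulting to whatever it finds first.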

The Hidden Knowledge Gaps That Break AI Agents

Even when organizations think they’ve centralized their knowledge, critical gaps remain. These gaps don’t show up in a content audit, but they destroy AI agent performance:

The expertise that lives in people’s heads. Your senior account manager knows that Enterprise clients get special payment terms, but that’s not documented anywhere. Your lead engineer knows that certain API endpoints are unstable under specific conditions, but the official docs don’t mention it. This tribal knowledge is invisible to AI agents until they fail because of it.

Process knowledge versus documented process. Your official onboarding process says new hires complete training in two weeks. The reality? Managers always extend it to three weeks because two isn’t realistic. When documented processes don’t reflect how work actually happens, the gap leads to incorrect decisions. AI agents trained on official documentation will give answers based on the fantasy version of your processes.

The context that makes information actionable. A discount code might be technically active, but customer service shouldn’t offer it because it’s reserved for churn prevention. A feature might be live, but sales shouldn’t mention it because it’s not ready for general availability. The information alone isn’t enough — AI agents need the context around when and how to use it.

Cross-functional dependencies nobody documented. Marketing launches a campaign that Sales wasn’t looped into. Engineering deprecates an API that Customer Success was using in their workflows. When Team A needs information from Team B to complete their work, but that knowledge stays locked away, projects stall. AI agents can’t navigate these dependencies if they’re not mapped.
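One way to capture the "context that makes information actionable" gap is to store usage rules next to the fact itself. The Python sketch below is only an illustration under assumed names: `UsageRule`, `may_use`, the role and purpose labels, and the `SAVE20` code are all hypothetical, not part of any real system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageRule:
    """Hypothetical record pairing a fact with the context in which it may be used."""
    item: str
    active: bool
    allowed_roles: frozenset[str]
    allowed_purposes: frozenset[str]
    note: str = ""


def may_use(rule: UsageRule, role: str, purpose: str) -> bool:
    """An item is usable only if it is active AND the role and purpose are both allowed."""
    return rule.active and role in rule.allowed_roles and purpose in rule.allowed_purposes


# Illustrative example: a discount code that is technically active
# but reserved for churn prevention.
churn_discount = UsageRule(
    item="SAVE20",
    active=True,
    allowed_roles=frozenset({"retention"}),
    allowed_purposes=frozenset({"churn_prevention"}),
    note="Reserved for churn prevention; not for general customer service.",
)
```

With a record like this, "the code is active" and "this agent may offer the code" become two separate questions, which is exactly the distinction the documentation usually leaves out.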

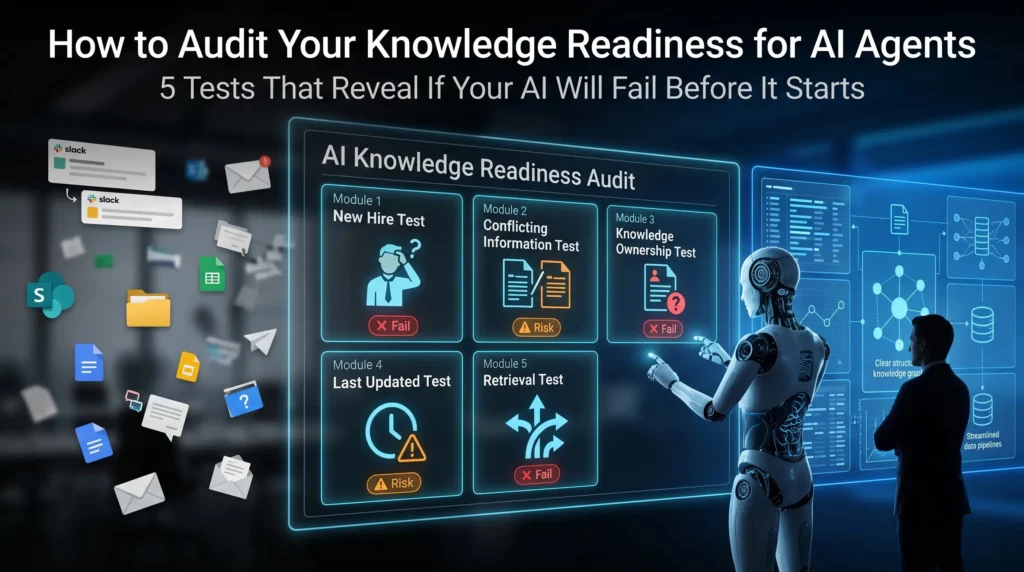

How to Audit Your Knowledge Readiness for AI Agents

Before you invest another dollar in AI implementation, run this diagnostic. It will tell you whether your knowledge infrastructure can actually support autonomous agents:

The “new hire test.” Could a brand new employee find the answer to a routine customer question using only your documented knowledge base? If they’d need to ask three people and dig through Slack history, your AI agent will fail too.

The “conflicting information test.” Search for your return policy across all your systems. How many different versions do you find? If the answer is more than one, your knowledge is fragmented. When different files, tools, and teams produce conflicting data and there is no single reliable source, agents struggle.

The “knowledge owner test.” Pick ten critical documents. Can you identify who owns each one? Who updates them when things change? If the answer is “whoever created it three years ago but they left the company,” you have an ownership problem.

The “last updated test.” Look at your top 20 most-accessed knowledge articles. When were they last reviewed? Anyone who has stumbled across an old SharePoint site or outdated shared folder knows how quickly documentation can fall out of date and become inaccurate. Humans can spot these red flags. AI agents can’t.

The “retrieval test.” Ask five people across different departments to find the same piece of information. How many different places do they look? How long does it take? If everyone has a different search strategy, your knowledge isn’t as organized as you think.
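Two of these tests, the conflicting information test and the last updated test, can be partly automated. The following Python sketch assumes you have already exported the relevant text and review dates from your systems. The function names, the 180-day default threshold, and the whitespace-and-case normalization are assumptions chosen for illustration, not a standard.

```python
from collections import defaultdict
from datetime import date


def normalize(text: str) -> str:
    """Collapse case and whitespace so cosmetic differences do not count as conflicts."""
    return " ".join(text.lower().split())


def find_versions(copies: dict[str, str]) -> dict[str, list[str]]:
    """Group system names by the normalized text they hold.

    More than one key in the result means the systems disagree.
    """
    groups: dict[str, list[str]] = defaultdict(list)
    for system, text in copies.items():
        groups[normalize(text)].append(system)
    return dict(groups)


def stale_articles(
    last_reviewed: dict[str, date], today: date, max_age_days: int = 180
) -> list[str]:
    """Return article names whose last review is older than max_age_days, oldest first."""
    stale = [
        (reviewed, name)
        for name, reviewed in last_reviewed.items()
        if (today - reviewed).days > max_age_days
    ]
    return [name for _reviewed, name in sorted(stale)]
```

Exact-text comparison only catches literal disagreement. Two policies worded differently but meaning the same thing would still show up as separate versions, so treat the output as a list of places to look rather than a verdict.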

Building an AI-Ready Knowledge Foundation: The Practical Path Forward

Here’s what most consultants won’t tell you: You don’t need to fix everything before deploying AI agents. You need to fix the right things in the right order.

Start with your highest-impact knowledge domains. Where do wrong answers cost you the most? Customer service? Sales enablement? Technical support? Start there. Apply impact filters prioritizing sources that drive revenue, reduce risk, or unblock high-volume tasks. A pricing database enabling deal closure ranks higher than archived meeting notes.

Create a knowledge governance model. Assign clear owners. Establish review cycles. Build update workflows. Unlike traditional knowledge management systems, context-aware AI considers the user role, workflow stage, and policy requirements. Your governance model should support this by ensuring the right information gets to the right agents at the right time.

Connect your knowledge sources, don’t consolidate them. You don’t need to move everything into one system. You need systems that talk to each other. The real value comes from converting fragmented information into contextual, workflow-ready intelligence — not just faster retrieval.

Implement structured metadata. Add consistent tags, categories, and attributes to your knowledge assets. This metadata helps AI agents understand not just what information says, but when it’s relevant, who should use it, and how current it is.

Build feedback loops. When your AI agent gives a wrong answer, that should trigger a knowledge review. Wrong answers are symptoms of knowledge gaps, so treat them as diagnostic signals. Discovery tools can support this by profiling your content and enabling training on your historical data.

Invest in knowledge curation, not just content creation. Most organizations have enough knowledge. They don’t have enough organized, validated, accessible knowledge. The key discovery question cuts through organizational assumptions: “When an agent gives the wrong answer, where would a human expert double-check?” This reveals gaps between official documentation and working knowledge.
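The impact filter and the feedback loop described above can be sketched in a few lines of Python. This is a rough illustration under stated assumptions: the `KnowledgeSource` fields, the one-point-per-filter scoring, and the 1,000-queries-per-month threshold for "high volume" are all invented for the example and would need tuning against your own data.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class KnowledgeSource:
    """Hypothetical description of one knowledge source."""
    name: str
    drives_revenue: bool
    reduces_risk: bool
    monthly_queries: int  # rough proxy for "high-volume tasks"


def impact_score(src: KnowledgeSource, volume_threshold: int = 1000) -> int:
    """Count how many of the three impact filters a source passes (0 to 3)."""
    return (
        int(src.drives_revenue)
        + int(src.reduces_risk)
        + int(src.monthly_queries >= volume_threshold)
    )


def rank_sources(sources: list[KnowledgeSource]) -> list[KnowledgeSource]:
    """Highest impact first; ties broken by query volume."""
    return sorted(sources, key=lambda s: (-impact_score(s), -s.monthly_queries))


def review_queue(wrong_answers: list[tuple[str, str]]) -> list[tuple[str, int]]:
    """Feedback loop: each wrong answer is (question, source_name).

    Returns sources ordered by how many wrong answers traced back to them,
    so the worst offenders are reviewed first.
    """
    counts = Counter(source for _question, source in wrong_answers)
    return counts.most_common()
```

The scoring is deliberately crude. Its purpose is to force an explicit ordering, so that a pricing database gets attention before archived meeting notes, not to produce a precise number.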

The Questions Leaders Should Be Asking (But Usually Aren’t)

If you’re a CEO, CTO, or business leader evaluating AI agent readiness, stop asking “What’s the best AI platform?” Start asking these questions instead:

- Can we confidently point to a single authoritative answer for our top 100 business questions?

- When critical information changes, how long does it take to update across all relevant systems?

- If our AI agent answers a customer question incorrectly, could we trace back to why?

- Do we have governance processes for knowledge creation, review, and retirement?

- What percentage of our organizational knowledge exists only in employee heads or informal channels?

The answers to these questions determine whether your AI investment delivers value or becomes another expensive failed experiment.

What Success Actually Looks Like

Organizations that nail knowledge management for AI agents don’t have perfect documentation. They have living, maintained, connected knowledge ecosystems.

AI agents are helping organizations rethink how they capture, organize, and tap into their collective knowledge — acting more like intelligent coworkers able to understand, reason, and take action.

But this only works when the knowledge foundation is solid. When information flows freely across systems. When ownership is clear. When currency is tracked. When context is preserved.

The companies seeing real ROI from AI agents didn’t start with the sexiest AI models. They started by fixing their knowledge infrastructure. They recognized that organizations need trusted, company-specific data for agentic AI to truly create value — the unstructured data inside emails, documents, presentations, and videos.

The Bottom Line

Your AI agents are only as good as the knowledge they can access. Scattered, siloed, outdated information doesn’t become magically useful just because you’ve deployed advanced AI models.

The gap between AI hype and AI reality isn’t about the technology. It’s about the foundation. Companies rushing to implement AI agents without fixing their knowledge infrastructure are building on quicksand.

The good news? Knowledge management is solvable. It’s not a sexy transformation project, but it’s the difference between AI agents that actually work and ones that just frustrate your team.

The question isn’t whether you should fix your scattered knowledge problem. The question is whether you’ll fix it before or after your AI initiative fails.

Ysquare Technology

20/04/2026