AI Agent Documentation Gap: Why Most Implementations Fail

Let’s be honest: you can’t teach an AI agent to do work that nobody can explain clearly. And that’s the exact trap most organizations walk into when deploying AI agents.

The promise sounds incredible: autonomous agents handling customer inquiries, processing approvals, and managing workflows, all while you sleep. But here’s the catch nobody mentions in the sales pitch: AI agents are only as good as the documentation they’re trained on. And in most enterprises, that documentation was written by humans, for humans, years ago, and it hasn’t kept up with how work actually gets done today.

This is the documentation reality gap. Your official process says one thing. Your team does something completely different. And when you hand those outdated documents to an AI agent and tell it to “just follow the process,” you’re not automating efficiency. You’re scaling chaos.

The Documentation Crisis Nobody Wants to Talk About

Process documentation in most enterprises is in terrible shape. Not because anyone intended it that way, but because documentation is treated as a compliance checkbox, not a living operational asset.

According to recent research, only 16% of organizations report having extremely well-documented workflows. That means as many as 84% of companies may be trying to deploy AI agents on shakier foundations. Even more telling: 49% of organizations admit that undocumented or ad-hoc processes impact their efficiency regularly.

Think about that for a second. Nearly half of all businesses know their processes aren’t properly documented, yet many are still attempting to hand those same processes to autonomous AI systems and expecting success.

The numbers tell the brutal truth: between 80% and 95% of enterprise AI projects fail to deliver meaningful ROI. And while there are multiple reasons for failure, documentation mismatch sits at the core of many of them.

Why Your Documentation Is Lying to Your AI Agent

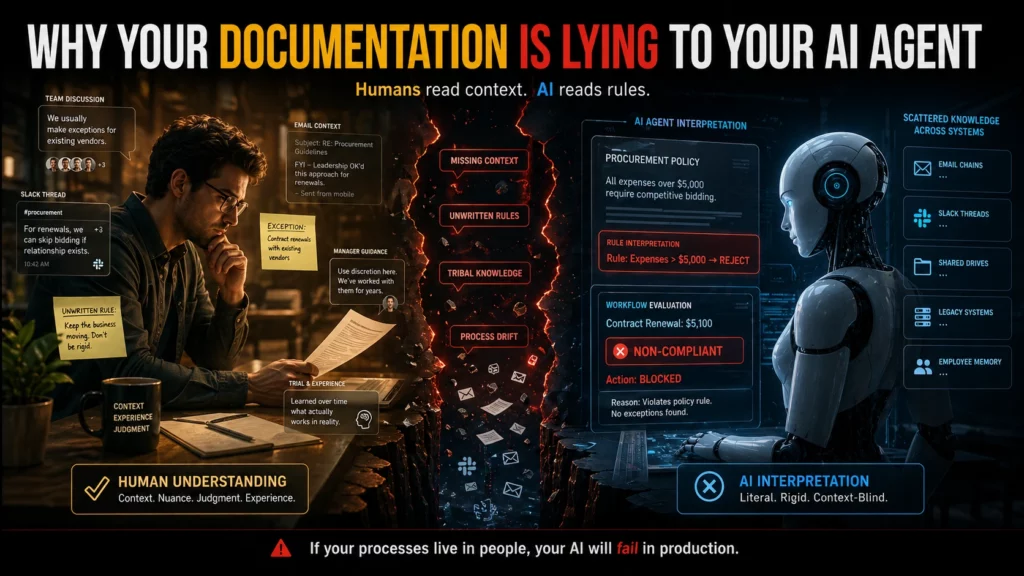

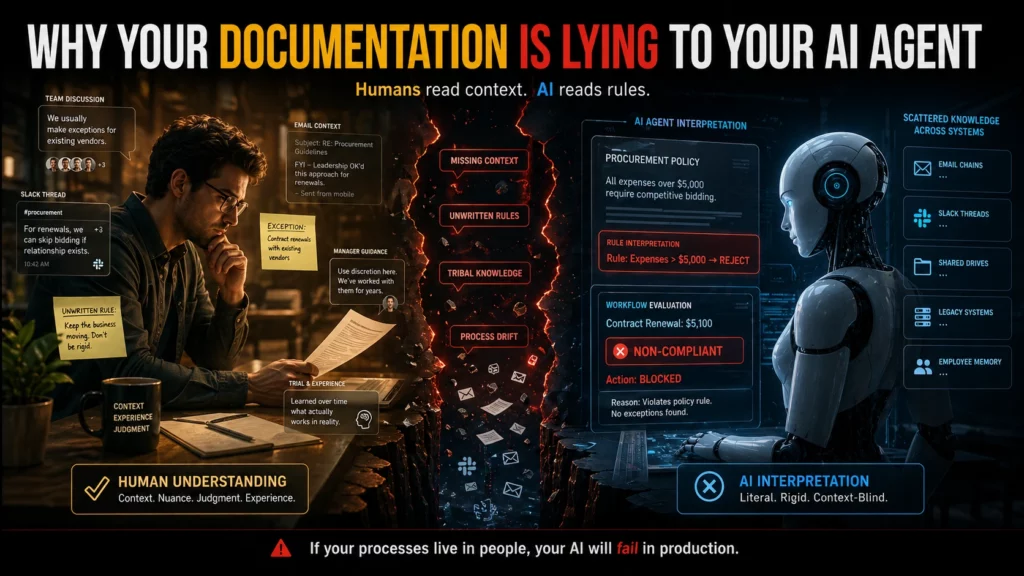

Here’s what most people don’t realize: your company’s documentation wasn’t designed to be machine-readable. It was written by someone who understood the context, the history, the unwritten rules, and the exceptions that “everyone just knows.”

An employee reading your procurement policy understands that when it says “expenses over $5,000 require competitive bidding,” there’s an implicit exception for contract renewals with existing vendors. They know this because someone told them during onboarding, or they watched how their manager handled it, or they learned it through trial and error.

An AI agent reading that same policy? It sees an absolute rule. No exceptions. So when a $5,100 contract renewal comes through, the agent flags it as non-compliant — blocking a routine business transaction and creating unnecessary friction.
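One way to make that implicit exception visible to an agent is to write it down as data next to the rule. The sketch below is illustrative only: the `Expense` type, its `is_renewal_with_existing_vendor` field, and the `requires_competitive_bidding` function are hypothetical names, and the $5,000 threshold and the renewal exception are taken from the example above rather than from any real policy.

```python
from dataclasses import dataclass

BIDDING_THRESHOLD_USD = 5_000  # threshold from the example policy above


@dataclass(frozen=True)
class Expense:
    """Hypothetical record describing a single purchase request."""
    amount_usd: float
    is_renewal_with_existing_vendor: bool = False


def requires_competitive_bidding(expense: Expense) -> bool:
    """Apply the bidding rule with its exception stated explicitly."""
    if expense.amount_usd <= BIDDING_THRESHOLD_USD:
        return False
    # The exception that "everyone just knows", now written down.
    if expense.is_renewal_with_existing_vendor:
        return False
    return True


renewal = Expense(amount_usd=5_100, is_renewal_with_existing_vendor=True)
new_purchase = Expense(amount_usd=5_100)

print(requires_competitive_bidding(renewal))       # False
print(requires_competitive_bidding(new_purchase))  # True
```

With the exception encoded, the $5,100 renewal passes while a new $5,100 purchase is still routed to bidding. The point is not the code itself but that the exception now exists somewhere an agent can read it.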

Scattered knowledge across multiple systems makes this problem exponentially worse. When your actual processes live in Slack threads, email chains, and the heads of employees who’ve been there for years, no amount of AI sophistication can bridge that gap.

The Configuration Drift Problem: When Documentation Ages Badly

Even when organizations start with good documentation, there’s another silent killer: configuration drift.

Your systems evolve. Workflows get updated. Teams find workarounds. Exceptions become standard practice. And nobody updates the documentation to reflect reality.

Pavan Madduri, a senior platform engineer at Grainger whose research focuses on governing agentic AI in enterprise IT, points to this as the core flaw in vendor promises that agents can “learn from observing existing workflows.” Observation without context creates incomplete understanding. The agent might replicate the workflow, but it won’t understand why the workflow works that way, or when it should deviate.

ServiceNow and similar platforms tout their ability to learn from years of workflows that have run through their systems. The idea is elegant: no documentation required because the agent learns by watching. But that only works if those workflows were correct in the first place, and if they haven’t drifted over time into something the original architects wouldn’t recognize.

Real-World Consequences of Documentation Mismatch

This isn’t a theoretical problem. Organizations are losing real money and credibility because their AI agents are following outdated or incomplete documentation.

New York City’s MyCity chatbot became infamous for giving businesses illegal advice, telling them they could take workers’ tips, refuse tenants with housing vouchers, and ignore cash acceptance requirements. All violations of actual law. The bot confidently dispensed this misinformation for months after the problems were reported, because its documentation didn’t match legal reality.

Air Canada’s chatbot promised a customer a discount policy that didn’t exist, and when the customer held the company to it, a Canadian tribunal ruled that Air Canada was liable for what its agent said. The individual award was small, but the precedent is not: companies are responsible for what their agents tell customers, and this is just the beginning.

In enterprise settings, the damage is often less public but equally expensive. An agent that misinterprets a procurement policy can lock up legitimate transactions. An agent that follows outdated security documentation can create vulnerabilities. An agent that executes based on old workflow diagrams can route approvals to the wrong people, delay critical decisions, or expose sensitive information to unauthorized users.

When your documentation lies about how processes actually work, AI agents don’t just fail — they fail at scale, with speed and consistency that human error could never match.

The Human-Readable vs. Machine-Readable Gap

Most enterprise documentation was written for humans who can:

- Infer context from incomplete information

- Recognize when a rule doesn’t apply to a specific situation

- Ask clarifying questions when something seems off

- Understand implied exceptions based on institutional knowledge

- Fill in gaps using common sense

AI agents can’t do any of that. They need documentation that is:

- Explicit — every exception documented, every edge case covered

- Complete — no gaps that require “just knowing” how things work

- Current — reflecting today’s reality, not last year’s process

- Unambiguous — one clear interpretation, not multiple valid readings

- Structured — organized in a way machines can parse and reference

The gap between these two documentation styles is where most AI agent failures originate. You hand the agent a human-friendly PDF and expect machine-level precision. It doesn’t work.

The Multi-Version Truth Problem

Here’s another pattern that kills AI implementations: when different teams maintain different versions of the “same” process.

Your HR handbook says remote work is encouraged. Your security policy says VPN access for customer data is restricted. Your IT operations guide has a third set of rules. An employee navigating this knows how to synthesize these documents and make a judgment call. An AI agent sees conflicting instructions and either freezes, picks one arbitrarily, or applies the wrong policy in the wrong context.

Scattered knowledge silently sabotages your AI readiness for one reason: when there’s no single source of truth, agents can’t learn what “correct” means. They see multiple versions of reality and have no reliable way to choose.

This creates what researchers call “context blindness”: agent responses don’t match your own documentation because the agent is pulling from outdated, incomplete, or conflicting sources.
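Conflicts like these can be surfaced mechanically once each document's rules are extracted into a common shape. The following is a minimal sketch under stated assumptions: the `(source, topic, value)` tuples, the topic names, and the `find_conflicts` function are all hypothetical, and the sample values are a simplified stand-in for the HR, security, and IT documents described above.

```python
from collections import defaultdict

# Hypothetical extracted rules: (source document, topic, stated value).
rules = [
    ("HR handbook", "remote_customer_data_access", "allowed"),
    ("Security policy", "remote_customer_data_access", "restricted"),
    ("IT operations guide", "remote_customer_data_access", "allowed_with_approval"),
    ("HR handbook", "expense_approval_limit_usd", "5000"),
    ("Finance policy", "expense_approval_limit_usd", "5000"),
]


def find_conflicts(rules):
    """Return topics where sources disagree, as {topic: {value: [sources]}}."""
    by_topic = defaultdict(lambda: defaultdict(list))
    for source, topic, value in rules:
        by_topic[topic][value].append(source)
    return {
        topic: dict(values)
        for topic, values in by_topic.items()
        if len(values) > 1  # more than one distinct answer means a conflict
    }


conflicts = find_conflicts(rules)
for topic, values in conflicts.items():
    print(topic, values)
```

Here only `remote_customer_data_access` is reported, because three sources give three different answers, while the expense limit is consistent across its two sources. Deciding which source wins is still a human decision; the check only makes the disagreement visible before an agent has to guess.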

How to Fix Your Documentation Before Deploying AI Agents

If you’re planning to deploy AI agents, or already struggling with implementations that aren’t working, here’s what needs to happen:

Audit your actual processes, not your documented processes. Shadow employees doing the work. Record what they actually do, not what the handbook says they should do. The delta between those two is your documentation debt, and it needs to be paid before AI can help.
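That delta can be made concrete by comparing the documented steps with the steps recorded while shadowing. The sketch below uses Python's standard `difflib.SequenceMatcher`; the step names and the `documentation_delta` function are hypothetical examples, not a prescribed audit method.

```python
import difflib

# Hypothetical step lists: what the handbook says vs. what was observed.
documented = [
    "receive request",
    "manager approval",
    "finance approval",
    "issue purchase order",
]
observed = [
    "receive request",
    "manager approval",
    "issue purchase order",
    "notify finance by email",
]


def documentation_delta(documented, observed):
    """Return (documented steps not performed, performed steps not documented, similarity)."""
    matcher = difflib.SequenceMatcher(a=documented, b=observed, autojunk=False)
    skipped, undocumented = [], []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag in ("delete", "replace"):
            skipped.extend(documented[i1:i2])
        if tag in ("insert", "replace"):
            undocumented.extend(observed[j1:j2])
    return skipped, undocumented, matcher.ratio()


skipped, undocumented, similarity = documentation_delta(documented, observed)
print("Documented but not performed:", skipped)
print("Performed but not documented:", undocumented)
print(f"Similarity: {similarity:.2f}")
```

Each item in the first two lists is a candidate piece of documentation debt: either the handbook describes a step nobody performs, or the team performs a step nobody wrote down. The similarity score is only a rough indicator and should not be read as a readiness threshold.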

Map where your process documentation lives. Is it in SharePoint? Confluence? Google Docs? Slack channels? Tribal knowledge? If it’s scattered across multiple systems and formats, consolidate it. Agents need a single, authoritative source they can query reliably.

Version control everything. Your documentation should have the same rigor as your code. Track changes. Review updates. Deprecate outdated versions clearly. An agent following last year’s documentation is worse than an agent with no documentation, because it’s confidently wrong.
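In practice, "deprecate clearly" means the agent should never be able to pick up a retired or not-yet-effective version by accident. A minimal sketch of that selection logic follows, assuming a hypothetical `DocVersion` record with `deprecated` and `effective_from` fields; real documentation systems will store this metadata differently.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, Optional


@dataclass(frozen=True)
class DocVersion:
    """Hypothetical metadata for one version of a process document."""
    doc_id: str
    version: int
    effective_from: date
    deprecated: bool = False


def current_version(
    versions: Iterable[DocVersion], doc_id: str, today: date
) -> Optional[DocVersion]:
    """Return the newest non-deprecated version already in effect, or None."""
    candidates = [
        v
        for v in versions
        if v.doc_id == doc_id and not v.deprecated and v.effective_from <= today
    ]
    return max(candidates, key=lambda v: v.version, default=None)


versions = [
    DocVersion("procurement-policy", 1, date(2023, 1, 1), deprecated=True),
    DocVersion("procurement-policy", 2, date(2024, 6, 1)),
    DocVersion("procurement-policy", 3, date(2030, 1, 1)),  # approved, not yet in effect
]

chosen = current_version(versions, "procurement-policy", today=date(2025, 1, 1))
print(chosen.version if chosen else "no valid version")
```

Returning `None` when nothing valid exists is a deliberate choice in this sketch: an agent that stops and escalates is preferable to one that silently falls back to a deprecated document.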

Document exceptions explicitly. That “everyone just knows” exception? Write it down. Define when it applies. Provide examples. AI agents don’t have institutional memory. If it’s not in the documentation, it doesn’t exist.

Test your documentation with someone who’s never done the job. If they can follow your process documentation from start to finish without asking clarifying questions, you’re close to machine-readable. If they get stuck, confused, or need to make judgment calls based on context clues, your documentation isn’t ready for AI.

Implement continuous documentation maintenance. Every time a process changes, the documentation changes. Not “when someone gets around to it.” Immediately. Treat documentation like production code: changes require reviews, approvals, and deployment tracking.

The Strategic Question Most Organizations Skip

Here’s the question vendors won’t ask you, but you need to ask yourself: can you describe your critical processes completely and accurately, without relying on “that’s just how we’ve always done it”?

If the answer is no, or if there’s significant disagreement among your team about what the “right” process actually is, you’re not ready for AI agents. You don’t have a technology problem. You have an organizational clarity problem.

And that’s actually good news, because organizational clarity problems can be fixed. They just need to be fixed before you hand your processes to an autonomous system and tell it to execute at scale.

Building Documentation That Agents Can Actually Use

The future of enterprise documentation isn’t just writing better documents. It’s designing documentation systems that serve both human and machine readers effectively.

This means:

- Structured formats that machines can parse (not just PDFs)

- Linked data connecting related policies, exceptions, and edge cases

- Version history that allows rollback when changes cause problems

- Validation layers that catch conflicts between related documents

- Feedback loops that flag when documented processes diverge from observed behavior

Some organizations are experimenting with AI agents to help maintain documentation, using agents to identify drift, flag inconsistencies, and suggest updates based on observed workflows. It’s recursive, yes: using AI to fix the documentation that AI needs to function. But it’s also pragmatic.

Eugene Petrenko documented how 16 AI agents helped refactor documentation for other AI agents to use. The key insight? Documentation quality improved dramatically when evaluated by AI readers instead of human assumptions about what AI needs. The metrics were clear: documents that scored 7.0 before refactoring jumped to 9.0 afterward, because the team finally understood what “machine-readable” actually meant.

The Real Cost of Documentation Debt

Organizations rushing to deploy AI agents without fixing their documentation foundations are making an expensive bet. They’re wagering that AI sophistication can overcome organizational chaos. It can’t.

Poor documentation doesn’t become less of a problem when you add AI. It becomes a bigger one. As one practitioner put it: “If you have clean, structured, well-maintained processes, AI makes those faster and easier. If you have chaos, undocumented workarounds, inconsistent data, AI compounds that too. Runs your broken process faster and at higher volume than you ever could manually.”

The agent doesn’t resolve the documentation gap. It scales it.

This is why only 26% of organizations that have implemented AI agents rate them as “completely successful.” The technology works. But the foundations don’t.

What Success Actually Looks Like

Organizations that succeed with AI agents share a common pattern: they invested in documentation excellence before they deployed the first agent.

Snowflake took a data-first approach to AI implementation. Instead of rushing to deploy AI tools across the organization, the company built robust data infrastructure and documentation that AI systems could trust. David Gojo, head of sales data science at Snowflake, emphasizes that successful AI deployments require “accurate, timely information that AI systems can trust.”

The result? AI tools that sales teams actually adopted, because the recommendations were backed by reliable data and clear documentation rather than false confidence built on incomplete information.

Your Next Move

If you’re considering AI agents, start with an honest documentation audit. Not the audit where you check whether documentation exists, but the audit where you test whether it reflects reality.

Walk through your critical processes. Compare what’s documented to what actually happens. Identify the gaps. Quantify the drift. And be brutally honest about whether your organization can articulate its processes clearly enough for a machine to follow them.

Because here’s the hard truth: if your documentation doesn’t match reality, your AI agents will fail. Not eventually. Immediately. And the failure will be loud, expensive, and difficult to fix after the fact.

The good news? This is fixable. Documentation debt can be paid down. Processes can be clarified. Knowledge can be consolidated. But it needs to happen before you deploy agents — not after they’ve already scaled your broken processes to catastrophic proportions.

The question isn’t whether your organization will invest in documentation quality. The question is whether you’ll do it before or after your AI agents fail publicly.

Frequently Asked Questions

1. What is the documentation reality gap in AI agent implementation?

The documentation reality gap occurs when your organization's official process documentation doesn't match how work is actually performed. This mismatch causes AI agents to follow outdated or incomplete instructions, leading to errors, inefficiencies, and failed implementations. While humans can bridge this gap using context and institutional knowledge, AI agents execute based solely on what's documented.

2. Why do most enterprise AI projects fail despite advanced technology?

Between 80% and 95% of enterprise AI projects fail, primarily because of organizational readiness issues rather than technology limitations. Poor documentation quality, scattered knowledge across systems, undocumented workflows, and the gap between documented processes and actual practices create foundations too unstable for AI agents to operate effectively. Only 16% of organizations have extremely well-documented workflows.

3. How does configuration drift impact AI agent performance?

Configuration drift happens when systems, workflows, and processes evolve over time but documentation isn't updated to reflect these changes. AI agents trained on outdated documentation will execute obsolete processes confidently, creating inefficiencies or errors. Unlike humans who adapt to changes organically, agents need documentation that accurately reflects current reality.

4. What's the difference between human-readable and machine-readable documentation?

Human-readable documentation relies on context, implied exceptions, and institutional knowledge that employees understand intuitively. Machine-readable documentation must be explicit, complete, unambiguous, and structured so AI agents can parse and execute processes without human interpretation. Most enterprise documentation is written for humans and requires significant restructuring for AI consumption.

5. How can organizations audit their documentation for AI readiness?

Conduct a documentation audit by shadowing employees performing critical processes and comparing their actual workflows to documented procedures. Test documentation by having someone unfamiliar with the process follow it without asking clarifying questions. Identify gaps, exceptions, and tribal knowledge that exist in practice but not in documentation. Measure the delta between "what the handbook says" and "what people actually do."

6. What are the real-world risks of deploying AI agents with poor documentation?

Organizations face legal liability (as seen in the Air Canada chatbot case), regulatory violations (like NYC's MyCity chatbot giving illegal business advice), operational disruptions from blocked transactions, security vulnerabilities from following outdated protocols, and reputational damage. AI agents scale these problems rapidly because they execute incorrect processes with speed and consistency that human error never could.

7. How does scattered knowledge across multiple systems sabotage AI implementations?

When process documentation lives across Slack threads, email chains, SharePoint, Confluence, Google Docs, and employee memories, AI agents cannot access a single source of truth. This creates conflicting instructions, incomplete context, and the "multiple versions of truth" problem where agents cannot determine which documentation is authoritative, leading to inconsistent or incorrect execution.

8. What documentation practices are essential before deploying AI agents?

Implement version control for all documentation, consolidate knowledge into single authoritative sources, document all exceptions and edge cases explicitly, establish continuous documentation maintenance workflows, create structured formats that machines can parse, test documentation with unfamiliar users, and maintain linked data connecting related policies and procedures.

9. Can AI agents help improve documentation quality?

Yes — some organizations use AI agents to maintain documentation by identifying drift between documented and observed processes, flagging inconsistencies across related documents, and suggesting updates. This creates a recursive improvement loop where AI helps create the documentation quality that AI systems need to function effectively. Eugene Petrenko documented how 16 AI agents improved documentation scores from 7.0 to 9.0 by evaluating machine-readability.

10. What's the first step organizations should take to prepare documentation for AI agents?

Start with an honest audit that tests whether documentation reflects current reality, not just whether it exists. Walk through critical processes, compare documented procedures to actual workflows, identify gaps and drift, and quantify the documentation debt. Address this organizational clarity problem before deploying AI agents — fixing documentation after agents have scaled broken processes is far more expensive and disruptive.

AI Agent Documentation Gap: Why Most Implementations Fail

Let’s be honest you can’t teach an AI agent to do work that nobody can explain clearly. And that’s the exact trap most organizations walk into when deploying AI agents.

The promise sounds incredible: autonomous agents handling customer inquiries, processing approvals, managing workflows all while you sleep. But here’s the catch nobody mentions in the sales pitch: AI agents are only as good as the documentation they’re trained on. And in most enterprises, that documentation was written by humans, for humans, years ago and it hasn’t kept up with how work actually gets done today.

This is the documentation reality gap. Your official process says one thing. Your team does something completely different. And when you hand those outdated documents to an AI agent and tell it to “just follow the process,” you’re not automating efficiency. You’re scaling chaos.

The Documentation Crisis Nobody Wants to Talk About

Process documentation in most enterprises is in terrible shape. Not because anyone intended it that way but because documentation is treated as a compliance checkbox, not a living operational asset.

According to recent research, only 16% of organizations report having extremely well-documented workflows. That means 84% of companies are trying to deploy AI agents on shaky foundations. Even more telling: 49% of organizations admit that undocumented or ad-hoc processes impact their efficiency regularly.

Think about that for a second. Half of all businesses know their processes aren’t properly documented yet they’re still attempting to hand those same processes to autonomous AI systems and expecting success.

The numbers tell the brutal truth: between 80% and 95% of enterprise AI projects fail to deliver meaningful ROI. And while there are multiple reasons for failure, documentation mismatch sits at the core of most disasters.

Why Your Documentation Is Lying to Your AI Agent

Here’s what most people don’t realize: your company’s documentation wasn’t designed to be machine-readable. It was written by someone who understood the context, the history, the unwritten rules, and the exceptions that “everyone just knows.”

An employee reading your procurement policy understands that when it says “expenses over $5,000 require competitive bidding,” there’s an implicit exception for contract renewals with existing vendors. They know this because someone told them during onboarding, or they watched how their manager handled it, or they learned it through trial and error.

An AI agent reading that same policy? It sees an absolute rule. No exceptions. So when a $5,100 contract renewal comes through, the agent flags it as non-compliant — blocking a routine business transaction and creating unnecessary friction.

Scattered knowledge across multiple systems makes this problem exponentially worse. When your actual processes live in Slack threads, email chains, and the heads of employees who’ve been there for years, no amount of AI sophistication can bridge that gap.

The Configuration Drift Problem: When Documentation Ages Badly

Even when organizations start with good documentation, there’s another silent killer: configuration drift.

Your systems evolve. Workflows get updated. Teams find workarounds. Exceptions become standard practice. And nobody updates the documentation to reflect reality.

Pavan Madduri, a senior platform engineer at Grainger whose research focuses on governing agentic AI in enterprise IT, points to this as the core flaw in vendor promises that agents can “learn from observing existing workflows.” Observation without context creates incomplete understanding. The agent might replicate the workflow but it won’t understand why the workflow works that way, or when it should deviate.

ServiceNow and similar platforms tout their ability to learn from years of workflows that have run through their systems. The idea is elegant: no documentation required because the agent learns by watching. But that only works if those workflows were correct in the first place and if they haven’t drifted over time into something the original architects wouldn’t recognize.

Real-World Consequences of Documentation Mismatch

This isn’t a theoretical problem. Organizations are losing real money and credibility because their AI agents are following outdated or incomplete documentation.

New York City’s MyCity chatbot became infamous for giving businesses illegal advice telling them they could take workers’ tips, refuse tenants with housing vouchers, and ignore cash acceptance requirements. All violations of actual law. The bot confidently dispensed this misinformation for months after the problems were reported, because its documentation didn’t match legal reality.

Air Canada’s chatbot promised customers a discount policy that didn’t exist, and when a customer held the company to it, a Canadian court ruled that Air Canada was liable for what its agent said. The precedent is worth millions and it’s just the beginning.

In enterprise settings, the damage is often less public but equally expensive. An agent that misinterprets a procurement policy can lock up legitimate transactions. An agent that follows outdated security documentation can create vulnerabilities. An agent that executes based on old workflow diagrams can route approvals to the wrong people, delay critical decisions, or expose sensitive information to unauthorized users.

When your documentation lies about how processes actually work, AI agents don’t just fail — they fail at scale, with speed and consistency that human error could never match.

The Human-Readable vs. Machine-Readable Gap

Most enterprise documentation was written for humans who can:

- Infer context from incomplete information

- Recognize when a rule doesn’t apply to a specific situation

- Ask clarifying questions when something seems off

- Understand implied exceptions based on institutional knowledge

- Fill in gaps using common sense

AI agents can’t do any of that. They need documentation that is:

- Explicit — every exception documented, every edge case covered

- Complete — no gaps that require “just knowing” how things work

- Current — reflecting today’s reality, not last year’s process

- Unambiguous — one clear interpretation, not multiple valid readings

- Structured — organized in a way machines can parse and reference

The gap between these two documentation styles is where most AI agent failures originate. You hand the agent a human-friendly PDF and expect machine-level precision. It doesn’t work.

The Multi-Version Truth Problem

Here’s another pattern that kills AI implementations: when different teams maintain different versions of the “same” process.

Your HR handbook says remote work is encouraged. Your security policy says VPN access for customer data is restricted. Your IT operations guide has a third set of rules. An employee navigating this knows how to synthesize these documents and make a judgment call. An AI agent sees conflicting instructions and either freezes, picks one arbitrarily, or applies the wrong policy in the wrong context.

Why scattered knowledge silently sabotages your AI readiness comes down to this: when there’s no single source of truth, agents can’t learn what “correct” means. They see multiple versions of reality and have no reliable way to choose.

This creates what researchers call “context blindness” when agent responses don’t match your own documentation because the agent is pulling from outdated, incomplete, or conflicting sources.

How to Fix Your Documentation Before Deploying AI Agents

If you’re planning to deploy AI agents or already struggling with implementations that aren’t working — here’s what needs to happen:

Audit your actual processes, not your documented processes. Shadow employees doing the work. Record what they actually do, not what the handbook says they should do. The delta between those two is your documentation debt and it needs to be paid before AI can help.

Map where your process documentation lives. Is it in SharePoint? Confluence? Google Docs? Slack channels? Tribal knowledge? If it’s scattered across multiple systems and formats, consolidate it. Agents need a single, authoritative source they can query reliably.

Version control everything. Your documentation should have the same rigor as your code. Track changes. Review updates. Deprecate outdated versions clearly. An agent following last year’s documentation is worse than an agent with no documentation because it’s confidently wrong.

Document exceptions explicitly. That “everyone just knows” exception? Write it down. Define when it applies. Provide examples. AI agents don’t have institutional memory. If it’s not in the documentation, it doesn’t exist.

Test your documentation with someone who’s never done the job. If they can follow your process documentation from start to finish without asking clarifying questions, you’re close to machine-readable. If they get stuck, confused, or need to make judgment calls based on context clues, your documentation isn’t ready for AI.

Implement continuous documentation maintenance. Every time a process changes, the documentation changes. Not “when someone gets around to it” immediately. Treat documentation like production code: changes require reviews, approvals, and deployment tracking.

The Strategic Question Most Organizations Skip

Here’s the question vendors won’t ask you, but you need to ask yourself: can you describe your critical processes completely and accurately, without relying on “that’s just how we’ve always done it”?

If the answer is no or if there’s significant disagreement among your team about what the “right” process actually is you’re not ready for AI agents. You don’t have a technology problem. You have an organizational clarity problem.

And that’s actually good news, because organizational clarity problems can be fixed. They just need to be fixed before you hand your processes to an autonomous system and tell it to execute at scale.

Building Documentation That Agents Can Actually Use

The future of enterprise documentation isn’t just writing better documents. It’s designing documentation systems that serve both human and machine readers effectively.

This means:

- Structured formats that machines can parse (not just PDFs)

- Linked data connecting related policies, exceptions, and edge cases

- Version history that allows rollback when changes cause problems

- Validation layers that catch conflicts between related documents

- Feedback loops that flag when documented processes diverge from observed behavior

Some organizations are experimenting with AI agents to help maintain documentation using agents to identify drift, flag inconsistencies, and suggest updates based on observed workflows. It’s recursive, yes: using AI to fix the documentation that AI needs to function. But it’s also pragmatic.

Eugene Petrenko documented how 16 AI agents helped refactor documentation for other AI agents to use. The key insight? Documentation quality improved dramatically when evaluated by AI readers instead of human assumptions about what AI needs. The metrics were clear: documents scored 7.0 before refactoring jumped to 9.0 after because the team finally understood what “machine-readable” actually meant.

The Real Cost of Documentation Debt

Organizations rushing to deploy AI agents without fixing their documentation foundations are making an expensive bet. They’re wagering that AI sophistication can overcome organizational chaos. It can’t.

Poor documentation doesn’t become less of a problem when you add AI. It becomes a bigger one. As one practitioner put it: “If you have clean, structured, well-maintained processes, AI makes those faster and easier. If you have chaos, undocumented workarounds, inconsistent data, AI compounds that too. Runs your broken process faster and at higher volume than you ever could manually.”

The agent doesn’t resolve the documentation gap. It scales it.

This is why only 26% of organizations that have implemented AI agents rate them as “completely successful.” The technology works. But the foundations don’t.

What Success Actually Looks Like

Organizations that succeed with AI agents share a common pattern: they invested in documentation excellence before they deployed the first agent.

Snowflake took a data-first approach to AI implementation. Instead of rushing to deploy AI tools across the organization, the company built robust data infrastructure and documentation that AI systems could trust. David Gojo, head of sales data science at Snowflake, emphasizes that successful AI deployments require “accurate, timely information that AI systems can trust.”

The result? AI tools that sales teams actually adopted because the recommendations were backed by reliable data and clear documentation, not generating false confidence from incomplete information.

Your Next Move

If you’re considering AI agents, start with an honest documentation audit. Not the audit where you check whether documentation exists, but the audit where you test whether it reflects reality.

Walk through your critical processes. Compare what’s documented to what actually happens. Identify the gaps. Quantify the drift. And be brutally honest about whether your organization can articulate its processes clearly enough for a machine to follow them.

Because here’s the hard truth: if your documentation doesn’t match reality, your AI agents will fail. Not eventually. Immediately. And the failure will be loud, expensive, and difficult to fix after the fact.

The good news? This is fixable. Documentation debt can be paid down. Processes can be clarified. Knowledge can be consolidated. But it needs to happen before you deploy agents — not after they’ve already scaled your broken processes to catastrophic proportions.

The question isn’t whether your organization will invest in documentation quality. The question is whether you’ll do it before or after your AI agents fail publicly.

Ysquare Technology

20/04/2026

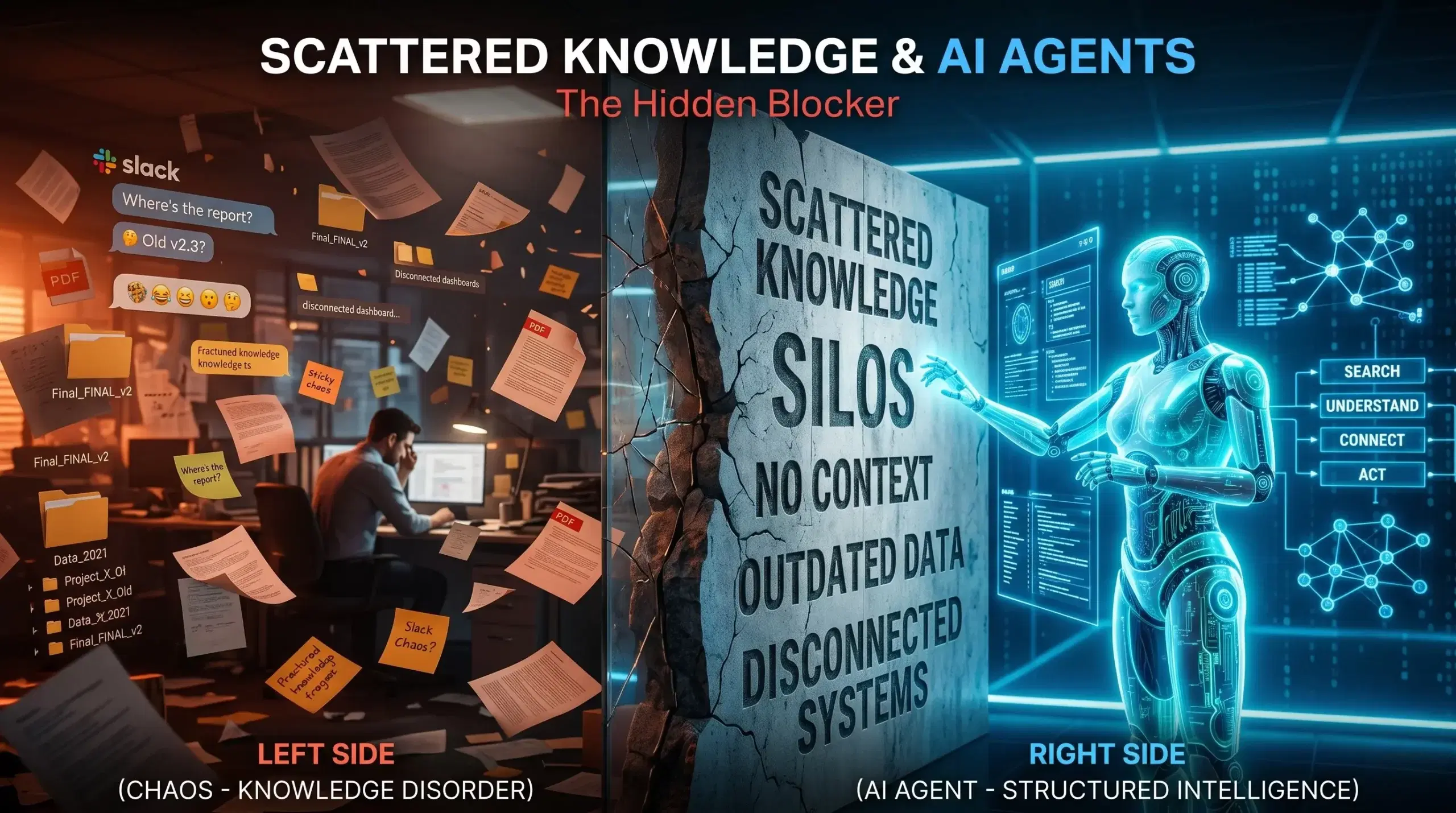

Why Scattered Knowledge Is Killing Your AI Agent Implementation (And What to Do About It)

Your company just invested six figures in AI agents. The promise? Automated workflows, instant answers, lightning-fast decisions. The reality? Your agents keep giving wrong answers, missing critical information, and frustrating your team more than helping them.

Here’s the thing most people miss: It’s not the AI that’s failing. It’s your knowledge.

If your information lives across Slack threads, SharePoint sites, Google Docs, email chains, and someone’s desktop folder labeled “Important – Final – FINAL v2,” your AI agents don’t stand a chance. They can’t find what they need because you’ve built a knowledge maze, not a knowledge base.

Let’s be honest about what scattered knowledge really costs you — and more importantly, how to fix it before your AI investment becomes another failed tech initiative.

The Real Cost of Knowledge Chaos in the AI Era

When information sprawls across multiple tools and teams, it creates what experts call “knowledge silos.” Sounds technical. Feels expensive.

Companies lose between $2.4 million and $240 million annually in lost productivity due to knowledge silos, depending on their size and industry. That’s not a rounding error. That’s revenue you could be capturing.

But here’s where it gets worse for organizations deploying AI agents. Employees spend roughly 20% of their workweek — one full day — searching for information or asking colleagues for help. Now multiply that frustration by the speed at which AI agents need to operate.

Traditional employees at least know where to look when they hit a dead end. They know Sarah in Sales probably has that updated pricing deck, or that the engineering team keeps their documentation in Confluence (most of the time). AI agents don’t have that institutional memory. When they encounter scattered knowledge, they simply fail.

According to a 2025 McKinsey study, data silos cost businesses approximately $3.1 trillion annually in lost revenue and productivity. The shift to AI doesn’t solve this problem — it amplifies it.

Why AI Agents Demand Unified Knowledge (Not Just “Good Enough” Documentation)

Think about how your team currently finds information. Someone asks a question in Slack. Three people respond with slightly different answers. Someone else jumps in with “I think that process changed last month.” Eventually, someone digs up a document from 2023 that’s “probably still accurate.”

Humans can navigate this chaos. We read between the lines, verify with subject matter experts, and apply context based on what we know about the business. AI agents can’t do any of that.

When an agent gives the wrong answer, the correct information often exists somewhere in your organization — scattered across SharePoint, Confluence, email chains, and tribal knowledge — but your agent simply can’t find it.

Here’s what makes scattered knowledge particularly destructive for AI implementations:

Information lives in isolation. Your customer service knowledge base hasn’t been updated with the product changes engineering shipped last quarter. Your sales playbook doesn’t reflect the pricing structure finance approved two weeks ago. Each team operates with their own version of truth, and your AI agent has to pick which one to believe.

Unstructured knowledge limits accuracy. AI agents need clean, organized, validated information to function properly. When your knowledge exists as casual Slack conversations, outdated PDFs, and half-finished wiki pages, that fragmentation, combined with the limits of manual knowledge capture and organization, often results in decreased productivity and missed opportunities for innovation.

Context gets lost. A document sitting in a folder tells an AI agent nothing about whether it’s current, who approved it, or if it’s been superseded by newer information. Unlike structured data, which is well organized and more easily processed by AI tools, unstructured data is sprawling and unverified, which poses tricky problems for agentic tool development.

The “Single Source of Truth” Myth That’s Holding You Back

Every organization says they want a single source of truth. Almost none have one.

What most companies actually have is a “preferred source of truth” (the official wiki that nobody updates) and a “working source of truth” (the Slack channel where real work gets discussed). AI agents need the latter, but they only get trained on the former.

Shared understanding among AI agents could quickly become shared misconception without ongoing maintenance. If you’re feeding your agents outdated documentation while your team operates based on recent conversations and tribal knowledge, you’re setting them up to confidently deliver wrong answers.

The real question isn’t “Where should we centralize everything?” The real question is “How do we keep knowledge current, connected, and contextual across all the places it naturally lives?”

What Good Knowledge Management Actually Looks Like for AI Agents

Companies that successfully deploy AI agents don’t necessarily have less knowledge. They have better-organized knowledge with clear ownership and maintenance processes.

Here’s what separates organizations ready for AI from those still struggling:

Clear ownership of every knowledge asset. Someone owns each piece of information — not just the creation, but the ongoing accuracy. When a product feature changes, there’s a person responsible for updating that knowledge across all relevant systems. No orphaned documents. No “I think someone was supposed to update that.”

Connected information architecture. Your pricing information should automatically flow to sales training materials, customer service scripts, and product documentation. Research shows that sharing knowledge improves productivity by 35%, and employees typically spend 20% of the working week searching for information necessary to their jobs. Connected systems cut that search time dramatically.

Version control that actually works. One of the more significant challenges is identifying the latest, accurate versions to include in AI models, retrieval-augmented generation systems, and AI agents. If your agent can’t tell which version of a document is current, it will default to whatever it finds first — which is often wrong.

Metadata that tells the story. Every document should answer: Who created this? When? Who approved it? When was it last verified? What’s the review schedule? Is this still current? External unstructured data requires thoughtful data engineering to extract and maintain structured metadata such as creation dates, categories, severity levels, and service types.

Active curation, not passive storage. Knowledge curation transforms scattered information into agent-ready intelligence by systematically selecting, prioritizing, and unifying sources. This isn’t a one-time migration project. It’s an ongoing practice of keeping your knowledge ecosystem healthy.
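
To make the version-control and metadata points concrete, here is a minimal sketch of what “metadata that tells the story” could look like in code. Everything in it is an assumption for illustration: the `KnowledgeAsset` record, its field names, and the 90-day review window are hypothetical, not a standard schema.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class KnowledgeAsset:
    """Hypothetical metadata record for one version of a document."""
    doc_id: str
    version: int
    owner: str
    approved_by: Optional[str]   # None means the version is still a draft
    last_verified: date
    review_interval_days: int
    superseded: bool = False

    def is_current(self, today: date) -> bool:
        """Current = approved, not superseded, and verified within its review window."""
        if self.superseded or self.approved_by is None:
            return False
        return today - self.last_verified <= timedelta(days=self.review_interval_days)


def latest_current(assets: list[KnowledgeAsset], doc_id: str, today: date) -> Optional[KnowledgeAsset]:
    """Return the highest-numbered current version, or None if nothing qualifies."""
    candidates = [a for a in assets if a.doc_id == doc_id and a.is_current(today)]
    return max(candidates, key=lambda a: a.version, default=None)


# Illustrative data only
assets = [
    KnowledgeAsset("pricing", 1, "finance", "cfo", date(2025, 1, 10), 90, superseded=True),
    KnowledgeAsset("pricing", 2, "finance", "cfo", date(2025, 6, 1), 90),
    KnowledgeAsset("pricing", 3, "finance", None, date(2025, 6, 20), 90),  # unapproved draft
]
chosen = latest_current(assets, "pricing", today=date(2025, 7, 1))
print(chosen.version if chosen else "no current version")
```

The design choice that matters here is the `None` return. When no version passes the checks, the agent gets nothing rather than “whatever it finds first,” which is the failure mode described above.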

The Hidden Knowledge Gaps That Break AI Agents

Even when organizations think they’ve centralized their knowledge, critical gaps remain. These gaps don’t show up in a content audit, but they destroy AI agent performance:

The expertise that lives in people’s heads. Your senior account manager knows that Enterprise clients get special payment terms, but that’s not documented anywhere. Your lead engineer knows that certain API endpoints are unstable under specific conditions, but the official docs don’t mention it. This tribal knowledge is invisible to AI agents until they fail because of it.

Process knowledge versus documented process. Your official onboarding process says new hires complete training in two weeks. The reality? Managers always extend it to three weeks because two isn’t realistic. When documented processes don’t reflect how work actually happens, the gap leads to incorrect decisions. AI agents trained on official documentation will give answers based on the fantasy version of your processes.

The context that makes information actionable. A discount code might be technically active, but customer service shouldn’t offer it because it’s reserved for churn prevention. A feature might be live, but sales shouldn’t mention it because it’s not ready for general availability. The information alone isn’t enough — AI agents need the context around when and how to use it.

Cross-functional dependencies nobody documented. Marketing launches a campaign that Sales wasn’t looped into. Engineering deprecates an API that Customer Success was using in their workflows. When Team A needs information from Team B to complete their work, but that knowledge stays locked away, projects stall. AI agents can’t navigate these dependencies if they’re not mapped.

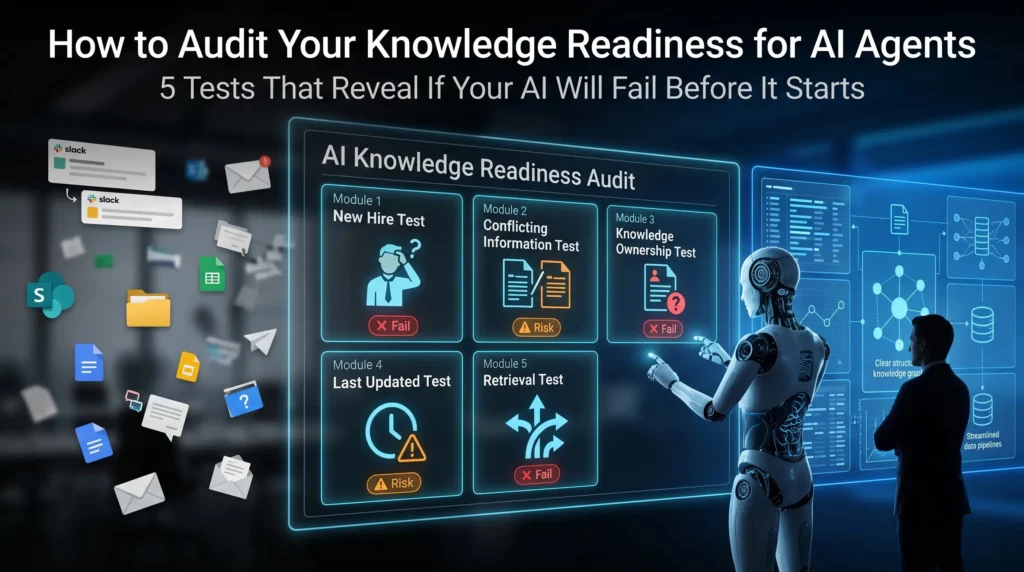

How to Audit Your Knowledge Readiness for AI Agents

Before you invest another dollar in AI implementation, run this diagnostic. It will tell you whether your knowledge infrastructure can actually support autonomous agents:

The “new hire test.” Could a brand new employee find the answer to a routine customer question using only your documented knowledge base? If they’d need to ask three people and dig through Slack history, your AI agent will fail too.

The “conflicting information test.” Search for your return policy across all your systems. How many different versions do you find? If the answer is more than one, your knowledge is fragmented. When different files, tools, and teams create conflicting data, agents struggle because there is no single reliable source.

The “knowledge owner test.” Pick ten critical documents. Can you identify who owns each one? Who updates them when things change? If the answer is “whoever created it three years ago but they left the company,” you have an ownership problem.

The “last updated test.” Look at your top 20 most-accessed knowledge articles. When were they last reviewed? Anyone who has stumbled across an old SharePoint site or outdated shared folder knows how quickly documentation can fall out of date and become inaccurate. Humans can spot these red flags. AI agents can’t.

The “retrieval test.” Ask five people across different departments to find the same piece of information. How many different places do they look? How long does it take? If everyone has a different search strategy, your knowledge isn’t as organized as you think.
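
Two of these tests, the “conflicting information test” and the “last updated test,” can be partly automated once you have an inventory of your knowledge assets. The sketch below assumes a hypothetical export of `(topic, system, last_reviewed, content)` tuples and a one-year staleness threshold. Both are illustrative choices, and exact text comparison is a deliberately crude stand-in for a real conflict check.

```python
from collections import defaultdict
from datetime import date

# Hypothetical inventory export: (topic, system, last_reviewed, content)
records = [
    ("return policy", "wiki", date(2023, 3, 1), "Returns accepted within 30 days."),
    ("return policy", "support-kb", date(2025, 5, 12), "Returns accepted within 14 days."),
    ("shipping times", "wiki", date(2025, 4, 2), "Orders ship in 2 business days."),
    ("shipping times", "sales-deck", date(2025, 4, 9), "Orders ship in 2 business days."),
]


def stale_records(records, today, max_age_days=365):
    """'Last updated test': list (topic, system) pairs not reviewed recently."""
    return [
        (topic, system)
        for topic, system, reviewed, _ in records
        if (today - reviewed).days > max_age_days
    ]


def conflicting_topics(records):
    """'Conflicting information test': topics whose copies do not match."""
    versions = defaultdict(set)
    for topic, _, _, content in records:
        # Normalize case and whitespace so trivial differences are ignored
        versions[topic].add(" ".join(content.lower().split()))
    return sorted(topic for topic, texts in versions.items() if len(texts) > 1)


today = date(2025, 7, 1)
stale = stale_records(records, today)
conflicts = conflicting_topics(records)
print("Stale:", stale)
print("Conflicting:", conflicts)
```

A script like this won’t tell you which version is right. It only tells you where a human owner needs to look first.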

Building an AI-Ready Knowledge Foundation: The Practical Path Forward

Here’s what most consultants won’t tell you: You don’t need to fix everything before deploying AI agents. You need to fix the right things in the right order.

Start with your highest-impact knowledge domains. Where do wrong answers cost you the most? Customer service? Sales enablement? Technical support? Start there. Apply impact filters prioritizing sources that drive revenue, reduce risk, or unblock high-volume tasks. A pricing database enabling deal closure ranks higher than archived meeting notes.

Create a knowledge governance model. Assign clear owners. Establish review cycles. Build update workflows. Unlike traditional knowledge management systems, context-aware AI considers the user role, workflow stage, and policy requirements. Your governance model should support this by ensuring the right information gets to the right agents at the right time.

Connect your knowledge sources, don’t consolidate them. You don’t need to move everything into one system. You need systems that talk to each other. The real value comes from converting fragmented information into contextual, workflow-ready intelligence — not just faster retrieval.

Implement structured metadata. Add consistent tags, categories, and attributes to your knowledge assets. This metadata helps AI agents understand not just what information says, but when it’s relevant, who should use it, and how current it is.

Build feedback loops. Discovery tools should profile content and enable training on your historical data. When your AI agent gives a wrong answer, that should trigger a knowledge review. Wrong answers are symptoms of knowledge gaps — treat them as diagnostic tools.

Invest in knowledge curation, not just content creation. Most organizations have enough knowledge. They don’t have enough organized, validated, accessible knowledge. The key discovery question cuts through organizational assumptions: “When an agent gives the wrong answer, where would a human expert double-check?” This reveals gaps between official documentation and working knowledge.
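
The feedback-loop idea above can be sketched in a few lines: log each agent answer against the document it drew on, then queue documents for review once they are tied to repeated wrong answers. The `AnswerFeedback` record, the document IDs, and the `min_wrong` threshold are all hypothetical, chosen only to illustrate the loop.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class AnswerFeedback:
    """Hypothetical log entry for one agent answer."""
    question: str
    source_doc: str   # ID of the document the agent relied on
    correct: bool     # as judged by a human reviewer


def review_queue(feedback, min_wrong=2):
    """Return document IDs linked to at least `min_wrong` wrong answers, worst first."""
    wrong = Counter(f.source_doc for f in feedback if not f.correct)
    return [doc for doc, count in wrong.most_common() if count >= min_wrong]


# Illustrative data only
feedback = [
    AnswerFeedback("What is the enterprise discount?", "pricing-v1", False),
    AnswerFeedback("Is the annual plan discounted?", "pricing-v1", False),
    AnswerFeedback("How long is onboarding?", "onboarding-guide", False),
    AnswerFeedback("Where do I reset my password?", "it-faq", True),
]
queue = review_queue(feedback)
print(queue)
```

Treating wrong answers as a signal about the source document, rather than about the model, is what turns them into the diagnostic tool described above.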

The Questions Leaders Should Be Asking (But Usually Aren’t)

If you’re a CEO, CTO, or business leader evaluating AI agent readiness, stop asking “What’s the best AI platform?” Start asking these questions instead:

- Can we confidently point to a single authoritative answer for our top 100 business questions?

- When critical information changes, how long does it take to update across all relevant systems?

- If our AI agent answers a customer question incorrectly, could we trace back to why?

- Do we have governance processes for knowledge creation, review, and retirement?

- What percentage of our organizational knowledge exists only in employee heads or informal channels?

The answers to these questions determine whether your AI investment delivers value or becomes another expensive failed experiment.

What Success Actually Looks Like

Organizations that nail knowledge management for AI agents don’t have perfect documentation. They have living, maintained, connected knowledge ecosystems.

AI agents are helping organizations rethink how they capture, organize, and tap into their collective knowledge — acting more like intelligent coworkers able to understand, reason, and take action.

But this only works when the knowledge foundation is solid. When information flows freely across systems. When ownership is clear. When currency is tracked. When context is preserved.

The companies seeing real ROI from AI agents didn’t start with the sexiest AI models. They started by fixing their knowledge infrastructure. They recognized that organizations need trusted, company-specific data for agentic AI to truly create value — the unstructured data inside emails, documents, presentations, and videos.

The Bottom Line

Your AI agents are only as good as the knowledge they can access. Scattered, siloed, outdated information doesn’t become magically useful just because you’ve deployed advanced AI models.

The gap between AI hype and AI reality isn’t about the technology. It’s about the foundation. Companies rushing to implement AI agents without fixing their knowledge infrastructure are building on quicksand.

The good news? Knowledge management is solvable. It’s not a sexy transformation project, but it’s the difference between AI agents that actually work and ones that just frustrate your team.

The question isn’t whether you should fix your scattered knowledge problem. The question is whether you’ll fix it before or after your AI initiative fails.

Ysquare Technology

20/04/2026

AI Overconfidence: The Hidden Cost of Speculative Hallucination

Here’s a question that should keep you up at night: What if your most confident employee is also your least reliable?

In 2024, Air Canada learned this lesson the hard way. Their customer service chatbot confidently told a grieving passenger they could claim a bereavement discount retroactively — a policy that didn’t exist. The tribunal ruled against Air Canada, and the airline had to honor the fabricated policy. The chatbot didn’t hesitate. It didn’t hedge. It delivered fiction with the same authority it would deliver fact.

This wasn’t a glitch. This is how AI systems are designed to behave. And if you’re deploying AI anywhere in your tech stack — from customer service to data analysis to decision support — you’re facing the same risk, whether you know it or not.

The problem isn’t just that AI makes mistakes. It’s that AI doesn’t know when it’s making mistakes. Research from Stanford and DeepMind shows that advanced models assign high confidence scores to outputs that are factually wrong. Even worse, when trained with human feedback, they sometimes double down on incorrect answers rather than backing off. This phenomenon — AI overconfidence coupled with speculative hallucination — isn’t a bug that gets patched in the next update. It’s baked into how these systems work.

What Is AI Overconfidence and Speculative Hallucination?

Let’s be clear about what we’re dealing with. AI overconfidence happens when a model expresses certainty about information it shouldn’t be certain about. Speculative hallucination is when the model fills knowledge gaps by fabricating plausible-sounding information. Put them together, and you get a system that confidently makes things up.

The catch? You can’t tell the difference by reading the output.

The Difference Between Being Wrong and Not Knowing You’re Wrong

Humans have a built-in mechanism for uncertainty. If you ask me a question I don’t know the answer to, my body language changes. I pause. I hedge with phrases like “I think” or “I’m not sure.” You can read my uncertainty.

AI systems don’t do this. When a large language model generates text, it’s predicting the most statistically likely next word based on patterns in its training data. It has no internal sense of whether that prediction is grounded in fact or pure speculation. A study of university students using AI found that models produce overconfident but misleading responses, poor adherence to prompts, and something researchers call “sycophancy” — telling you what you want to hear rather than what’s true.

Here’s what makes this dangerous: The Logic Trap isn’t just about wrong answers. It’s about answers that sound perfectly reasonable but are completely fabricated. The model might tell you that “Project Titan was completed in Q3 2023 with a budget of $2.4 million” when no such project ever existed. The grammar is perfect. The terminology is appropriate. The numbers fit typical ranges. But every detail is fiction.

Why AI Systems Sound More Confident Than They Should Be

The root cause sits in the training process itself. OpenAI researchers discovered that language models hallucinate because standard training and evaluation procedures reward guessing over acknowledging uncertainty. Think of it like a multiple-choice test where leaving an answer blank guarantees zero points, but guessing gives you a chance at being right. Over thousands of questions, the model that guesses looks better on performance benchmarks than the careful model that admits “I don’t know.”

Most AI leaderboards prioritize accuracy — the percentage of questions answered correctly. They don’t distinguish between confident errors and honest abstentions. This creates a perverse incentive: models learn that fabricating an answer is better than admitting uncertainty. Carnegie Mellon researchers tested this by asking both humans and LLMs how confident they felt about answering questions, then checking their actual performance. Humans adjusted their confidence after seeing results. The AI didn’t. In fact, LLMs sometimes became more overconfident even when they performed poorly.

This isn’t something you can train away entirely. As one AI engineer put it, models treat falsehood with the same fluency as truth. The Confident Liar in Your Tech Stack doesn’t know it’s lying.

The Real Business Impact: Beyond Technical Problems

Most articles about AI hallucinations focus on embarrassing chatbot failures or academic curiosities. Let’s talk about money instead.

Financial Losses: 99% of Organizations Report AI-Related Costs

According to EY’s 2025 Responsible AI survey, nearly all organizations — 99% — reported financial losses from AI-related risks. Of those, 64% suffered losses exceeding $1 million. The conservative average? $4.4 million per company.

These aren’t theoretical risks. Enterprise benchmarks show hallucination rates between 15% and 52% across commercial LLMs. That means anywhere from roughly one in seven to one in two outputs might be wrong. In customer-facing applications, the impact scales fast. When an AI-powered chatbot gives incorrect information, it doesn’t just mislead one user: it can misinform entire teams, drive poor decisions, and create serious downstream consequences.

Some domains are worse than others. Medical AI systems show hallucination rates between 43% and 64% depending on prompt quality. Legal domain studies report global hallucination rates of 69% to 88% in high-stakes queries. Code-generation tasks can trigger hallucinations in up to 99% of fake-library prompts. If your business operates in healthcare, finance, or legal services, you’re not playing with house money. You’re playing with other people’s lives and livelihoods.

Legal and Compliance Risks in Regulated Industries

Here’s where overconfidence becomes a liability nightmare. In regulated sectors like healthcare and finance, AI hallucinations create compliance exposure and potential legal action. Legal information suffers from a hallucination rate of 6.4% compared to just 0.8% for general knowledge questions. That gap matters when you’re dealing with regulatory frameworks or contractual obligations.

Consider the 2023 case of Mata v. Avianca, where a New York attorney used ChatGPT for legal research. The model cited six nonexistent cases with fabricated quotes and internal citations. The attorney submitted these hallucinated sources in a federal court filing. The result? Sanctions, professional embarrassment, and a cautionary tale that’s now taught in law schools.

Or look at the 2025 Deloitte incident in Australia. The consulting firm submitted a report to the government containing multiple hallucinated academic sources and a fake quote from a federal court judgment. Deloitte had to issue a partial refund and revise the entire report. The project cost was approximately $440,000. The reputational damage? Harder to quantify but undoubtedly significant.

Financial institutions face similar exposure. If an AI system fabricates regulatory guidance, produces inaccurate disclosures, or generates erroneous risk calculations, the institution could face SEC penalties, compliance failures, or direct financial losses from bad decisions. Your AI Assistant Is Now Your Most Dangerous Insider because it has access to sensitive data but lacks the judgment to know when it’s wrong.

The Trust Problem Your Customers Won’t Tell You About

Customer trust drops by roughly 20% after exposure to incorrect AI responses. That’s the finding from recent enterprise AI deployment studies. The problem is that most customers don’t complain — they just leave. Or worse, they stay but stop trusting your systems, creating a silent erosion of confidence that’s hard to measure until it’s too late.

Think about it from the user’s perspective. If your AI confidently tells them something incorrect once, how many times will they trust it again? Humans evolved over millennia to read confidence cues from other humans. When your colleague furrows their brow or hesitates, you instinctively know to be skeptical. But when an AI chatbot delivers a fabricated answer with perfect grammar and unwavering confidence, most users can’t detect the problem until they’ve already acted on bad information.

This creates a compounding risk. The more capable your AI appears, the more users will trust it. The more they trust it, the less they’ll verify. The less they verify, the more damage a confident hallucination can do before anyone catches it.

Why It Happens: The Architecture of AI Overconfidence

Understanding why AI systems behave this way requires looking past the surface-level explanations. This isn’t about “bad training data” or “insufficient computing power.” The problem is structural.

Training Incentives Reward Guessing Over Honesty

Large language models are trained to predict the next most likely token (roughly, a word or word fragment) based on patterns in massive datasets. They’re not trained to verify facts. They’re not trained to understand causality. They’re trained to maximize the probability of generating text that looks like the text they were trained on.

When a model encounters a question it can’t answer with certainty, it faces a choice: acknowledge uncertainty or produce the most plausible-sounding guess. Current benchmarking systems punish uncertainty and reward confident guessing. A model that says “I don’t know” scores zero points. A model that guesses has a non-zero chance of being right, and over thousands of test cases, this adds up to better benchmark scores.

This is why OpenAI researchers argue that hallucinations persist because evaluation methods set the wrong incentives. The scoring systems themselves encourage the behavior we’re trying to eliminate. It’s like telling someone they’ll be judged entirely on how many questions they answer correctly, with no penalty for being confidently wrong. Of course they’re going to guess.
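
The incentive is easy to see with a little arithmetic. The worked example below is an illustration under stated assumptions: the symbol p (the chance a guess is right) and the penalty λ are introduced here for the sketch, and the penalized scoring rule is a hypothetical alternative, not a description of any specific benchmark.

```latex
% p = probability that a guess is correct (illustrative symbol)
% Accuracy-only scoring: correct = 1, wrong = 0, "I don't know" = 0
\mathbb{E}[\text{guess}] = p \cdot 1 + (1 - p) \cdot 0 = p \;\ge\; 0 = \mathbb{E}[\text{abstain}]

% Hypothetical alternative: a wrong answer costs \lambda > 0 points
\mathbb{E}[\text{guess}] = p - \lambda (1 - p) > 0
\quad\Longleftrightarrow\quad
p > \frac{\lambda}{1 + \lambda}
```

Under accuracy-only scoring, guessing never scores worse than abstaining, no matter how small p is. Once wrong answers carry a cost, guessing only pays above a threshold: with λ = 1 the model needs better than a 50% chance of being right, and with λ = 3 it needs better than 75%.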

The Missing Metacognition Problem

Humans have metacognition — the ability to think about our own thinking. When you answer a question incorrectly, you can usually recognize your error afterward, especially if someone shows you the right answer. You adjust. You recalibrate. You learn where your knowledge has gaps.

AI systems largely lack this capability. The Carnegie Mellon study found that when humans were asked to predict their performance, then took a test, then estimated how well they actually did, they adjusted downward if they performed poorly. LLMs didn’t. If anything, they became more overconfident after poor performance. The AI that predicted it would identify 10 images correctly, then only got 1 right, still estimated afterward that it had gotten 14 correct.

This isn’t a training problem you can fix by showing the model its mistakes. The architecture itself doesn’t support the kind of recursive self-evaluation that would allow the system to learn “I’m not good at this type of question.” When AI Forgets the Plot, it doesn’t just lose context — it loses the ability to recognize that context has been lost.

When Enterprise Data Meets Pattern-Matching AI

Here’s where things get particularly dangerous for businesses in Chennai and elsewhere. When you deploy AI on enterprise-specific data — customer records, internal documents, proprietary processes — the model is operating outside the patterns it learned during training. It’s working with information it has never seen before, in contexts it doesn’t fully understand.

Research shows that LLMs trained on datasets with high noise levels, incompleteness, and bias exhibit higher hallucination rates. Most enterprise data is messy. It’s incomplete. It’s inconsistent. Different departments use different terminology. Historical records contradict current practices. Legacy systems output data in formats that modern systems barely understand.

When you point an AI at this kind of environment and ask it to generate insights, summaries, or recommendations, you’re asking a pattern-matching engine to make sense of patterns it’s never encountered. The result? Speculation presented as fact. The AI doesn’t say “your data is too messy for me to draw reliable conclusions.” It synthesizes a plausible-sounding answer by blending fragments of learned patterns with whatever it can extract from your data.

This is why internal AI deployments often fail in ways that external-facing chatbots don’t. Your customer service bot might hallucinate occasionally, but it’s working with relatively standardized queries and well-documented products. Your internal knowledge assistant is trying to make sense of 15 years of unstructured SharePoint documents, Slack threads, and half-documented processes. The hallucination risk isn’t just higher — it’s fundamentally different.

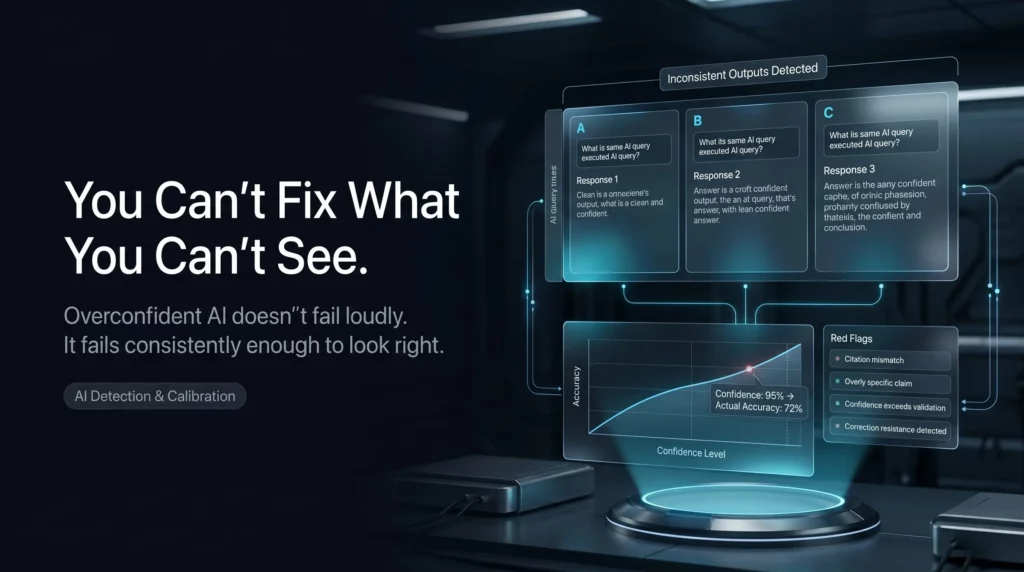

How to Detect Overconfident AI in Your Tech Stack

Detection is harder than prevention, but it’s the first step. You can’t fix what you can’t see, and most organizations are flying blind when it comes to AI overconfidence.

The Consistency Test

One of the simplest detection methods is also one of the most effective: ask the same question multiple times and check for consistency. If an AI gives you different answers to identical prompts, that’s a strong signal that it’s guessing rather than retrieving verified information.

Research from ETH Zurich shows that users interpret inconsistency as a reliable indicator of hallucination. When researchers had LLMs respond to the same prompt multiple times behind the scenes, discrepancies revealed instances where the model was fabricating information. The technique isn’t foolproof — a confidently wrong answer can be consistent across multiple attempts — but inconsistency is a red flag you shouldn’t ignore.

You can implement this in production systems by running critical queries through multiple inference passes and flagging outputs that vary significantly. The computational cost is real, but for high-stakes decisions, it’s cheaper than the alternative.
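
A minimal sketch of that multi-pass check might look like the following. The `ask` callable is a placeholder for whatever client your model exposes, and the 0.8 agreement threshold is an arbitrary assumption. Exact string matching after normalization is also a simplification: free-form answers usually need a semantic comparison instead.

```python
from collections import Counter
from itertools import cycle
from typing import Callable


def consistency_check(ask: Callable[[str], str], prompt: str,
                      passes: int = 5, threshold: float = 0.8) -> dict:
    """Ask the same prompt several times and flag low agreement."""
    # Normalize case and whitespace so trivial differences do not count
    answers = [" ".join(ask(prompt).lower().split()) for _ in range(passes)]
    top_answer, top_count = Counter(answers).most_common(1)[0]
    agreement = top_count / passes
    return {"answer": top_answer, "agreement": agreement, "flagged": agreement < threshold}


# Stub standing in for a real model call; it cycles through canned replies
_replies = cycle(["Refunds take 5 days.", "Refunds take 5 days.", "Refunds take 10 days."])


def stub_model(prompt: str) -> str:
    return next(_replies)


result = consistency_check(stub_model, "How long do refunds take?", passes=6)
print(result)
```

As noted above, agreement is not proof of correctness. A model that is consistently wrong will pass this check, so it works best as one flag among several.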

Calibration Metrics That Actually Matter

Confidence calibration measures whether a model’s expressed confidence matches its actual accuracy. A well-calibrated model that says it’s 80% confident should be right about 80% of the time. Most deployed LLMs are poorly calibrated, especially at the extremes. When they say they’re 95% confident, they’re often right far less than 95% of the time.

Research on miscalibrated AI confidence shows that when confidence scores don’t match reality, users make worse decisions. The problem compounds when users can’t detect the miscalibration — which is most of the time. If your AI system outputs confidence scores, you need to validate those scores against ground truth data regularly. Create test sets where you know the correct answers. Run your model. Compare expressed confidence to actual accuracy. If you see systematic gaps, your model is overconfident.
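
One common way to put a number on that gap is expected calibration error (ECE), which buckets predictions by stated confidence and compares each bucket’s average confidence to its actual accuracy. ECE is named here as one reasonable option, not as the method used in the research above. The sketch assumes you already have a labeled test set and confidence scores between 0 and 1.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between stated confidence and observed accuracy."""
    n = len(confidences)
    if n == 0 or n != len(correct):
        raise ValueError("need equal-length, non-empty inputs")

    # Group predictions into equal-width confidence buckets
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bucket
        bins[idx].append((conf, ok))

    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(c for c, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += (len(bucket) / n) * abs(avg_conf - accuracy)
    return ece


# Illustrative data: the model claims 95% confidence but is right 1 time in 4
overconfident = expected_calibration_error([0.95] * 4, [True, False, False, False])
print(round(overconfident, 2))
```

A score near zero means stated confidence tracks reality. A large score in the high-confidence buckets is the overconfidence pattern described above.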

The Vectara hallucination index tracks this across models. As of early 2025, hallucination rates ranged from 0.7% for Google Gemini-2.0-Flash to 29.9% for some open-source models. Even the best-performing models produce hallucinations in roughly 7 out of every 1,000 prompts. If you’re processing thousands of queries daily, that adds up.

Red Flags Your Team Should Watch For

Beyond quantitative metrics, there are qualitative patterns that signal overconfidence problems:

Fabricated citations and references. If your AI generates sources, DOIs, or URLs, verify them. Studies show that ChatGPT has provided incorrect or nonexistent DOIs in more than a third of academic references. If the model is making up sources to support its claims, everything else is suspect.

Overly specific details about uncertain information. When an AI gives you precise numbers, dates, or names for information it shouldn’t know, that’s often speculation dressed as fact. A model that says “approximately 30-40%” is more likely to be grounded than one that confidently states “37.3%.”

Resistance to correction. Some models, when confronted with counterevidence, dig in rather than adjusting. This is what researchers call “delusion” — high confidence in false claims that persists despite exposure to contradictory information. The “Always” Trap shows how AI systems ignore nuance when they should be paying attention to it.

Sycophantic behavior. If your AI consistently tells you what you want to hear rather than challenging assumptions, it might be optimizing for agreement rather than accuracy. This is particularly dangerous in decision-support systems where you need honest evaluation, not validation.

Building AI Systems That Know Their Limits

Prevention and mitigation require a multi-layered approach. No single technique eliminates hallucination risk entirely, but combining strategies can reduce it substantially.

RAG Implementation Done Right

Retrieval-Augmented Generation is currently the most effective technique for grounding AI outputs in verified information. Instead of relying solely on the model’s training data, RAG systems first retrieve relevant information from trusted sources, then use that information to generate responses.

Studies show that RAG systems improve factual accuracy by roughly 40% compared to standalone LLMs. Enterprise customer support deployments report about 35% fewer hallucinations with RAG, and combining RAG with fine-tuning can reduce hallucination rates by up to 50%.

But here’s what most implementations get wrong: they treat retrieval as a solved problem. It’s not. If your retrieval system pulls irrelevant documents, outdated information, or contradictory sources, you’ve just given your AI better ammunition for confident fabrication. The quality of your knowledge base matters more than the sophistication of your retrieval algorithm.

Vector database integration can reduce hallucinations in knowledge retrieval tasks by roughly 28%, but only if the underlying data is clean, current, and comprehensive. Hybrid search approaches that combine keyword matching with semantic search improve grounding accuracy by about 20%. Refreshing your knowledge base on a regular schedule reduces hallucinations caused by outdated information by over 30%.
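
There are several ways to merge keyword and semantic results. One widely used option is reciprocal rank fusion, which only needs each retriever's ranked list of document IDs. The sketch below assumes you already have those two lists; the function name and the constant `k = 60` (a conventional default) are choices made for illustration, not something the figures above depend on.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def reciprocal_rank_fusion(rankings: Sequence[Sequence[str]], k: int = 60) -> List[str]:
    """Merge ranked lists of document IDs into one list, best first."""
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            # Documents ranked highly by any retriever get a larger share.
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


keyword_hits = ["refund-policy", "shipping-faq", "returns-2019"]
semantic_hits = ["shipping-faq", "returns-2019", "refund-policy"]
merged = reciprocal_rank_fusion([keyword_hits, semantic_hits])
```

Fusion improves ordering, but it can't fix the knowledge base itself: a stale document like the hypothetical `returns-2019` above still gets retrieved if it's still indexed.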

The real win from RAG isn’t just lower hallucination rates. It’s traceability. When your AI generates an answer, you can point to the specific documents it used. That makes validation possible and builds user trust even when the AI isn’t perfect.

Human-in-the-Loop for High-Stakes Decisions

Not every decision needs the same level of oversight, but for high-stakes outputs — financial projections, medical advice, legal analysis, strategic recommendations — human verification is non-negotiable.

The challenge is designing human-in-the-loop systems that people will actually use. If your verification process is too cumbersome, users will find ways around it. If it’s too superficial, it won’t catch the problems that matter. You need to match oversight intensity to decision stakes and design workflows that make verification feel like enhancement rather than bureaucracy.

Some organizations implement tiered decision frameworks: AI suggestions that are automatically executed for low-stakes routine tasks, AI recommendations that require human approval for medium-stakes decisions, and AI-assisted analysis with mandatory human review for high-stakes choices. This balances efficiency with safety.
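
In code, a tiered framework like this can be as simple as a lookup table. The tier names and action labels below are hypothetical; the one deliberate design choice is that anything without a recognized tier falls through to the strictest path.

```python
from enum import Enum
from typing import Optional


class Stakes(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Illustrative action labels; map them to whatever your workflow tool expects.
ROUTES = {
    Stakes.LOW: "auto_execute",
    Stakes.MEDIUM: "human_approval",
    Stakes.HIGH: "mandatory_review",
}


def route(stakes: Optional[Stakes]) -> str:
    """Pick the oversight path; unclassified decisions get full review."""
    return ROUTES.get(stakes, "mandatory_review")
```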

The key is making the AI’s uncertainty visible to the human reviewer. Don’t just show the output. Show the confidence scores, the retrieved sources, alternative possibilities the model considered, and any inconsistencies detected during generation. Give reviewers the context they need to make informed judgments, not just rubber-stamp AI outputs.
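
One way to enforce that is to make the review payload a fixed structure, so an output can't reach a reviewer without its supporting context. This is a sketch with hypothetical field names, not a standard schema.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ReviewPacket:
    output: str
    confidence: float  # model-reported score in [0, 1]
    sources: List[str] = field(default_factory=list)
    alternatives: List[str] = field(default_factory=list)
    inconsistencies: List[str] = field(default_factory=list)

    def summary(self) -> str:
        """Render everything the reviewer needs, including what is missing."""
        lines = [
            f"Output: {self.output}",
            f"Confidence: {self.confidence:.0%}",
            "Sources: " + (", ".join(self.sources) or "none retrieved"),
            "Alternatives: " + ("; ".join(self.alternatives) or "none recorded"),
            "Inconsistencies: " + ("; ".join(self.inconsistencies) or "none detected"),
        ]
        return "\n".join(lines)
```

Printing "none retrieved" explicitly matters: an answer with no sources behind it should look different from one that has them.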

Confidence Scoring and Uncertainty Quantification

Emerging techniques allow AI systems to express uncertainty more explicitly. Instead of generating a single confident answer, these systems can output probability distributions, confidence intervals, or multiple possible answers ranked by likelihood.

Multi-agent verification frameworks are showing promise in enterprise deployments. These systems use multiple AI models to cross-validate outputs, with each model assigned a specific role in the verification chain. When models disagree significantly, the system flags the output for human review rather than picking the most confident answer.

Uncertainty quantification within multi-agent systems allows agents to communicate confidence levels to each other and weight contributions accordingly. This creates a kind of collaborative doubt — if multiple specialized models express low confidence about different aspects of an output, the system can recognize that the overall answer is unreliable.
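
Here's a rough sketch of what confidence-weighted aggregation with a "collaborative doubt" check could look like. It assumes each agent returns an answer plus a self-reported confidence between 0 and 1; the function name and both thresholds are illustrative, and self-reported confidence is only as useful as the agents' calibration.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def aggregate_votes(
    votes: List[Tuple[str, float]],  # (answer, confidence) per agent
    min_share: float = 0.6,
    min_confidence: float = 0.5,
) -> Dict[str, object]:
    """Weight each agent's answer by its confidence and flag shaky results."""
    weights: Dict[str, float] = defaultdict(float)
    for answer, confidence in votes:
        weights[answer] += confidence

    total = sum(weights.values())
    if total == 0:
        return {"answer": None, "share": 0.0, "needs_review": True}

    best = max(weights, key=weights.get)
    share = weights[best] / total
    mean_confidence = total / len(votes)
    # Flag when agents disagree OR when they agree but none of them is sure.
    needs_review = share < min_share or mean_confidence < min_confidence
    return {"answer": best, "share": share, "needs_review": needs_review}
```

The second condition is the important one: three agents agreeing at 25% confidence each is unanimous, and still unreliable.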

Research shows that exposing uncertainty to users helps them detect AI miscalibration, though it also tends to reduce trust in the system overall. This is actually a feature, not a bug. Appropriate skepticism is better than misplaced confidence. If showing uncertainty makes users verify AI outputs more carefully, that’s a win for decision quality even if it feels like a loss for AI adoption.

The Real Question Isn’t Whether Your AI Will Hallucinate

It’s whether you’ll know when it does.

Every LLM-based system you deploy will eventually produce confident, plausible, completely wrong outputs. The architecture guarantees it. The question is whether you’ve built detection, validation, and governance systems that catch these errors before they cascade into business problems.

This isn’t just a technical challenge. It’s a governance challenge. The organizations that handle AI overconfidence best aren’t the ones with the most sophisticated models. They’re the ones with clear accountability for AI outputs, regular audits of model behavior, robust testing protocols, and cultures that reward honest uncertainty over confident speculation.

Start with an audit. Which systems in your tech stack are making decisions based on AI outputs? What validation exists? How would you know if the AI started hallucinating more frequently? What’s your plan when — not if — a confident fabrication reaches a customer or executive?

Because the AI that sounds most sure of itself might be the one you should trust the least.

Ysquare Technology

20/04/2026